What is PCB Data Management?

Successful design and manufacture of any PCB requires managing data. Every PCB project includes a ton of data on the components, front-end schematics, the physical layout, and manufacturing files. Other documentation may also be needed that is not captured in your PCB design software. All of this has to be tracked and managed by the designer, because working with incomplete or out-of-date data will result in a design that doesn't perform as required.

PCB data management encompasses requirements and design information spanning several areas. First, there are functional requirements as to what the final product should do, its specifications and tolerances, and its operating environment. Then there is data associated with each component in various forms (datasheets, entries stored in a design tool library, etc.). Then there is data for the PCB itself: its material properties, physical layout, and production requirements. Finally, designs don't always start from scratch; they may reuse parts of previous successful designs as a starting point.

The critical questions for the designer are:

- Do I have all the data I need?

- Is my design data correct and up to date?

- Has anyone made changes that I don't know about yet?

This article will help guide design engineers in answering these questions, as well as reveal how modern tools are changing data management processes for professional design firms and OEMs.

What is PCB Data Management?

PCB data management is broadly defined as the acquisition, storage, verification, usage, distribution and maintenance of the data used in the design, fabrication, and assembly of a printed circuit board. Data gets created and acquired throughout the progress of a PCB design project, including:

- When generating project and device requirements and statements of work (SOWs)

- In front-end engineering, when a preliminary design is created and component data is gathered

- During physical design, where mechanical and electrical designs are created in CAD software

- Once designs are transferred to manufacturing, where finalized design data is prepared for production

Design decisions in one aspect of the design force changes in others. If the shape of the enclosure changes, a PCB component may now be too tall to fit inside. If the operating environment changes, the design may need to cope with different ambient temperatures or higher vibration levels. A change to a section of control logic may require the power supply to deliver power within different tolerances. The list of possible changes is endless, so it is imperative to have processes that manage the data and address any changes.
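The enclosure example above can be turned into a simple automated check. The following is a minimal sketch, assuming component heights are available as a mapping from reference designator to height in millimeters; the function name, the designators, and the numbers are all hypothetical and not taken from any particular design tool.

```python
def parts_exceeding_clearance(heights_mm: dict[str, float],
                              clearance_mm: float) -> list[str]:
    """Return the reference designators of parts taller than the clearance.

    heights_mm maps a designator (hypothetical examples: "C12", "J3")
    to the component height above the board surface, in millimeters.
    """
    return sorted(ref for ref, height in heights_mm.items()
                  if height > clearance_mm)


# Hypothetical data: the enclosure clearance shrinks from 12 mm to 10 mm.
heights = {"C12": 11.0, "J3": 8.5, "U1": 1.2}
before_change = parts_exceeding_clearance(heights, clearance_mm=12.0)
after_change = parts_exceeding_clearance(heights, clearance_mm=10.0)
```

Re-running a check like this whenever the mechanical data changes is one way to catch the problem before layout is finalized, rather than at assembly.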

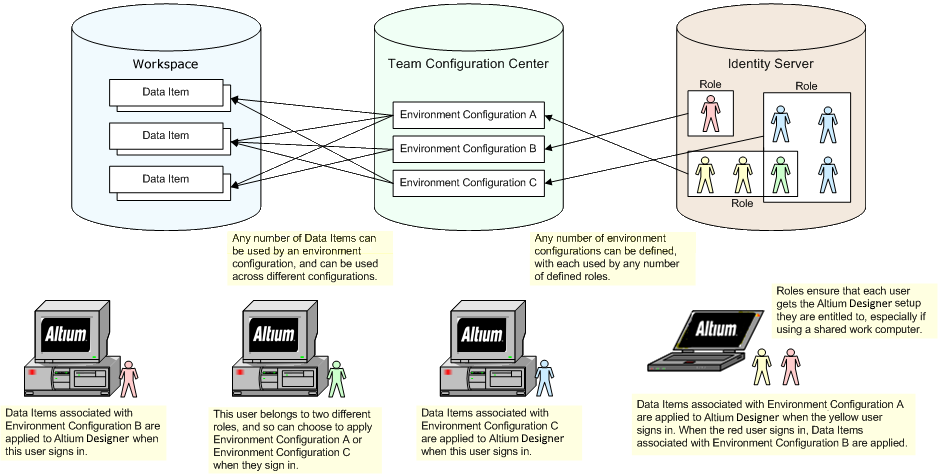

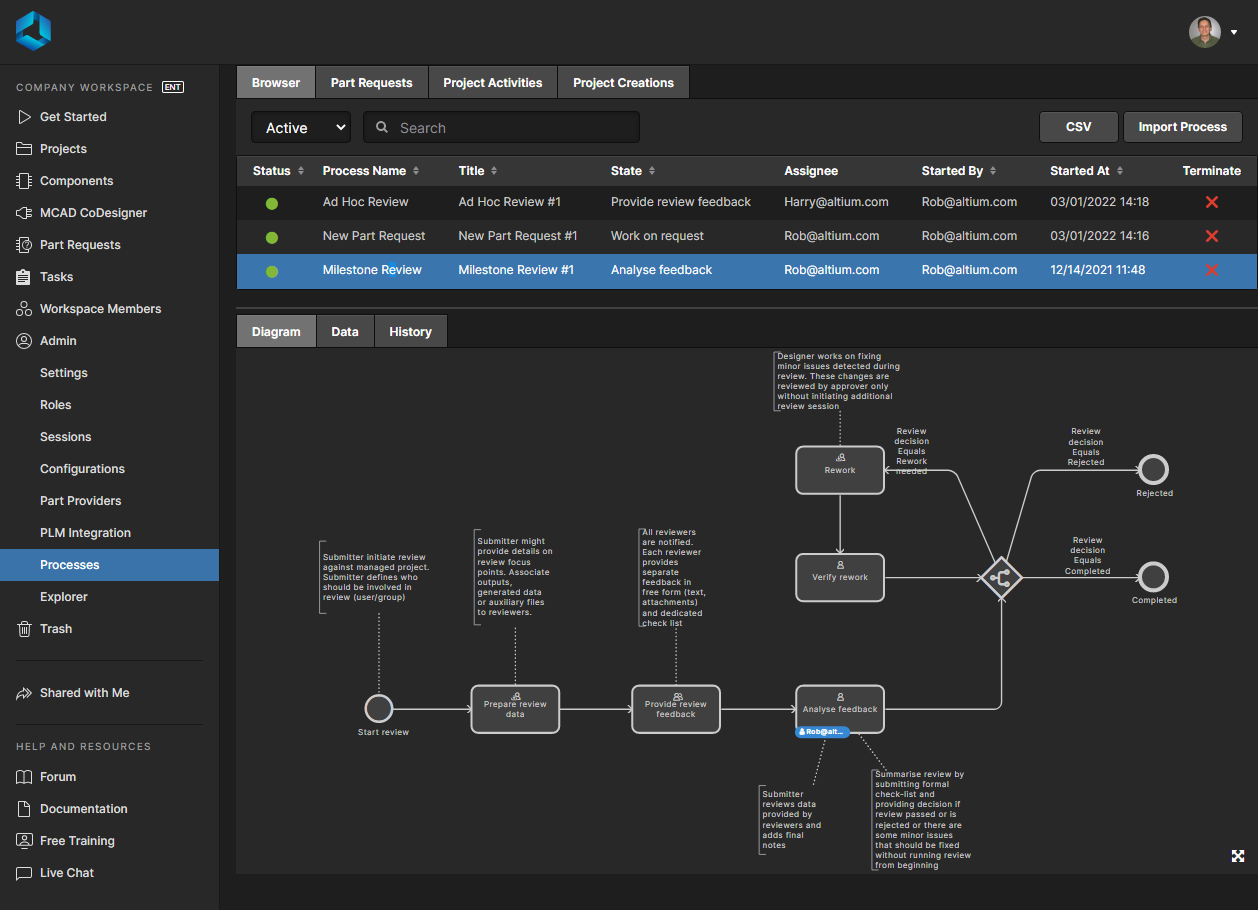

These issues are magnified when there's collaborative work on a new product, whether at the PCB level or on the mechanical design. For example, processes need to ensure everyone on the design team knows that a specification has changed or that a different component with different physical or electrical properties has been swapped into a design. When data is compiled into shared systems that all project stakeholders can access, tracked changes and new information become visible to everyone on the design team.

Let’s break this definition down a bit. Before we can talk about the management of the data itself, we should look at what information we intend to acquire, and how and where we will acquire it. After you’ve been in the PCB industry for any period, you begin to see that much of PCB design is a “cookie cutting” process. Most PCB designs start from the same or very similar information, and the sources are often universal. Just as an acorn grows into a mighty oak only when planted in fertile soil, a project succeeds only when its starting information is sound. I would add that if the starting information for a PCB project is not accurate, then most likely your design will not be accurate either. At this point, it is most important to ensure that your focus is on the quality of the information rather than the quantity.

Creation and Acquisition of Data

Data will be created and compiled by all project stakeholders, including the PCB design team, the product manufacturer, external contractors, and the end customer. Such data includes, but is not limited to:

- Front-end engineering documentation, including customer requirements and SOWs

- Basic design data, including schematics and PCB layout information

- Any component models, which could include mechanical parts like connectors

- Datasheets and any other specifications on components, which are provided by the vendor

- CAD data for the enclosure, as well as its material and assembly information

- Manufacturing data and design outputs, such as fab/assembly drawings and data for tooling

- Code for embedded firmware/software

All of this data has to be tracked, and not all of it is created by the PCB design team. The problem is that the data changes throughout the development process. Customers change their requirements. The design team's understanding of the requirements can also change as ambiguities are resolved and assumptions are challenged.

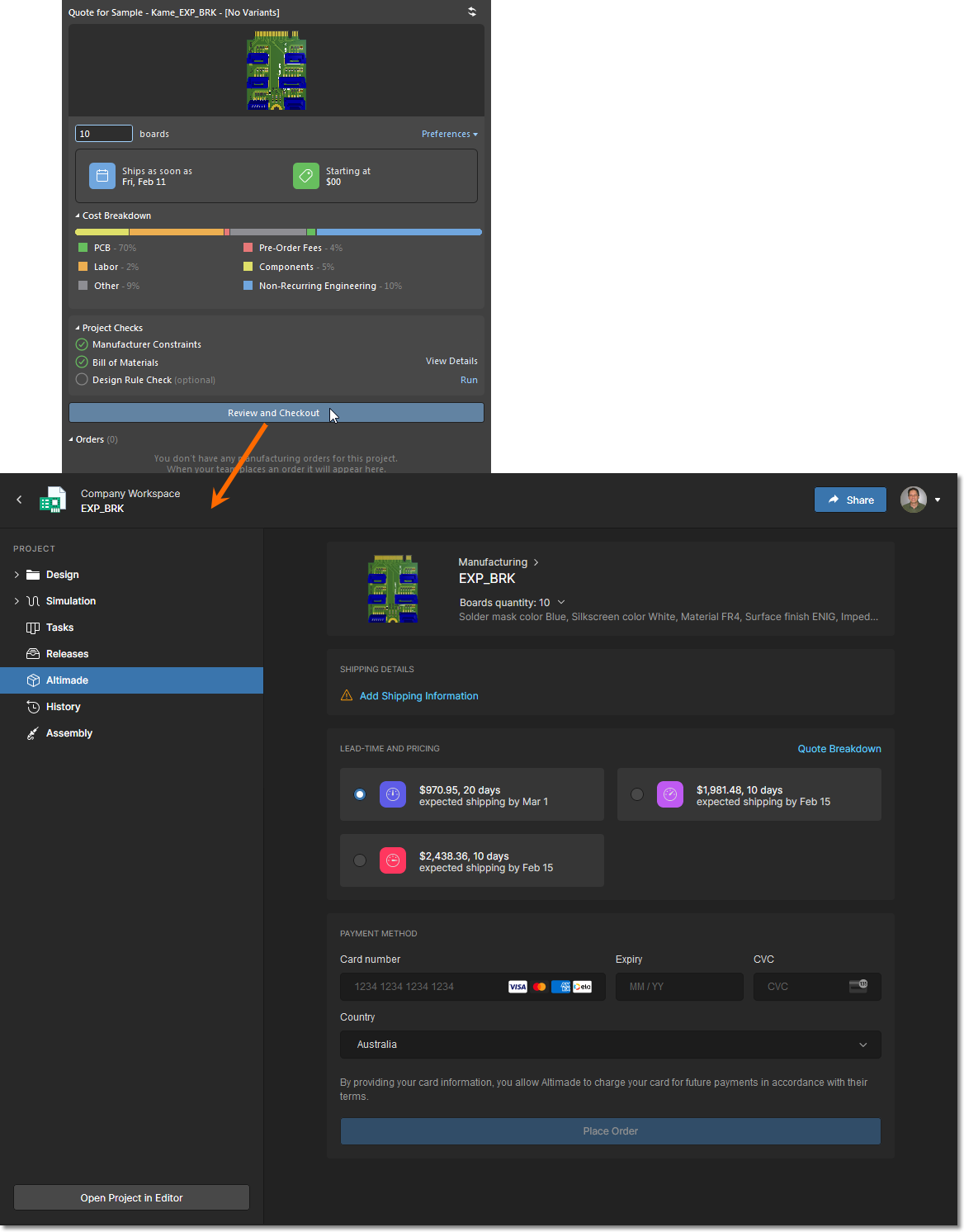

This data management can be even more challenging when you remember not all data is static. For example, while component tolerances and specifications tend to be set in stone, dynamic data such as component prices and lead times can change on a daily basis. Thus many companies implement a centralized PLM/ERP system that integrates with their inventory and supply chain systems. These systems are essentially file storage and sharing systems, but with hierarchy and categorization functions built into them so that changes and new data can be tracked and managed at the project level, file level, or component level.
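One way to picture the split between static and dynamic data is a component record that keeps the two apart and timestamps the part that changes. This is only a sketch under assumed names: `StaticSpec`, `SupplyData`, `ComponentRecord`, and the one-day freshness threshold are illustrative and do not reflect the schema of any real PLM/ERP system.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class StaticSpec:
    """Data that rarely changes once the part is released."""
    part_number: str
    value: str
    tolerance_pct: float


@dataclass
class SupplyData:
    """Data that can change daily, so it carries the date it was fetched."""
    unit_price: float
    lead_time_days: int
    as_of: date


@dataclass
class ComponentRecord:
    spec: StaticSpec
    supply: SupplyData

    def supply_is_stale(self, today: date, max_age_days: int = 1) -> bool:
        """True when price and lead time are older than the allowed age."""
        return (today - self.supply.as_of) > timedelta(days=max_age_days)


# Hypothetical part and values, for illustration only.
record = ComponentRecord(
    spec=StaticSpec(part_number="R-0603-10K", value="10k", tolerance_pct=1.0),
    supply=SupplyData(unit_price=0.01, lead_time_days=14, as_of=date(2024, 1, 1)),
)
```

Freezing the static part makes accidental edits fail loudly, while the timestamp on the supply data lets a tool decide when a refresh from the supply chain is due.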

Component CAD Models and Datasheets

Component datasheets are the most essential documents to acquire, and they must be verified before you use them. These are the go-to documents for everything moving forward, and because of that, they are a critical item; ergo, they must be correct. I have seen too many instances wherein a design was done entirely on the assumption that a datasheet was correct, only to create problems later because it was not verified. The results were disastrous. “Trust, but verify” should be the mantra of every PCB designer. Depending on where a CAD model was sourced, or what is listed in the datasheet, the footprint may be incorrect as well. This should be verified, which is historically one of the job functions of a PCB librarian.

For component and PCB specifications, datasheets are generally obtained from the manufacturer and taken on trust. Typically there are no announcements when datasheets are revised, so the design team is primarily responsible for checking that they are using the latest version and that there are no notifications of errors in the public domain. Often this comes down to engineering experience and choosing components from trusted manufacturers with an established record of providing correct data. A good tip: for parts available from multiple vendors, compare the datasheets from each vendor and investigate any differences. Additionally, vendors with a history of datasheet errors can be moved from trusted status to requiring additional verification checks should their products be used.

So, how do we determine the accuracy of a datasheet? A great technique is to use multiple sources to verify the information. Do not rely strictly on a single part vendor source and its datasheet. Look at various sources and vendors for components and pull all the datasheets from each of them. The datasheets can then be compared to verify that they match.
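The comparison described above can be sketched in a few lines once the key parameters have been transcribed from each datasheet. The function name, vendor labels, and parameter names below are hypothetical; extracting the values from the PDFs themselves is assumed to be done by hand or by another tool.

```python
def find_mismatches(sources: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """Return every parameter whose value differs between sources.

    sources maps a source name to the parameters transcribed from its
    datasheet. A parameter missing from one source is also reported.
    """
    all_params: set[str] = set()
    for params in sources.values():
        all_params.update(params)

    mismatches: dict[str, dict[str, str]] = {}
    for name in sorted(all_params):
        values = {src: params.get(name, "<missing>")
                  for src, params in sources.items()}
        if len(set(values.values())) > 1:
            mismatches[name] = values
    return mismatches


# Hypothetical transcribed values for the same package from two vendors.
datasheets = {
    "vendor_a": {"pin_pitch_mm": "0.50", "body_height_mm": "1.20"},
    "vendor_b": {"pin_pitch_mm": "0.50", "body_height_mm": "1.25"},
}
differences = find_mismatches(datasheets)
```

A mismatch does not say which source is wrong; it only flags the parameter for a person to investigate.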

Pro tip: Personally, I would take verification to the next step; I’d monitor the datasheets from those specific vendors and determine if there are any problems with other product datasheets they provide, as they may also have issues.

Storage and Security

The next point is storage. After obtaining the data, it is vital to have it stored and protected. This is accomplished through the architecture of your component library. It’s virtually guaranteed that your library will be structured entirely differently than the next guy’s. However, no matter what your component library looks like, a few significant things should be in place.

First, one should quickly be able to find specific components. This is often a direct result of utilizing a naming structure or convention that optimizes searchability. One of the more challenging tasks is finding something in a library which has a mish-mash of naming schemes. An excellent resource for this problem is the latest release of IPC-7251 (Through-hole Components) & IPC-7351 (SMT Components). These standards cover the very systematic structure of how the footprints should be named, and this should be enforced whenever a custom footprint is created for a standard package.

Secondly, you want to have a component library that has an easily expandable architecture. This way, as the company grows and the product lines grow, the library grows with it, as well as the list of verified and qualified components available for design reuse.

Finally, having all the data collated and managed is great until something catastrophic happens that affects all the data. Computers can crash or get hacked, data can be exposed publicly through bad file sharing services, and emails might be deleted or corrupted. All of these create risks of exposing, losing, or corrupting intellectual property.

You can accomplish all of these objectives by organizing your component list by category and family in a single secure location. Modern cloud platforms make these tasks easy. You can enforce security and privacy in your stored data while also grouping data into required categories to aid verification and distribution.

Distribution of data to particular people is also crucial, and it is an important part of security in your design process. One way to look at this is to see that data usage is internal to the organization and distribution is external. When working with the distribution of any data, always keep in mind that security is vital. The two big “packages” that come from the data and the design process are the fabrication and assembly information. The standard rule is that these two are sent in opposite directions and should never meet, because an unscrupulous person holding both packages could use them to reproduce your design.

Verification

The first rule of verification is that the person who conducted the work should not also be the person to verify it. The person who conducted the work is often blind to their own mistakes, and the result is they will usually miss them again in the verification step. It is always best practice to use a second person who can look at the data with a fresh set of eyes.

The verification process is an audit process. While components or data are under review, they should be quarantined so that they’re not used in released designs. To clarify that point: before the PCB design is released for fabrication, conduct the audit process on all new components. This way you preserve the PCB design integrity as well as your sanity.
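The two rules above (a second person verifies, and unverified parts stay quarantined) can be expressed as a small state check. This is a sketch with assumed names: `LibraryPart`, `approve`, and `blocking_parts` are hypothetical and not part of any design tool's API.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    QUARANTINED = "quarantined"
    APPROVED = "approved"


@dataclass
class LibraryPart:
    name: str
    created_by: str
    # New parts start in quarantine until someone else audits them.
    status: Status = Status.QUARANTINED


def approve(part: LibraryPart, reviewer: str) -> None:
    """Approve a part, refusing when the reviewer is also the author."""
    if reviewer == part.created_by:
        raise PermissionError("the author of a part cannot approve it")
    part.status = Status.APPROVED


def blocking_parts(parts: list[LibraryPart]) -> list[str]:
    """Names of parts that should block release to fabrication."""
    return [p.name for p in parts if p.status is not Status.APPROVED]
```

A release step could call `blocking_parts` on every part used in the design and refuse to generate fabrication outputs while the list is non-empty.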

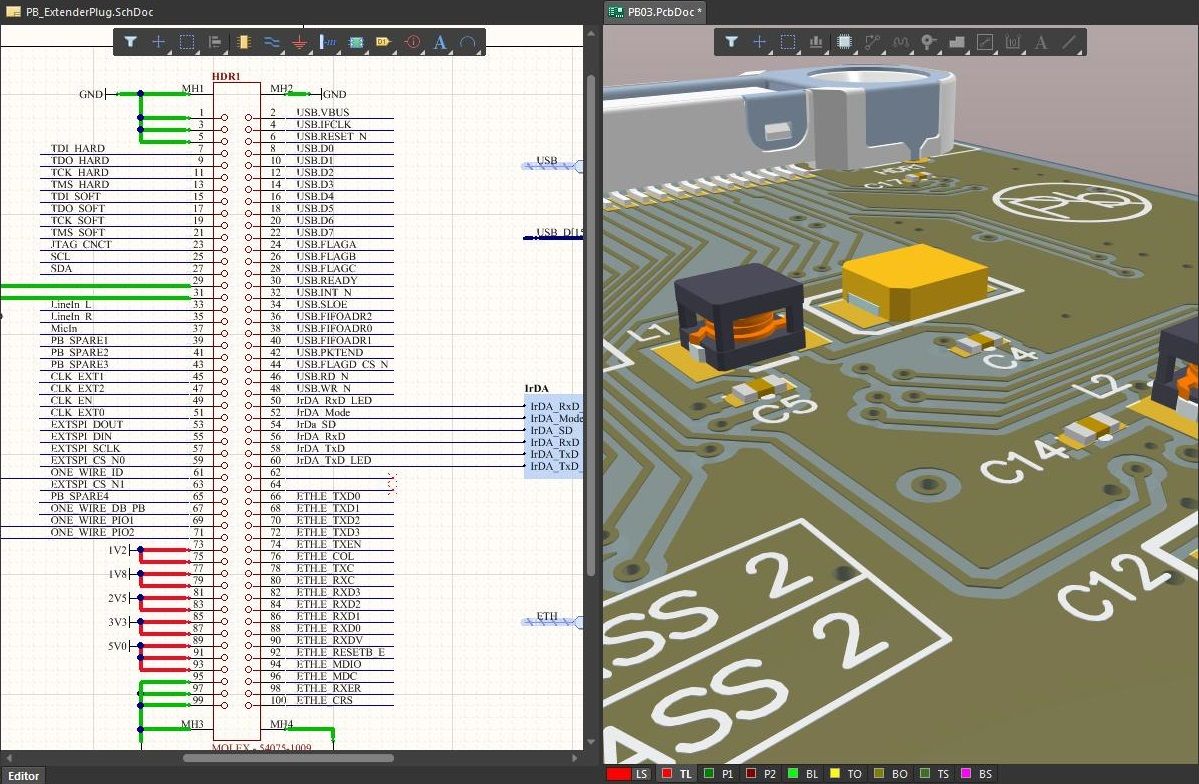

During the PCB design process, you use specific information at particular points of the design. During the schematic part of the design, for example, you are looking specifically at the circuit flow and connectivity, so the component schematic symbols matter most. At this stage, the electronics engineer would be looking at the parametric information for a specific part, making sure that it meets the design requirements for the product.

On the PCB side, you would move over to the PCB footprint and 3D model data. This data is used to verify routing and mechanical requirements. This is also the point when a mechanical engineer would join the party and start his or her work.

Maintenance and Version Control

The final area when looking at the definition is maintenance, revision history tracking, and version control. Since most data used in a PCB project is dynamic (vs. static), the data does change. That means there must be a plan in place to determine what changes have occurred and how they affect the update process in the database. This way, your data stays relevant to the company’s needs as well as the ever-changing electronics industry.
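As a rough illustration of determining what has changed, the sketch below fingerprints every file in a project folder and compares two snapshots. It is not a substitute for a real version control system, and the function names and the idea of storing a manifest per revision are assumptions made for this example.

```python
import hashlib
from pathlib import Path


def fingerprint(path: Path) -> str:
    """SHA-256 digest of a file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def build_manifest(root: Path) -> dict[str, str]:
    """Map each file under root (by relative path) to its fingerprint."""
    return {
        p.relative_to(root).as_posix(): fingerprint(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def diff_manifests(old: dict[str, str], new: dict[str, str]) -> dict[str, list[str]]:
    """List files added, removed, or changed between two manifests."""
    shared = set(old) & set(new)
    return {
        "added": sorted(set(new) - set(old)),
        "removed": sorted(set(old) - set(new)),
        "changed": sorted(name for name in shared if old[name] != new[name]),
    }
```

Saving a manifest alongside each released revision and diffing it against the current folder gives a quick list of files that need review before the next release.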

One challenge is that PCB design datasets are typically vast and contain incompatible elements. The data contained in electrical computer-aided design (ECAD) and mechanical computer-aided design (MCAD) tools are rarely compatible with each other, let alone compatible with the data in requirements management tools. Effective data management requires a single integrated solution that can link tools together with managed libraries of data seamlessly and effortlessly from the design team's perspective.

Any solution needs to collate data, both static and dynamic, from diverse sources into a single source of truth that designers can rely on being up-to-date and accurate. Automating this process to minimize data management overheads and eliminate human error is essential. This requires connecting to the supply chain, enforcing a source of verified component data, and implementing an integrated version control system in the design process.

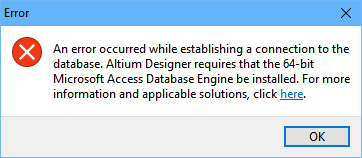

Adopting a solution like Altium to provide a unified data management platform for the development process is an excellent foundation for effective data management processes applied to all of your design data. But there is still work to do in creating automated processes around the development lifecycle to eliminate human error and ensure the validity of the dataset. Monitoring dynamic data for changes and managing updates to the dataset can be an effort-intensive activity that is an ideal candidate for automation, given the potential consequences of using erroneous or out-of-date data.

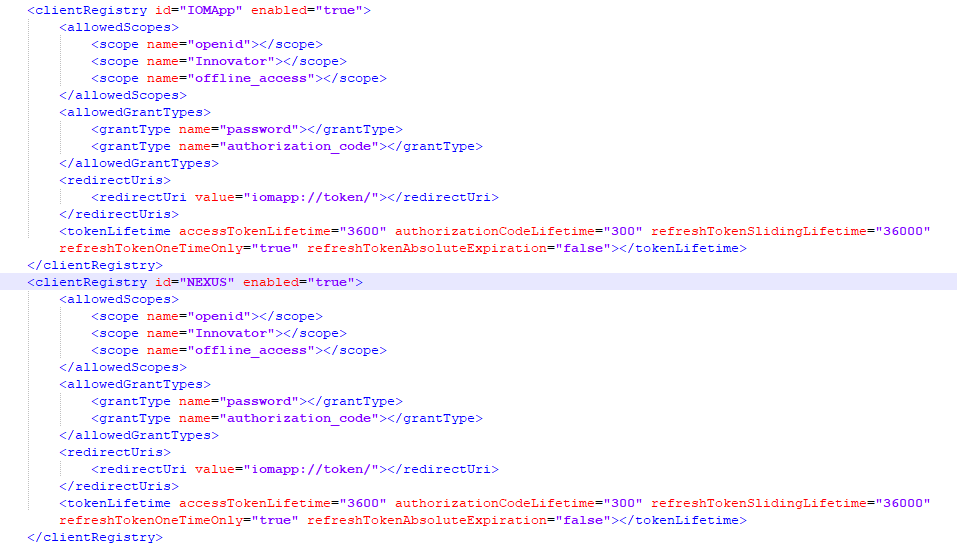

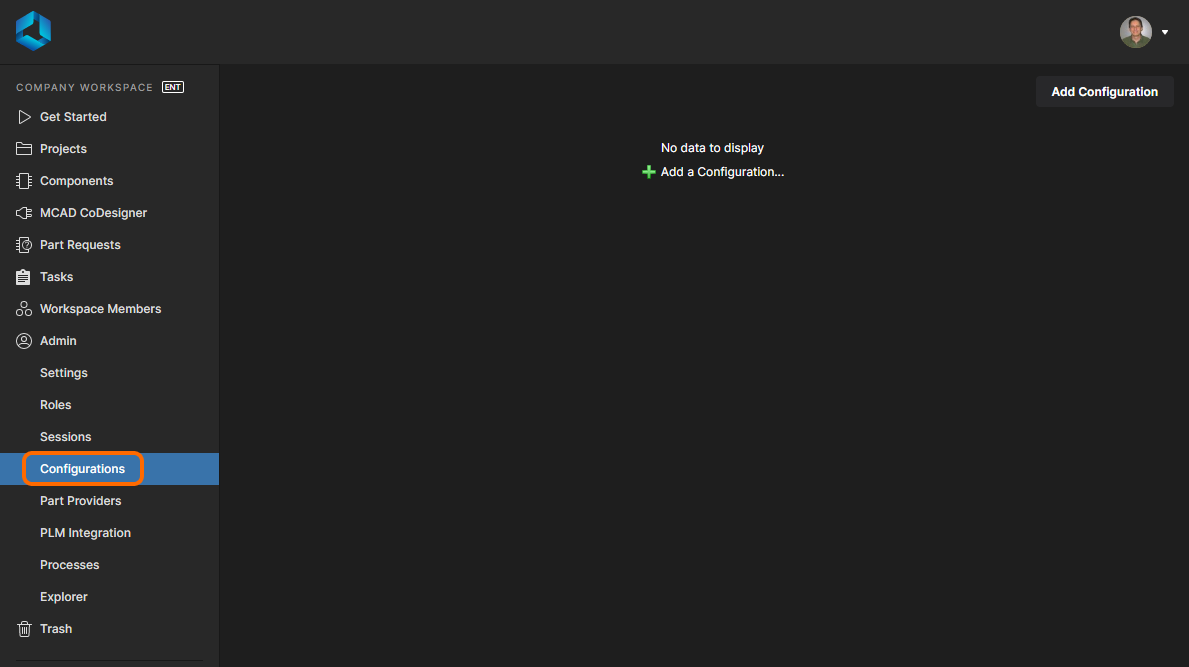

When you need to manage data from your Altium Designer® projects, you can use the integrated library and database features to track proprietary components and projects in the Altium 365™ platform or your on-premises Altium Concord Pro instance. This unique data management system also tracks your manufacturing data for each project revision. You'll also have access to the newest component data from major distributors, as well as lifecycle status for components provided by IHS Markit.

We have only scratched the surface of what’s possible with Altium Designer on Altium 365. Start your free trial of Altium Designer + Altium 365 today.