Designing Hardware in a Memory Shortage: A Field Guide for Engineers and Sourcing Teams

In part one of this series, “How AI Broke the Memory Market,” we looked at how AI data center demand turned memory into a bottleneck and why DRAM and NAND prices are unlikely to normalize quickly. Now we’ll explore how to operate in this environment. If you’re designing or sourcing hardware in 2026, you still need to make choices: which parts to spec, how to structure your designs for flexibility, and how to manage supply chain risk.

We'll cover “next-wave” memory components that are in the pipeline, then move on to some workhorse DRAM and flash components. From there, we'll lay out practical playbooks for both engineering and procurement.

For a broad exploration of memory components, Octopart's category pages for memory ICs and flash memory are good starting points for searching across manufacturers, packages, and availability.

Key Takeaways

- Know what's coming vs. what's available. Next-wave parts like LPDDR6 and HBM4 signal where platforms are headed, but your 2026 designs will ship on DDR5, LPDDR5X, and mature NAND that's in stock today.

- Design for substitution and flexibility. Standardize on mainstream interfaces, qualify part families, and support multiple densities in firmware. Use sockets and modules where possible, and plan down-binned memory options that still meet UX targets.

- Approach supply risk like an engineering problem. Build multi-source AVLs, lock allocations for critical lines, and track lifecycle and alternates with tools like Octopart.

Next-Wave Components That Set the Direction

Samsung’s LPDDR6 Mobile DRAM

Designed for on-device AI, automotive, and next-gen mobile and PC platforms, Samsung’s LPDDR6 delivers meaningful efficiency gains over LPDDR5X, an expanded I/O architecture, and an initial speed of up to 10.7 Gbps. The LPDDR6 standard is designed to scale further as the ecosystem matures. You won’t see LPDDR6 on distributors’ shelves yet, but if you design around leading SoCs or flagship devices, you should expect to encounter it.

HBM4

At the top of the stack, SK hynix's 16-layer, 48 GB HBM4 devices promise more than 2 TB/s bandwidth, with mass production targeted around Q3 2026. Samsung is taking a different approach, using 4 nm logic and 1c DRAM to improve thermal performance. Engineers working on AI hardware won't typically source these from catalog distributors, but HBM4 matters to everyone because it's absorbing a large share of advanced DRAM capacity, which is one reason conventional DRAM remains tight.

Samsung 10th-Gen V-NAND

With over 400 layers and a 5.6 GT/s interface, Samsung’s 10th-generation V-NAND targets PCIe 5.0 and future PCIe 6.0 SSDs for data-center and AI-class workloads. Expect high-density TLC based on this silicon to underpin many enterprise and high-end client drives over the next several years.

Kioxia/Sandisk BiCS10 NAND

The 332-layer BiCS10 generation uses a Toggle DDR 6.0 interface that delivers 4.8 Gb/s per pin, and it targets AI and hyperscale storage. According to EE Times, Kioxia has said its entire 2026 NAND output is already sold into AI-related applications, and it pulled its BiCS10 ramp forward from 2H 2027 into 2026 to meet demand.

Less-Constrained Workhorse Memory Products

These parts were available to order from major distributors in early March 2026. Availability is shifting quickly, so verify stock and lifecycle status on Octopart before you lock a BOM.

- Apacer D22.31491S.001, 8 GB DDR5-4800 SO-DIMM. A practical “late-bind” DRAM option for designs that can use a socketed module, which gives procurement more leverage during substitutions.

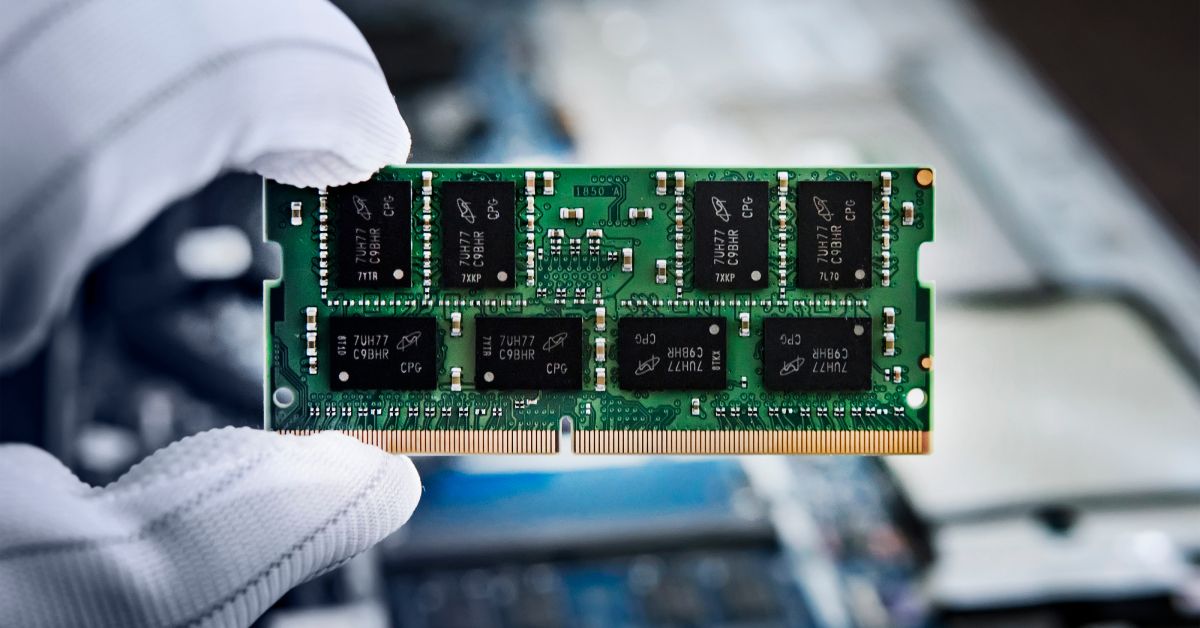

- Micron MT60B2G8RZ-56B IT:D, 16 Gbit DDR5 SDRAM (2G x 8), 78-ball VFBGA. A mainstream x8 DDR5-5600-class DRAM IC that fits custom board-level memory designs and supports more practical second-source flexibility than a one-off module SKU.

- Macronix MX30LF4G28AD-XKI-TR, 4 Gbit SLC NAND (VFBGA-63). A good fit for industrial and embedded NAND designs that need endurance and predictable behavior in a compact BGA footprint.

- Macronix MX60LF8G28AD-TI-T, 8 Gbit SLC NAND (TSOP-48). A practical choice when you need a widely supported parallel NAND footprint for mature controller ecosystems and easier board rework than fine-pitch BGAs.

- Macronix MX52LM04A11XSI, 4 GB eMMC 5.1 (BGA-153). A straightforward managed-NAND option when you want fewer controller dependencies and cleaner substitution than raw NAND plus a custom flash stack.

- Macronix MX52LM08A11XVW, 8 GB eMMC 5.1 (BGA-153). A practical capacity point for many embedded Linux and HMI-class systems, with the same interface and integration advantages as smaller eMMC parts.

- Micron MT40A2G8SA-062E:F, 16 Gbit DDR4 DRAM (2G x 8). Still a high-volume workhorse for many platforms and a pragmatic “ship-now” option when DDR5 is not required.

Design Playbook: How Engineers Build In Flexibility

Against this backdrop, there are still plenty of actions hardware engineers can take to make designs more resilient.

- Standardize on mainstream interfaces and families. DDR5, LPDDR5X, eMMC, UFS, and SPI/QSPI flash have deep ecosystems and many second sources. Staying within common voltages and packages maximizes the pool of compatible parts.

- Build flexibility into firmware and memory maps. Avoid hard-coding a single DRAM density or SPI-flash size. Support multiple geometries in your initialization code so alternates can be swapped in.

- Favor managed non-volatile memory when it fits. eMMC and UFS hide NAND management details behind stable interfaces and often have clearer substitution paths than raw NAND tied to a specific controller.

- Plan for down-binned variants. Design your software so that lower-memory configurations still deliver acceptable user experiences, perhaps by using lower default concurrency, smaller asset sets, or feature tiering.

- Use modular memory and storage where possible. Sockets for SO-DIMMs, UDIMMs, and M.2 SSDs allow late-binding of configuration and give procurement more leverage. Reserve soldered-down memory for constrained form factors where it's truly required.
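
As a rough illustration of the firmware-flexibility and down-binning points above, the sketch below picks runtime settings from the amount of DRAM detected at boot instead of assuming a single density. It is written in Python for readability; the tier names, thresholds, and settings are hypothetical placeholders, not values from any specific platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryProfile:
    """Runtime settings tied to a minimum amount of installed DRAM."""
    name: str
    min_dram_mib: int        # smallest DRAM size this profile supports
    worker_threads: int      # default concurrency for this tier
    asset_pack: str          # which asset set to load
    optional_features: bool  # whether memory-hungry features are enabled


# Hypothetical tiers, ordered from most to least demanding.
PROFILES = (
    MemoryProfile("full", 8192, 8, "high-res", True),
    MemoryProfile("standard", 4096, 4, "standard", True),
    MemoryProfile("reduced", 2048, 2, "compact", False),
)


def select_profile(detected_dram_mib: int) -> MemoryProfile:
    """Return the richest profile that fits the detected DRAM size."""
    for profile in PROFILES:
        if detected_dram_mib >= profile.min_dram_mib:
            return profile
    raise ValueError(
        f"{detected_dram_mib} MiB is below the smallest supported configuration"
    )
```

With these placeholder tiers, a board built with a 4 GB alternate resolves to the "standard" profile and still boots with lower concurrency, while anything under 2 GB is rejected explicitly rather than failing later at runtime.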

Sourcing Playbook: How Procurement Can Manage Risk

The situation demands your attention. In late February 2026, Lenovo warned channel partners to place orders before the end of the month to beat March price hikes, while TrendForce projected that blended PC DRAM (DDR4/DDR5) prices would rise 105–110% quarter-over-quarter in Q1 alone. The playbook below reflects this new reality.

- Lock in allocations and long-term agreements for critical DRAM and NAND lines, especially for servers, AI boxes, and high-end notebooks. Market intelligence from firms like TrendForce can guide when to commit.

- Build approved vendor lists around families, not individual SKUs. Define acceptable classes of modules, NAND, and eMMC, and work with engineering to validate several options up front.

- Segment products by memory sensitivity. Direct scarce, expensive memory to SKUs where it most affects performance and margin; apply more aggressive cost controls to less memory-sensitive devices.

- Use memory inventory as a strategic hedge for long-lifecycle products. Carrying a buffer of key DRAM or NAND can be cheaper than redesigning boards or rewriting firmware mid-life if a part becomes constrained.
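
One way to picture an AVL built around families rather than SKUs is as a set of rules that any candidate part can be checked against. The sketch below is a minimal Python illustration under assumed field names; the `FamilySpec` structure and the "ExampleVendor" part are hypothetical, and a real AVL would also capture voltage, temperature grade, and qualification status.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Part:
    mpn: str
    manufacturer: str
    interface: str   # e.g. "eMMC 5.1"
    density_gb: int
    package: str     # e.g. "BGA-153"


@dataclass(frozen=True)
class FamilySpec:
    """An AVL entry that describes a class of parts, not one SKU."""
    interface: str
    package: str
    allowed_densities_gb: frozenset

    def accepts(self, part: Part) -> bool:
        return (
            part.interface == self.interface
            and part.package == self.package
            and part.density_gb in self.allowed_densities_gb
        )


def qualified_alternates(spec: FamilySpec, candidates: Iterable[Part]) -> List[Part]:
    """Return every candidate that fits the family definition."""
    return [part for part in candidates if spec.accepts(part)]


def is_multi_sourced(spec: FamilySpec, candidates: Iterable[Part]) -> bool:
    """True when at least two manufacturers can supply the family."""
    makers = {part.manufacturer for part in qualified_alternates(spec, candidates)}
    return len(makers) >= 2


EMMC_FAMILY = FamilySpec("eMMC 5.1", "BGA-153", frozenset({4, 8}))

CANDIDATES = [
    Part("MX52LM04A11XSI", "Macronix", "eMMC 5.1", 4, "BGA-153"),
    Part("MX52LM08A11XVW", "Macronix", "eMMC 5.1", 8, "BGA-153"),
    # Hypothetical second-source and non-matching parts, for illustration only.
    Part("EXAMPLE-EMMC-8G", "ExampleVendor", "eMMC 5.1", 8, "BGA-153"),
    Part("EXAMPLE-UFS-64G", "ExampleVendor", "UFS 3.1", 64, "BGA-153"),
]
```

Here `qualified_alternates(EMMC_FAMILY, CANDIDATES)` keeps the three eMMC parts and drops the UFS part, and `is_multi_sourced` flags whether the family still depends on a single manufacturer.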

Flexibility Is the Strategy

The first part of this series covered why the memory crunch happened; this part covered what to do about it. The answer is the same whether you're an engineer or on the procurement side: flexibility is the best hedge. Design for substitution, qualify broadly, and use tools like Octopart to keep your options visible and up to date. The teams that come through this cycle in the best shape will be the ones that build optionality into their designs and supply chains early and keep adapting as supply and pricing evolve.

Frequently Asked Questions

Why are DRAM and NAND still hard to source in 2026?

The current shortage is driven by wafer allocation, not technology limits. Memory vendors are prioritizing high-margin AI demand, especially HBM and data-center DRAM, under multi‑year contracts. Because HBM consumes significantly more wafer capacity per bit than conventional DRAM, less capacity remains for DDR5, LPDDR, and NAND, keeping availability tight.

Should engineers design with next-generation memory like LPDDR6 or HBM4 today?

LPDDR6 and HBM4 signal where platforms are headed, but most 2026 products will ship on DDR5, LPDDR5X, and mature NAND that’s available now. Engineers should design with forward compatibility in mind while selecting parts that can be sourced reliably during production, rather than betting on parts that aren’t yet in distribution.

How can hardware designs be made more resilient to memory shortages?

Resilient designs focus on flexibility and substitution. This includes standardizing on mainstream interfaces, qualifying multiple densities and vendors, avoiding hard-coded memory assumptions in firmware, and using sockets or modules where possible. Supporting down‑binned memory options ensures products can still ship when higher-capacity parts are constrained.

What is the best way for procurement teams to manage memory supply risk?

Procurement should treat memory as a strategic resource, not a commodity. Best practices include locking long-term allocations for critical SKUs, building AVLs around families rather than single parts, monitoring lifecycle and alternates with tools like Octopart, and selectively holding inventory for long-lifecycle products to avoid forced redesigns.