How AI Broke the Memory Market: Inside the 2024–2026 DRAM & NAND Crunch

Key Takeaways

- AI data centers have become the primary customer for memory silicon, driving changes to wafer allocation across DRAM, HBM, and NAND simultaneously.

- This supply-demand cycle is different. Limited fab expansion, mostly sold-out NAND production, and multi-year HBM contracts mean the shortage will likely persist into late 2027–2028.

- Legacy and embedded designs are collateral damage. DDR3, early DDR4, and SLC NAND face rising EOL risk, longer lead times, and unpredictable pricing as vendors prioritize high-margin AI memory.

Memory’s Plot Twist: From Background Part To Bottleneck

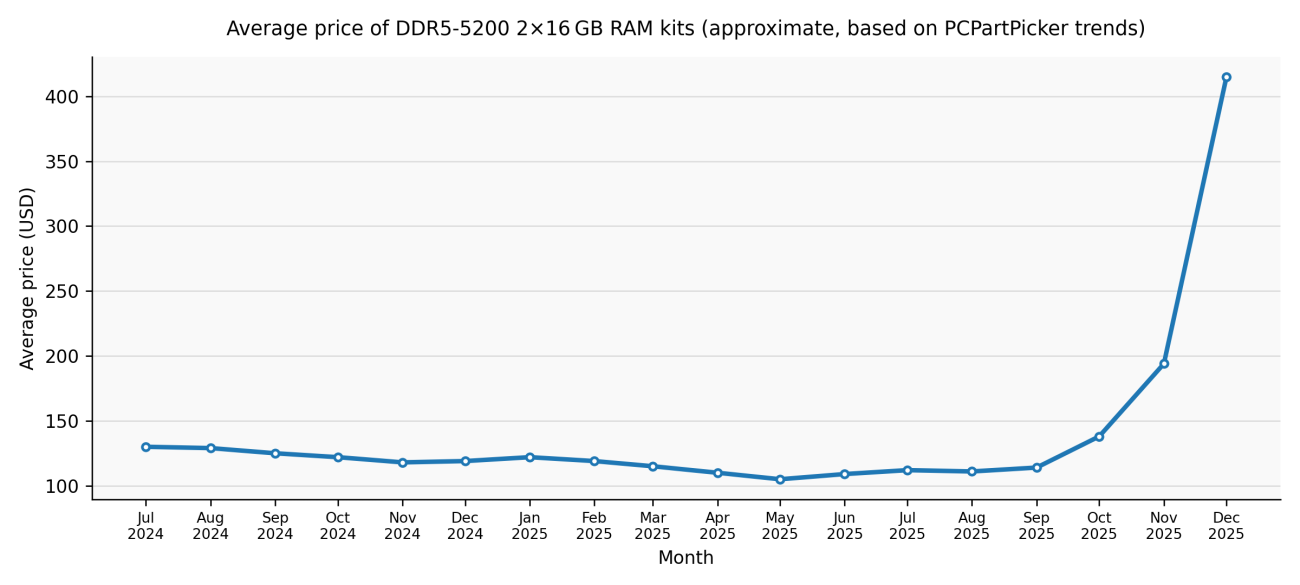

For most of the PC era, memory was in the background. Between 2024 and 2026, that dynamic flipped. Memory became the binding constraint on system design, and the cost of "just adding more RAM" climbed sharply in just a few quarters.

Prices have climbed, availability has tightened, and products increasingly ship with the bare minimum of memory rather than the comfortable headroom we’re used to. Underneath it all is a structural rebalancing of who gets the wafers and why.

So what actually changed, and why does this shortage feel different from the previous ones? We'll unpack that here, in the first of a two-part series covering the forces disrupting memory supply from cloud servers down to embedded systems. Part two, Designing Hardware in a Memory Shortage, builds on this with a deep dive into next-generation memory components just coming online, leading workhorse products you can order from your distributor today, design patterns, and sourcing tactics.

How AI Data Centers Rewire the Demand Landscape

In previous cycles, memory demand was broadly distributed across PCs, phones, servers, and consumer electronics. Supply and demand drifted out of sync, prices spiked or sagged, and then things normalized as fabs adjusted. The 2024–2026 shortage isn’t following this script.

The difference is who's buying. AI-centric data centers now dominate the demand picture, and their training clusters and inference farms need enormous amounts of high-bandwidth memory (HBM) and conventional DRAM per GPU or accelerator. HBM consumes significantly more wafer capacity per bit than standard DRAM, making it extremely attractive to manufacturers seeking to lock in multi-year, high-margin contracts with AI infrastructure providers.

Some analysts now estimate that data centers will consume up to 70% of all high-end memory chips produced in 2026, a sharp reversal from the era when consumer devices accounted for the majority of such chips. In this environment, PC and mobile memory become a side business, while AI data centers become the main event.

The HBM4 generation unveiled at CES 2026 illustrates the scale of this shift. SK Hynix showed a 16-layer, 48 GB device that delivers more than 2 TB/s, a significant step up from the early HBM3 used in first-wave gen-AI accelerators. Every wafer that goes into these stacks is a wafer that isn't making DDR5 for your next PC or LPDDR5X for a phone.
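The wafer trade-off described in this section can be sketched numerically. The example below is a minimal illustration, not a model of any real fab: the function name `bit_output`, the wafer count, the HBM share, and especially the `hbm_wafer_penalty` value of 2.0 are hypothetical placeholders, since the article only says HBM uses "significantly more" wafer capacity per bit.

```python
def bit_output(total_wafers: float, hbm_share: float,
               bits_per_std_wafer: float, hbm_wafer_penalty: float):
    """Split a fixed wafer budget between standard DRAM and HBM.

    hbm_wafer_penalty is a hypothetical ratio: how many times more wafer
    capacity one HBM bit needs compared with one standard DRAM bit.
    Returns (standard_dram_bits, hbm_bits) in the same arbitrary unit
    as bits_per_std_wafer.
    """
    if not 0.0 <= hbm_share <= 1.0:
        raise ValueError("hbm_share must be between 0 and 1")
    if hbm_wafer_penalty < 1.0:
        raise ValueError("hbm_wafer_penalty must be at least 1")
    hbm_wafers = total_wafers * hbm_share
    std_wafers = total_wafers - hbm_wafers
    std_bits = std_wafers * bits_per_std_wafer
    hbm_bits = hbm_wafers * bits_per_std_wafer / hbm_wafer_penalty
    return std_bits, hbm_bits


# Illustrative numbers only: 100 wafers, 1 unit of bits per standard wafer.
baseline = bit_output(100, 0.0, 1.0, 2.0)    # no HBM at all
shifted = bit_output(100, 0.25, 1.0, 2.0)    # a quarter of wafers moved to HBM
print(baseline, shifted)                     # (100.0, 0.0) (75.0, 12.5)
```

Under these assumed numbers, moving a quarter of the wafers to HBM cuts standard DRAM output by 25% and total bit output by 12.5%, which is the mechanism behind tighter commodity supply even when fabs are running flat out.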

Embedded and Legacy Designs: Squeezed from the Side

Embedded and industrial designs, which often rely on older DRAM standards or mature SLC NAND, are facing their own headwinds. Many of these products use DDR3 or early DDR4 devices, along with parallel NAND flash, that are no longer at the center of vendor roadmaps.

As manufacturers prioritize high-margin HBM and server-grade DRAM, they’re reducing or discontinuing legacy lines. What remains carries unexpectedly high prices and longer lead times, even though the technology itself is mature.

Keeping close tabs on part lifecycle status with tools like Octopart helps teams spot EOL announcements and tightening supply before they become emergencies.

Progress Under Pressure: DDR5, LPDDR6, NAND, and HBM4

The same technology transitions that are starving older designs of supply are producing genuine engineering breakthroughs. Both sides of this dynamic matter: the advances change what's available to design with, while the wafer economics behind them explain why commodity memory won’t be getting less expensive anytime soon.

DRAM

Samsung is mass-producing the industry's thinnest 12nm-class LPDDR5X DRAM for next-gen mobile devices, combining high performance with power efficiency and thin packaging suited to premium phones and ultra-portables. Early LPDDR6 parts push bandwidth and energy efficiency further still, targeting on-device AI and automotive applications. Samsung's LPDDR6 implementation has been picking up recognition at industry events, signaling where high-end mobile memory is headed.

HBM

At the HBM end of the spectrum, the CES 2026 coverage of HBM4 shows that memory stacks are becoming highly integrated subsystems. SK Hynix’s 16-high stacks use MR-MUF and ultra-thin DRAM wafers to stay within JEDEC height limits, while Samsung is looking toward its 4 nm logic (which began mass production in February 2026) to improve thermals and energy efficiency. All of that engineering effort and wafer capacity is squarely targeted at AI accelerators.

NAND

On the NAND side, vendors are stacking ever more layers. Samsung's 10th-generation V-NAND, with 400+ layers and interface speeds around 5.6 GT/s, is being designed into PCIe 5.0 and future PCIe 6.0 SSDs for data-center and AI use cases. Kioxia and Sandisk’s 10th-generation 332-layer BiCS NAND, using the Toggle DDR 6.0 interface at up to 4.8 Gb/s per pin, shows how far high-bandwidth NAND has progressed for enterprise-class SSDs.

The technology is advancing, but capacity isn't keeping pace. According to EE Times, Samsung and SK Hynix cut NAND wafer output in 2024–2025 as they chased HBM and DRAM, and have not announced new NAND capacity despite controlling more than half the market. Omdia’s data shows Samsung’s NAND wafers falling from 4.9 million (2024) to 4.68 million (2025), and SK Hynix’s from 1.9 million to 1.7 million.
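To put the Omdia figures in proportion, the short sketch below converts them into year-over-year percentage changes. The wafer counts are the ones quoted above; the helper names `pct_change` and `summarize` are illustrative, and the combined row simply adds the two vendors together.

```python
def pct_change(before: float, after: float) -> float:
    """Percentage change from before to after."""
    return (after - before) / before * 100.0


# NAND wafer output in millions, (2024, 2025), as quoted from Omdia above.
wafers_millions = {
    "Samsung": (4.9, 4.68),
    "SK Hynix": (1.9, 1.7),
}


def summarize(data: dict) -> dict:
    """Per-vendor and combined year-over-year change, rounded to 0.1%."""
    rows = {name: round(pct_change(b, a), 1) for name, (b, a) in data.items()}
    total_before = sum(b for b, _ in data.values())
    total_after = sum(a for _, a in data.values())
    rows["Combined"] = round(pct_change(total_before, total_after), 1)
    return rows


print(summarize(wafers_millions))
# {'Samsung': -4.5, 'SK Hynix': -10.5, 'Combined': -6.2}
```

A combined drop of roughly 6% from the two largest suppliers may look small, but it lands in a period when NAND demand from AI inference is rising rather than flat.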

At the same time, NAND has become critical to AI inference. As AI moves from training to serving, SSDs backed by high-layer NAND are increasingly the main store for model weights and working data. Kioxia’s management has said that its entire 2026 NAND production is already sold out, that BiCS10 is being pulled forward from 2H 2027 to 2026, and that, in the future, nearly half its NAND demand could come from AI applications. NAND specialists like Kioxia and the newly independent Sandisk business, once viewed as underdogs in a commoditized market, are suddenly positioned as winners of the AI-SSD boom.

Why the Usual Recovery Isn't Coming

Industry analysts point to relatively modest DRAM and NAND supply growth through 2026 compared to historical norms. Meanwhile, the demand isn’t letting up. New model architectures, inference workloads, and edge-AI deployments keep pushing memory requirements upward rather than letting them plateau. HBM4 suppliers are dedicating substantial wafer capacity to the requirements of Nvidia and other accelerator vendors, and as we mentioned in the previous section, NAND vendors like Kioxia are sold out for 2026.

In December 2025, Micron underscored the structural nature of the shift by announcing its exit from the Crucial consumer business to better support “larger, strategic customers.” Some suppliers, including Micron, have publicly stated that they do not expect the RAM shortage to ease materially for consumers until around 2028, when new capacity and process transitions are scheduled to fully ramp up. The same logic increasingly applies to NAND: AI inference is locking up future SSD-class supply as fast as vendors can bring it online.

In December 2025, IDC characterized the shortage as “not just a cyclical shortage but a potentially permanent, strategic reallocation of the world’s silicon wafer capacity.” In February 2026, TrendForce revised its Q1 2026 conventional DRAM contract price forecast sharply upward, from a prior estimate of 55–60% to 90–95% quarter-over-quarter. Within that, PC DRAM (blended DDR4/DDR5) was projected to rise 105–110% QoQ, a new quarterly record.
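To make those percentages concrete, the sketch below applies the quoted quarter-over-quarter ranges to a single contract price. The $40 starting price is a hypothetical placeholder chosen for round numbers, not a market figure, and the function names are illustrative.

```python
def price_after_rise(base_price: float, rise_pct: float) -> float:
    """Price after a single quarter-over-quarter rise of rise_pct percent."""
    return base_price * (1 + rise_pct / 100)


def price_range(base_price: float, low_pct: float, high_pct: float) -> tuple:
    """Low and high end of next-quarter price for a forecast range."""
    return (round(price_after_rise(base_price, low_pct), 2),
            round(price_after_rise(base_price, high_pct), 2))


base = 40.0  # hypothetical memory cost per unit this quarter, in dollars

print(price_range(base, 55, 60))    # prior DRAM forecast   -> (62.0, 64.0)
print(price_range(base, 90, 95))    # revised DRAM forecast -> (76.0, 78.0)
print(price_range(base, 105, 110))  # PC DRAM projection    -> (82.0, 84.0)
```

A rise of more than 100% means the price more than doubles in one quarter, so a memory line item that was a minor part of a bill of materials can become one of its largest entries within a single procurement cycle.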

What’s Next: From Understanding To Action

In Designing Hardware in a Memory Shortage, we look at seven next-wave memory components ramping into OEM and data-center designs, eight workhorse DRAM and flash products readily available from major distributors, and concrete playbooks for working within these constraints.

Frequently Asked Questions

Why is there a memory shortage even though DRAM and NAND technology keeps improving?

The current shortage is not driven by technology limits but by wafer allocation economics. A growing share of global memory wafer capacity is being redirected toward high-margin AI memory, especially HBM for data-center accelerators. Because HBM consumes significantly more wafer area per usable bit than conventional DRAM, every wafer committed to HBM production reduces output of DDR4, DDR5, and LPDDR, and suppliers chasing HBM and DRAM have cut NAND wafer output as well. With limited new fab expansion and long-term AI supply contracts locking in capacity, improvements in memory density do not translate into increased availability for mainstream or embedded markets.

Why does this memory shortage feel different from past cycles?

Unlike previous boom-bust cycles, this shortage is shaped by structural demand concentration rather than temporary overconsumption. AI training and inference workloads continue to scale memory requirements upward, while suppliers have deliberately constrained capacity growth. Multi-year HBM contracts, sold-out NAND production for 2026, and explicit supplier guidance pointing to relief only after 2027–2028 mean this is a long-duration reallocation, not a short-term imbalance that will self-correct through pricing alone.

What risks does this create for embedded and legacy designs using DDR3, early DDR4, or SLC NAND?

Legacy memory products are increasingly treated as non-strategic by major suppliers. As vendors prioritize advanced DRAM and HBM, older process nodes face shrinking production runs, rising minimum order quantities, longer lead times, and higher EOL risk. Even when parts remain technically “in production,” pricing becomes volatile and availability unpredictable. For embedded teams, this raises the importance of lifecycle monitoring, multi-sourcing, and redesign planning much earlier in the product lifecycle than in past generations.

When should engineers expect memory pricing and availability to normalize?

Based on supplier statements and analyst forecasts, meaningful relief is unlikely before late 2027 or 2028. New capacity additions, process transitions, and expanded packaging lines for HBM and advanced NAND are planned, but they take multiple years to come online. At the same time, AI inference workloads are expanding demand for both DRAM and SSD-class NAND, absorbing much of that future capacity. Engineers should plan designs under the assumption that memory will remain a constrained, high-impact system cost for the remainder of this decade.