Autorouting, or No Autorouting? A History of Failed Design Automation

Welcome to the world of electronics. It’s 2016, and we’re now seeing more technological sophistication than in any other period in human history. This year alone, autonomous vehicles have begun their introduction into the public sphere, rockets are being re-landed from space for reuse with finely tuned precision, and Moore’s law continues to prevail in its endless growth trajectory. But there’s just one thing missing in all of this technological advancement: a decent PCB autorouter comparison.

The Real Problem with Autorouters

Although PCB autorouters have been around for as long as engineers have known what CAD stands for, designers creating dense PCB layouts have almost entirely ignored this automation technology, and rightly so. Autorouting algorithms haven’t changed much since they were first introduced.

When you couple stagnating technology with EDA vendors whose autorouters vary widely in performance and configuration setup, it’s no wonder that autorouters haven’t caught on. This technology that was meant to save engineering time and enhance workflows simply hasn’t stepped up its game to match the expertise and efficiency of a veteran PCB designer. Is this really all that autorouters have to offer?

The Early Beginnings of Autorouting Technology

The first autorouters produced by EDA vendors were characterized by poor results and performance. They largely offered no guidelines or configurations to preserve signal integrity, often adding an excessive number of vias in the process. To add to the troubles of this early technology, autorouters were also limited to a strict X/Y grid and were layer biased.

As a result of these limitations, board space was commonly wasted, and engineers were left to clean up the mess of an unbalanced PCB layout. Fixing a poorly optimized layout from an autorouter often took an engineer longer than routing the board manually would have. Out of the gate, autorouting was not off to a good start.
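To make the cost of a strict grid plus layer bias concrete, here is a minimal Python sketch. It is not a reconstruction of any vendor's router: it assumes a two-layer board where layer 0 only carries horizontal moves and layer 1 only vertical moves (a common reading of "layer biased"), and it uses a plain breadth-first search. The names `route_layer_biased` and `count_vias` are made up for this illustration.

```python
from collections import deque

def route_layer_biased(width, height, start, goal, blocked=frozenset()):
    """Breadth-first search over (x, y, layer) states on a strict X/Y grid.

    Assumption for illustration: layer 0 allows only horizontal moves,
    layer 1 only vertical moves, and a via switches layers in place.
    Returns the list of visited states, or None if no route exists.
    """
    first = (start[0], start[1], 0)  # assumption: every net starts on layer 0
    parent = {first: None}
    queue = deque([first])
    while queue:
        state = queue.popleft()
        x, y, layer = state
        if (x, y) == goal:
            path = []
            while state is not None:
                path.append(state)
                state = parent[state]
            return path[::-1]
        if layer == 0:
            moves = [(x - 1, y, 0), (x + 1, y, 0)]   # horizontal only
        else:
            moves = [(x, y - 1, 1), (x, y + 1, 1)]   # vertical only
        moves.append((x, y, 1 - layer))              # drop a via
        for nxt in moves:
            nx, ny, _ = nxt
            inside = 0 <= nx < width and 0 <= ny < height
            if inside and (nx, ny) not in blocked and nxt not in parent:
                parent[nxt] = state
                queue.append(nxt)
    return None

def count_vias(path):
    """A via is any step where the layer changes."""
    return sum(1 for a, b in zip(path, path[1:]) if a[2] != b[2])

# An open 4x3 board needs one via for a single bend ...
print(count_vias(route_layer_biased(4, 3, (0, 0), (3, 2))))                    # 1
# ... and a single blocked cell already pushes that to two.
print(count_vias(route_layer_biased(4, 3, (0, 0), (3, 2), blocked={(2, 0)})))  # 2
```

A designer routing by hand could make the same connection on one layer with no vias at all, which is the kind of gap the paragraph above describes.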

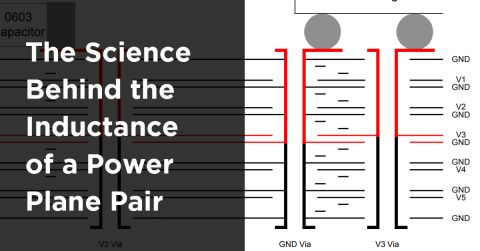

Gridless Autorouting example[1]

Autorouting Advancements of the 80s

As the years progressed, autorouting technology improved only marginally, with quality not keeping pace with a PCB designer’s expectations. There were still the issues of mismanaged board layout space, layer bias, and excessive vias. To help this ailing technology advance, EDA vendors started to adopt new ground plane components and board technologies to ease the fulfillment of signal integrity requirements.

If there’s one way to characterize this era of autorouting development, it would be hindrance by hardware limitations. Autorouter algorithms simply couldn’t reduce grid sizes for better routing quality without resorting to dedicated CPUs and additional memory to support all the required data. Without a hardware-based solution in place, EDA vendors began to explore other avenues, including shape-based autorouting.

These new shape-based autorouters did help to fulfill board fabrication and signal integrity requirements by:

- Creating efficient interconnections between components
- Lowering PCB costs with fewer vias added during the autorouting process
- Increasing spacing while using fewer layers on a PCB

Despite these advances, autorouting technology remained mediocre at best. Even though EDA vendors had overcome the hardware limitations, PCB designers remained skeptical about adopting it.

Maze Autorouting example[2]

The Lackluster Progression of the 90s

Before the new millennium arrived, autorouters continued to improve with new capabilities including optimized angles, push and shove routing modes, fewer vias, and even glossing to remove extra wire segments. There were even some efforts to create autorouting technology without any layer bias.
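As a rough illustration of the glossing idea mentioned above, the Python sketch below merges back-to-back trace segments that run in the same direction, so a route stored as many tiny grid steps collapses to its real bends. This is only one small cleanup a glossing pass might perform, not a description of any particular tool, and the function name `gloss` is hypothetical.

```python
def gloss(path):
    """Merge consecutive segments that point in the same direction.

    `path` is a list of (x, y) vertices. Vertices that sit in the middle
    of a straight run are dropped; real bends are kept.
    """
    cleaned = []
    for point in path:
        if cleaned and point == cleaned[-1]:
            continue  # skip zero-length segments
        if len(cleaned) >= 2:
            ax, ay = cleaned[-2]
            bx, by = cleaned[-1]
            d1 = (bx - ax, by - ay)              # direction into the last vertex
            d2 = (point[0] - bx, point[1] - by)  # direction out of it
            cross = d1[0] * d2[1] - d1[1] * d2[0]
            dot = d1[0] * d2[0] + d1[1] * d2[1]
            if cross == 0 and dot > 0:
                cleaned.pop()  # same heading: the middle vertex adds nothing
        cleaned.append(point)
    return cleaned

# Six grid vertices (five segments) reduce to four vertices (three segments).
raw = [(0, 0), (1, 0), (2, 0), (2, 1), (2, 2), (3, 2)]
print(gloss(raw))  # [(0, 0), (2, 0), (2, 2), (3, 2)]
```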

While all of these new advancements sounded promising, did they make their needed impact on the PCB design community? Unfortunately not. The more that EDA vendors tried to force autorouting technologies onto unwilling PCB designers, the more side effects this produced, including:

- Increased production of boards with incomplete and poorly optimized routes.
- Increased autorouting setup complexities that required expert configurations.
- Increased time spent by PCB designers to fix poor autorouting paths.

The 90s revealed an ongoing trend: when it came to completing real designs, manual routing remained king.

Shape-Based Autorouting

Will the 2000s Bring a New Hope?

The new millennium arrived and brought with it a plethora of new components and circuit board technologies that caused a shift in how PCBs were manually routed. In most designs, vias now had to be reduced to conserve signal integrity, signals started needing delay and timing management, differential pairs started to become the norm for high-speed applications, and the BGA became the preference of many for large pin count packages. This shift in design consciousness gave birth to the age of River-Routing.

The River-Routing method was surprisingly effective: it significantly reduced the number of vias on a circuit board, utilized layers evenly, and had no routing layer bias. Despite these advances, adoption was at an all-time low, but why? This time it wasn’t the technology, it was the PCB designer’s mindset. Because PCB designers are constantly routing the board in their minds as they place components, this has a direct influence on how and where components are placed, which then affects routing implementation. Trying to interrupt this workflow midstream with a River-Routing methodology was a no-go for many engineers.

As an alternative to River-Routing, a new Route-Planning trend arose. This method gave designers a complete toolset to configure autorouting settings, including layer stack definitions, design rule constraints, signal shielding and more. And while all these settings were sorely needed to justify the use of autorouting by a PCB designer, configuring them still took longer than routing the board manually.

Different Methodologies for the Same Goals

Despite all the advances in autorouting technology throughout the past three decades, this technology still remains underused by most engineers. Is the technology itself really the issue, or is it a problem of clashing expectations between PCB designers and autorouters?

Typically, PCB engineers take component placement and routing hand-in-hand, often visualizing board layouts at 10,000 feet to identify logical component placement and interconnect points. On the other hand, autorouters tackle this same routing challenge from the bottom up, one interconnect at a time.
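A toy example helps show why routing one interconnect at a time can clash with a designer's top-down view. The Python sketch below is an assumption-laden illustration, not any vendor's algorithm: it routes two-pin nets on a single-layer grid with a breadth-first search, and every finished trace becomes an obstacle for the nets that follow. The function names, net names, and board size are all invented for the example.

```python
from collections import deque

def shortest_path(width, height, start, goal, blocked):
    """Breadth-first search for a shortest 4-connected path on a grid."""
    parent = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            inside = 0 <= nxt[0] < width and 0 <= nxt[1] < height
            if inside and nxt not in blocked and nxt not in parent:
                parent[nxt] = cell
                queue.append(nxt)
    return None

def route_in_order(width, height, nets, order):
    """Route nets one at a time; finished traces block later nets."""
    # Every pin is off limits to other nets from the start.
    occupied = {pin for pins in nets.values() for pin in pins}
    routed = {}
    for name in order:
        start, goal = nets[name]
        path = shortest_path(width, height, start, goal, occupied - {start, goal})
        routed[name] = path
        if path is not None:
            occupied.update(path)
    return routed

# Hypothetical 4x3 board with two nets whose shortest paths share cell (1, 1).
nets = {"A": ((0, 1), (2, 1)), "B": ((1, 0), (1, 2))}
for order in (["A", "B"], ["B", "A"]):
    result = route_in_order(4, 3, nets, order)
    print(order, {name: path is not None for name, path in result.items()})
# ['A', 'B'] {'A': True, 'B': True}
# ['B', 'A'] {'B': True, 'A': False}
```

Routing A first leaves B a detour around the right side of the board. Routing B first walls A in completely, even though a valid solution for both nets exists. A designer looking at the whole board sees that immediately; a router working net by net only finds out after the fact.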

For denser board layouts, engineers usually sketch the bus system and subsystem on paper, which they then use as a guide for their manual routing process. And while engineers are placing components, they are often at the same time considering several other variables, including delivery dates, design complexity, product costs, and more.

There is of course the dreaded Engineering Change Order (ECO), which can trigger a nightmare-like chain reaction, especially when it affects a complex design area, like a BGA. When it comes to these kinds of tasks, an autorouter can be an effective tool only if it can optimize trace escapes or fanouts without adding vias. And while a good designer can ease the pain of this process with optimized pin assignments, the challenge remains the same, autorouter or not.

What the EDA Industry Really Needs

Here we are, three decades later, and we’re still waiting for an interactive one-click router that instantly translates a desired routing topology into reality. What does the autorouting technology of the future need to include to be taken seriously?

- Agility. This technology needs to be flexible enough to give PCB designers complete control over routing direction, location and selection, regardless of design complexity.
- Efficiency. This technology needs to be far more efficient than manually routing a board to justify the time spent using it.
- Ease. This technology needs to be easy to configure, allowing PCB designers to edit paths as needed.
- Quality. This technology needs to preserve signal integrity while also routing and distributing on multiple layers with no layer bias.
- Reliability. This technology needs to consistently produce reliable results that can then be manufactured right the first time.
- Integration. This technology needs to be integrated with our existing design solutions and connected with our design constraints.
- Affordability. This technology needs to be affordable and accessible to every PCB designer if it’s ever going to gain widespread use.

Before

After (Actively Fast)

Printed circuit board designers all over the world are waiting to take autorouting seriously, but the past three decades haven’t left us with much confidence in this technology. Does the future hold the same results? We have something to show you...check out what is coming up in Altium Designer®.

References:

[2] Lee W. Ritchey, “PCB Routers and Routing Methods,” Speeding Edge, December 1999. For publication in the February issue of PC Design Magazine.
