The Future of BOM Management: Trends and Innovations

A traditional static BOM only works if supply is stable, prices drift slowly, and lifecycle events are predictable, but these conditions no longer define the electronics landscape.

In early 2026, major DRAM manufacturers redirected wafer capacity toward HBM and DDR5 to meet AI demand, tightening legacy supply. According to TrendForce and Sourceability analysis, contract prices for DDR4 and other conventional DRAM rose by tens of percent in 1Q26, with some legacy segments seeing increases of up to 50%, while lead times stretched past 20 to 30 weeks.

In such volatility, a static BOM becomes a liability, concealing financial exposure and operational risk rather than controlling it.

Capacity reallocation dynamics now drive:

- Rapid availability shifts

- Nonlinear price spikes

- Shorter reaction windows

Failing to track lifecycle changes, particularly for legacy memory like DDR4 nearing EOL, significantly raises the risk of production delays. The supply squeeze is amplified by a “capacity loss” effect: wafer starts allocated to HBM consume disproportionate manufacturing resources (with high-stack configurations requiring up to 3x the wafer area of standard DRAM), reducing standard DRAM output even when fab utilization remains high. Competitive advantage has therefore shifted from design optimization to BOM responsiveness.

As a result, the BOM is evolving into a live, high-frequency decision framework. BOM management is no longer periodic validation, but continuous data-driven control. The most resilient organizations treat their BOM as a real-time sensor for the global supply chain. In this environment, the golden window for securing stock has shrunk from weeks to mere hours as automated procurement bots now strip global spot-market inventory the moment a lifecycle alert triggers.

Key Takeaways

- Static BOMs are a liability in today's volatile supply chain. Dynamic BOM management is now a competitive requirement.

- AI-driven BOM tools are only as effective as the data feeding them. Clean, structured data is the prerequisite.

- Integrated PLM-ERP-MES environments eliminate the latency gap that causes ghost stock and missed sourcing windows.

- Deterministic automation delivers reliable ROI today, while building the data foundation AI will need to scale.

- Companies that standardize BOM data now will be best positioned to survive the next supply disruption.

Why BOM Management Must Evolve

The traditional, manual approach to BOM management is breaking under the pressure of modern manufacturing cycles.

The "Ghost Data" Problem

Manual workflows introduce errors that lead to "ghost stock": parts that appear available on paper but are physically missing. In a 2026 landscape where key components increasingly move to allocation-only ordering and distributors prioritize contracted customers, ghost stock can be catastrophic.

The Transformation Mandate

Manufacturing surveys show rapid digital platform adoption to manage shorter innovation cycles and accelerate Engineering Change Orders (ECOs). Time-to-market compression has become a defining performance metric. With consumer electronics and automotive tech cycles now measured in months rather than years, the time lost manually updating a spreadsheet to reflect a single capacitor change can cost a company its first-mover advantage.

The Foundation First

Success in 2026 requires moving BOMs into connected environments to eliminate data latency before layering on advanced automation. Before an organization can claim AI-readiness, it must first solve the latency gap between engineering and the warehouse.

BOMs as the Source of Truth

To achieve resilience, the BOM must become the definitive source of truth across the entire organization, linking design, sourcing, and production.

System Integration

Replacing spreadsheets with integrated PLM-ERP-MES environments synchronizes EBOM and MBOM views while eliminating manual re-entry. Engineers and procurement teams operate from a shared dataset spanning pricing, availability, and lifecycle status, preventing costly disconnects where components approved in design prove unavailable, restricted, or economically unviable at sourcing.
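To make the EBOM/MBOM synchronization point concrete, here is a minimal sketch of a consistency check between the two views. It assumes each view can be reduced to a simple mapping of part number to quantity; the function name `bom_diff` and all part numbers are hypothetical and not taken from any PLM, ERP, or MES product.

```python
def bom_diff(ebom, mbom):
    """Compare two {mpn: quantity} mappings and report where they disagree."""
    shared = ebom.keys() & mbom.keys()
    return {
        # Designed-in parts that manufacturing does not know about.
        "missing_in_mbom": sorted(ebom.keys() - mbom.keys()),
        # Items present only on the manufacturing side.
        "extra_in_mbom": sorted(mbom.keys() - ebom.keys()),
        # Parts present in both views but with different quantities.
        "qty_mismatch": sorted(m for m in shared if ebom[m] != mbom[m]),
    }

# Hypothetical data for illustration only.
ebom = {"CAP-0001": 4, "RES-0002": 10, "MCU-0003": 1}
mbom = {"CAP-0001": 4, "RES-0002": 8, "LBL-0100": 1}
report = bom_diff(ebom, mbom)
```

An MBOM legitimately carries items the EBOM does not (labels, packaging, consumables), so entries under `extra_in_mbom` are prompts for review rather than errors. In an integrated environment a check of this kind would run on every change instead of during a periodic audit.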

Speed & Inventory ROI

Integrated backbones shorten time-to-market and reduce stockouts by aligning up-to-date demand with engineering data. By connecting to current inventory feeds, companies are moving toward continuous planning, where the BOM acts as a sensor for the supply chain, triggering alerts the moment a component’s 12-month trend line indicates a projected shortage.
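The trend-line alert described above can be sketched in a few lines. This is an illustration under simple assumptions, not a description of how any particular tool works: it fits a least-squares line to 12 monthly stock figures and reports how many months ahead the extrapolated line falls below a safety level. The function names, the safety level, and the sample history are hypothetical.

```python
def fit_line(values):
    """Ordinary least-squares fit of values against their index 0..n-1."""
    n = len(values)
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def months_until_shortage(stock_history, safety_stock, horizon=6):
    """Months ahead at which the fitted trend drops below safety_stock,
    or None if it stays at or above that level within the horizon."""
    slope, intercept = fit_line(stock_history)
    last_month = len(stock_history) - 1
    for ahead in range(1, horizon + 1):
        projected = slope * (last_month + ahead) + intercept
        if projected < safety_stock:
            return ahead
    return None

# Hypothetical 12-month history: available stock falling 500 units a month.
history = [10000 - 500 * month for month in range(12)]
alert = months_until_shortage(history, safety_stock=3200)  # 3
```

A straight-line fit is the simplest possible model. Real availability data is noisy and can move in steps, so a production system would likely need something more robust, but the alerting pattern (project forward, compare against a threshold, raise a flag) stays the same.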

Embedded Compliance

Connected BOMs provide component-level RoHS, REACH, and ESG traceability, turning compliance into a proactive design constraint rather than a downstream check. With high-risk AI obligations under the EU AI Act phasing in through 2026-2027 and ESG reporting standards tightening, modern BOMs for EU-bound products increasingly incorporate Digital Product Passport-style attributes. These track the carbon footprint and labor practices behind every line item, so compliance can be confirmed before the first prototype is built.

AI BOM Trends vs. Practical Reality

How AI and Machine Learning Are Transforming BOM Workflows

- Predictive Risk & Shortage Scoring: AI models analyze supplier risk indicators and market trends to predict lead-time extensions and shortage risks 90–180 days in advance. In 2026, these models increasingly factor in non-traditional data like port congestion and geopolitical tariff announcements.

- Intelligent Alternate Suggestions: ML-driven engines move beyond simple parametric matching to suggest form-fit-function alternates. In 2026, these engines can evaluate firmware-compatible alternates, suggesting an MCU change that doesn't require a complete software rewrite.

- Anomaly Detection: Machine learning identifies BOM drift, spotting wrong voltage values, package mismatches, or sudden price spikes. This serves as an automated sanity check that catches human error, such as a decimal point error in a voltage rating, before it hits the PCB assembly line.

- Semantic Part Search: Natural language processing allows engineers to search libraries conceptually (e.g., "ultra-low power BLE module for industrial IoT"). This removes the keyword silo where an engineer might miss a superior part simply because it was categorized under a different naming convention.
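The anomaly-detection idea in the list above can be illustrated without any machine learning at all. The sketch below is a deliberately simple rule-based stand-in, not an ML model: it flags a price that jumps well above the historical median, and a rating that is roughly 10x or 0.1x a reference value, which is the usual signature of a misplaced decimal point. The function names and thresholds are hypothetical.

```python
from statistics import median

def price_spike(history, latest, ratio=1.5):
    """True if the latest price exceeds the historical median by `ratio`."""
    return latest > ratio * median(history)

def looks_like_decimal_slip(entered, reference, tolerance=0.05):
    """True if `entered` is about 10x or 0.1x the reference value."""
    if entered <= 0 or reference <= 0:
        return False
    factor = entered / reference
    # Allow a small relative tolerance around each suspicious factor.
    return any(abs(factor - k) <= tolerance * k for k in (0.1, 10.0))

# Hypothetical unit prices (USD) and voltage ratings (V).
spike = price_spike([2.0, 2.1, 1.9, 2.0], latest=3.4)      # True
slip = looks_like_decimal_slip(entered=33.0, reference=3.3)  # True
```

A learned model would replace the fixed thresholds with ones derived from the data, but the output is the same kind of signal: a line item held for review before it reaches assembly.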

The AI Readiness Gap: Why Clean Data Comes First

Hallucination risk continues to challenge early AI deployments in electronics: models can suggest non-existent part numbers or incompatible alternates because of incomplete training data. In a production environment, even a high-accuracy model becomes problematic if isolated errors introduce supply, qualification, or reliability failures.

The market momentum is already visible. The global AI-powered supply chain market, already a $10 billion+ sector in 2026, is accelerating toward an expected $50.41 billion valuation, driven by the very task-specific agents that are reshaping BOM workflows. While analysts forecast rapid growth in these agents, their effectiveness is fundamentally constrained by data integrity: the quality, structure, and completeness of their inputs.

In this year of structured readiness, manufacturers are prioritizing data normalization, silo unification, and BOM standardization. The limiting factor is no longer model capability, but data quality. Fragmented, spreadsheet-based BOMs cannot reliably support AI-driven decision-making, and poorly structured inputs risk turning intelligent automation into a source of operational uncertainty.

Deterministic Automation: Reliable Value Today

Before AI can "think," BOM tools must "verify." Deterministic checks – enforcing AVLs, catching duplicate parts, validating units – deliver immediate, measurable ROI without the uncertainty inherent in probabilistic models. In 2026, deterministic automation functions as the referee: every AI‑generated suggestion is evaluated against hard engineering rules before it can be approved.
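The deterministic checks named above (AVL enforcement, duplicate detection, unit validation) are straightforward to express as hard rules. The following is a minimal sketch under assumed data shapes: each BOM line is a dictionary with `mpn`, `qty`, and `unit` keys, and the approved vendor list is a plain set. All names and part numbers are hypothetical.

```python
from collections import Counter

AVL = {"CAP-0001", "RES-0002", "MCU-0003"}  # hypothetical approved MPNs
VALID_UNITS = {"ea", "m", "g"}              # hypothetical unit vocabulary

def validate_bom(lines):
    """Return a list of (line_index, message) rule violations."""
    issues = []
    counts = Counter(line["mpn"] for line in lines)
    for i, line in enumerate(lines):
        if line["mpn"] not in AVL:
            issues.append((i, "MPN not on approved vendor list"))
        if counts[line["mpn"]] > 1:
            issues.append((i, "duplicate MPN"))
        if line["unit"] not in VALID_UNITS:
            issues.append((i, "unknown unit"))
        if line["qty"] <= 0:
            issues.append((i, "quantity must be positive"))
    return issues

def accept_suggestion(suggested_mpn):
    """Referee step: an AI-suggested alternate passes only if it is on the AVL."""
    return suggested_mpn in AVL

# Hypothetical BOM with one duplicate pair and one badly formed line.
bom = [
    {"mpn": "CAP-0001", "qty": 10, "unit": "ea"},
    {"mpn": "CAP-0001", "qty": 5, "unit": "ea"},
    {"mpn": "XYZ-9999", "qty": 0, "unit": "pcs"},
]
issues = validate_bom(bom)
```

Each rule either passes or fails, so the same input always yields the same result. That repeatability is what lets these checks act as a gate in front of probabilistic suggestions.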

By anchoring workflows in deterministic accuracy, Octopart provides the high‑fidelity data layer: clean part metadata, authoritative manufacturer records, and centralized historical context that AI systems will ultimately depend on to scale effectively. Octopart establishes the ground truth modern supply chains require, ensuring every match is backed by verified data rather than statistical inference.

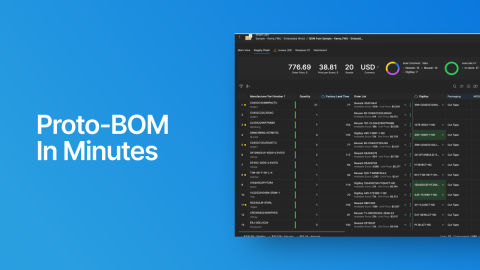

Next-Generation BOM Tool Capabilities

The modern BOM tool is no longer just a viewer but an active diagnostic engine.

- Normalization: Automated import from EDA/PLM systems ensures manufacturer part numbers (MPNs) are standardized, eliminating the dashing problem, where "Part-123" and "Part 123" are treated as two different items.

- Up-to-Date Intelligence: Direct hooks into the latest availability and lifecycle status surface NRND/EOL risks months before they hit the production line. For example, in Octopart you can view a color-coded risk assessment for every line item in your BOM based on global stock levels and manufacturer roadmaps.

- Deterministic Matching: Where generative AI can hallucinate or mis‑match parts, deterministic matching engines like Octopart run against a curated, verified component database. This produces reliable multi‑distributor pricing, validated alternates, and accurate manufacturer linkages. It provides the “practical AI” that works today, automating the tedious matching and price-comparison tasks without the risk of AI-generated errors.
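The normalization step in the list above can be sketched as a matching key that ignores case and separators, so that "Part-123" and "Part 123" resolve to the same item. This is an illustrative assumption about how such a key might be built, not a description of Octopart's matching logic; the function names are hypothetical.

```python
import re
from collections import defaultdict

def normalize_mpn(raw):
    """Uppercase and drop whitespace, hyphens, underscores, dots, and slashes."""
    return re.sub(r"[\s\-_./]", "", raw).upper()

def group_variants(mpns):
    """Group raw MPN strings that share a normalized key.
    Only keys with more than one spelling are returned."""
    groups = defaultdict(list)
    for raw in mpns:
        groups[normalize_mpn(raw)].append(raw)
    return {key: raws for key, raws in groups.items() if len(raws) > 1}

variants = group_variants(["Part-123", "Part 123", "ABC-9"])
# {"PART123": ["Part-123", "Part 123"]}
```

Separators are sometimes significant in real manufacturer part numbers, so a key like this is best used to surface candidate duplicates for confirmation against an authoritative record, not to overwrite the stored MPN.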

Recommendations for a 2026 BOM Strategy

First, standardize. Audit for spreadsheet silos and prioritize moving to a connected environment. Data hygiene is your best defense against 2026 volatility.

Second, leverage the latest data. Implement BOM-level compliance and lifecycle thresholds. Use alerts to manage 2026 regional tariff shifts, such as the 25% Section 232 duties on advanced AI semiconductors and derivatives effective January 15, 2026, that can materially change the landed cost of a BOM overnight. For global electronics manufacturers, the difference between a profitable run and a loss-making one now hinges on the ability to re-simulate BOM costs against new trade proclamations in minutes, not months.
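Re-simulating BOM cost against a new duty rate is, at its core, simple arithmetic once each line carries a tariff category. The sketch below assumes a flat ad valorem rate per category and uses the 25% figure cited above; the category labels, part numbers, and prices are hypothetical. Actual duty depends on tariff classification, country of origin, and exemptions, none of which are modeled here.

```python
def landed_cost(bom, duty_rates):
    """Sum extended cost per line, adding ad valorem duty by category.
    Categories missing from duty_rates are treated as duty-free."""
    total = 0.0
    for line in bom:
        rate = duty_rates.get(line["category"], 0.0)
        total += line["qty"] * line["unit_price"] * (1.0 + rate)
    return total

# Hypothetical two-line BOM.
bom = [
    {"mpn": "ACC-0001", "category": "ai_semiconductor", "qty": 2, "unit_price": 400.0},
    {"mpn": "RES-0002", "category": "passive", "qty": 100, "unit_price": 0.01},
]
before = landed_cost(bom, {})                          # no duties applied
after = landed_cost(bom, {"ai_semiconductor": 0.25})   # 25% on one category
delta = after - before
```

Here the duty adds 200.00 to a BOM that cost 801.00 before it. Keeping the rate table separate from the BOM means a new proclamation becomes a one-line data change followed by a re-run.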

Finally, pilot for ROI. Use the Octopart BOM Tool on an active NPI. Compare the manual sourcing time of your last project against an automated Octopart-driven workflow to prove the business case for a full digital rollout.

Clean Data Is the New Gold

The future of BOM management isn't a magic AI button, but a connected pipeline. Octopart provides the practical resilience needed to navigate 2026’s volatility while building the verified data foundation required for tomorrow’s autonomous AI workflows. In the automated age, the companies with the cleanest data and the fastest tools to act on it will be the ones that survive the next memory crunch.

Ready to automate data normalization, lifecycle tracking, and sourcing analysis? Try the Octopart BOM Tool today.