DDR5 Speeds—What’s New On The Memory Horizon

Earlier this year, I wrote an article on the various iterations of double data rate (DDR) memory and, specifically, the differences between DDR3 and DDR4. Since the introduction of the first DDR memory back in 1998, each successive iteration of DDR has been brought to market to improve two key features: data bandwidth and data speed. DDR5 is now on the horizon, and it offers significant benefits over the previous version, DDR4.

This article will describe the various features incorporated into DDR5, how they compare to DDR4, the key benefits of DDR5 and the design challenges that will be presented as a result of this next iteration of DRAM technology. As with my earlier article, I turned to our third partner in Speeding Edge and one of the industry’s leading experts in IC technology, John Zasio. Once again, John’s assistance in developing this article was invaluable.

Meeting the Standard

As with every iteration of DDR memory, DDR5 and its features and performance characteristics are delineated in the industry standard that governs the technology, “Proposed DDR5 JEDEC Standard No. 79-5.” That standard still has a lot of blank areas surrounding DDR5. Still, a thorough writeup is available in a paper created by Micron® Technology, “Introducing Micron DDR5 SDRAM: More Than a Generational Update.” That document served as the basis for a fair amount of the information in this article. A key thing to remember is that there are no DDR5 products yet available on the Micron website; there are no chips or DIMMs that incorporate DDR5 product features and performance characteristics, and there is no information as to when DDR5 products will be shipped. In my previous article on DDR3 vs. DDR4, the projected time frame for DDR5 products was mid-year of 2021, and that remains a valid prediction.

DDR5 Improvements

Keeping in mind that each successive generation of DDR technology focuses on increased bandwidth and speed, John Zasio explains, “Basically DDR4 went up to 3.2 Gb/s, or 3200 Mb/s, and DDR5 starts there and goes up to 6400 Mb/s. It is significantly faster, but what they are claiming is that because of the efficiencies and the way it works, if you run both DDR4 and DDR5 at 3200 Mb/s, DDR5 is 36% faster. I don’t understand how that could be because you always lose some efficiency. For example, with DDR4, you don’t get exactly 3200 Mb/s because there is refresh, switching banks, etc. I think the way DDR5 gets the 36% increase in efficiency is because there are more banks open at one time to switch between things.”

Specifically, in the document published by Micron, the enhanced bandwidth performance of DDR5 is the key benefit, and it is broken down as follows:

- The DDR4 data rate is 3200 MT/s (Megatransfers per second).

- With DDR5 running at the same 3200 MT/s data rate, system simulations indicate an approximate performance increase of 1.36X in effective bandwidth.

- With the DDR5-4800 product implementation, the approximate, simulated performance increase is 1.87X, which is nearly double the effective bandwidth of DDR4-3200.
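The figures above can be sanity-checked with a little arithmetic. The sketch below is only an illustration: it assumes a 64-bit (8-byte) data bus per DIMM with ECC bits excluded, and the function names are hypothetical rather than taken from the Micron document. It computes the theoretical peak bandwidth and then separates the simulated 1.87X gain into the part explained by the raw data-rate increase and the remainder.

```python
# Peak-bandwidth arithmetic for the data rates quoted above.
# Assumption: 64 data bits (8 bytes) per DIMM, ECC bits not counted.
BUS_BYTES = 8


def peak_bandwidth_gb_s(data_rate_mt_s: float, bus_bytes: int = BUS_BYTES) -> float:
    """Theoretical peak bandwidth in GB/s for a data rate given in MT/s."""
    return data_rate_mt_s * bus_bytes / 1000.0


def residual_gain(total_gain: float, old_rate_mt_s: float, new_rate_mt_s: float) -> float:
    """Portion of a simulated gain that the raw data-rate ratio does not explain."""
    return total_gain / (new_rate_mt_s / old_rate_mt_s)


if __name__ == "__main__":
    print("DDR4-3200 peak GB/s:", peak_bandwidth_gb_s(3200))
    print("DDR5-4800 peak GB/s:", peak_bandwidth_gb_s(4800))
    print("Gain not explained by data rate:", round(residual_gain(1.87, 3200, 4800), 3))
```

Under these assumptions the raw data-rate ratio between DDR5-4800 and DDR4-3200 is 1.5X, so roughly 1.25X of the simulated 1.87X would have to come from architectural efficiency rather than from the faster interface.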

Similarly, in terms of data rates, DDR5 offers significant enhancements that are broken down as follows:

- DDR4 spans from 1600 MT/s to 3200 MT/s.

- DDR5 spans from 3200 MT/s to 6400 MT/s.

Additional benefits of DDR5 include the following:

- On-die ECC. John explains, “This is part of the reason for the increased effective bandwidth. In DDR4 and earlier, the controller needed to read the 72-bit word, correct the single-bit errors, and then write it back to the DIMM. On DDR5, this can all be done on the DIMM in the background.”

- DQ receiver equalization. John notes, “This has been used for a long time on high-speed Ethernet signals. It is digital signal processing inside the receiver that enables signals to be picked out from lossy lines when there is not a clear eye opening at the receiver.”

John notes, “There’s an interesting thing in [the Micron document] here about DIMMs. You can operate them in two channels as opposed to the previous one channel configuration. You can use the normal 72 bits, but you can also operate them in two channels of 40 bits each. So, you could run an independent left side and right side of the DIMM and have two streams going simultaneously so that you can get significant amounts of overlap. That’s significantly different.”

He expands on this point, explaining, “Almost all DDR applications are with microprocessors that have internal caches. When you load a line into the cache while that line is being worked through, the next line is being loaded. A microprocessor cache line is eight words of eight Bytes or a total of 64 Bytes. With DDR, this is four clock cycles. If you use a 40-bit word, this is four Bytes, so it takes eight clock cycles. But, apparently, with DDR5, you can have two things going simultaneously. As a result, you can have one instruction stream and one data stream, or you can have two different data streams. There are some subtleties as to why DDR5 is so much better.”
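John's cache-line arithmetic can be written out as a short sketch. It assumes that a 72-bit word carries 64 data bits and a 40-bit word carries 32 data bits, with the remaining 8 bits used for ECC, and that two transfers occur per clock cycle. The function names are illustrative only.

```python
# Cache-line fill arithmetic for a 64-byte cache line.
# Assumptions: 72-bit word = 64 data bits + 8 ECC bits,
#              40-bit word = 32 data bits + 8 ECC bits,
#              double data rate = two transfers per clock cycle.
CACHE_LINE_BYTES = 64


def transfers_per_line(data_bits: int, line_bytes: int = CACHE_LINE_BYTES) -> int:
    """Number of bus transfers needed to move one cache line."""
    return (line_bytes * 8) // data_bits


def clocks_per_line(data_bits: int) -> int:
    """Clock cycles per cache line, at two transfers per clock."""
    return transfers_per_line(data_bits) // 2


def fill_time_ns(data_bits: int, data_rate_mt_s: float) -> float:
    """Time in nanoseconds to transfer one cache line at a given data rate."""
    return transfers_per_line(data_bits) / data_rate_mt_s * 1000.0


if __name__ == "__main__":
    for bits in (64, 32):
        print(bits, "data bits:", clocks_per_line(bits), "clocks,",
              fill_time_ns(bits, 3200), "ns at 3200 MT/s")
```

A single 32-bit channel takes twice as many clocks per cache line as the 64-bit bus, which matches the four and eight clock cycles John describes. The benefit comes from running two such channels independently, so two cache-line fills can overlap.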

There are also some smaller changes from DDR4 to DDR5. For instance, with DDR5, the supply voltage drops from 1.2 V to 1.1 V. The pulldown drivers in DDR5 are the same as those found in DDR4, but there are some subtle changes in the way they work. As noted in my previous article, there is a mode of operation in DDR4 that is called DBI (data bus inversion). In this mode, the controller looks at the number of 0s and 1s in each byte of information going out. Normally, a 1 is a high level while a 0 is a low level. If there are more 0s than 1s, the DBI logic inverts the data and sends out a signal saying “this is inverted.” This means that there are never more than half of the wires pulled down at any one time, which reduces the peak load.
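The inversion rule described above can be sketched in a few lines. This is a minimal illustration of the idea for a single byte, not the signaling defined in the JEDEC standard; the function names and the boolean "inverted" flag are assumptions made for the example.

```python
# Data bus inversion (DBI) sketch for one byte.
# A 0 bit is treated as a wire pulled low. If more than half of the
# eight data bits are 0, the byte is inverted and a flag is raised.
from typing import Tuple


def dbi_encode(byte: int) -> Tuple[int, bool]:
    """Return (value driven on the wires, inverted flag) for one byte."""
    if not 0 <= byte <= 0xFF:
        raise ValueError("byte must be in the range 0..255")
    zeros = 8 - bin(byte).count("1")
    if zeros > 4:
        return (~byte) & 0xFF, True
    return byte, False


def dbi_decode(wire_value: int, inverted: bool) -> int:
    """Recover the original byte at the receiver."""
    return (~wire_value) & 0xFF if inverted else wire_value


if __name__ == "__main__":
    for value in (0x00, 0x01, 0x0F, 0xFF):
        wire, flag = dbi_encode(value)
        print(f"{value:#04x} -> wire {wire:#04x}, inverted={flag}")
```

With this rule, no encoded byte ever has more than four of its eight data wires at the low level, and the receiver recovers the original value by undoing the inversion whenever the flag is set.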

John notes, “In DDR5 they have moved this operation over to the address and control bus. This method decreases both the power dissipation and the amount of SSN (simultaneous switching noise) that you get. This means that if you go from all 1s to all 0s, at the same clock tick, you will have a much greater current spike than if you go from all 0s to half 0s which is the worst situation you can get.”

One of the things that have not improved dramatically over the many successive iterations of DDR technology is the latency or time required to get data back from memory. “Way back, this rate was 30 nanoseconds, and now it’s down to 15 nanoseconds,” John states. “As the CPU cycles have increased, it takes a lot more of those cycles before you get the data back. You indeed get a lot more bandwidth and you get a lot more data back, but it takes more clock cycles to go out to the memory device and get that data. The speed of that operation is essentially the same in DDR5 as it is in DDR4.”
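To put John's point in numbers, the sketch below converts a fixed latency in nanoseconds into clock cycles. The CPU clock frequencies are hypothetical values chosen only for illustration, and the memory clock is assumed to be half the data rate.

```python
# Latency expressed in clock cycles.
# Assumptions: the CPU frequencies below are illustrative, and the
# memory clock runs at half the data rate (two transfers per clock).


def latency_in_cycles(latency_ns: float, clock_ghz: float) -> float:
    """Number of clock cycles that elapse during a latency given in ns."""
    return latency_ns * clock_ghz


def memory_clock_ghz(data_rate_mt_s: float) -> float:
    """Memory clock in GHz for a data rate given in MT/s."""
    return data_rate_mt_s / 2.0 / 1000.0


if __name__ == "__main__":
    # Hypothetical older CPU at 0.5 GHz versus a hypothetical 4 GHz CPU.
    print("30 ns at 0.5 GHz:", latency_in_cycles(30, 0.5), "CPU cycles")
    print("15 ns at 4.0 GHz:", latency_in_cycles(15, 4.0), "CPU cycles")
    # The same 15 ns seen from the memory clock at two data rates.
    for rate in (3200, 6400):
        cycles = latency_in_cycles(15, memory_clock_ghz(rate))
        print(f"15 ns at {rate} MT/s: about {round(cycles)} memory clocks")
```

Even though the latency in nanoseconds has halved, the number of cycles spent waiting grows as the clock rate rises, which is the trade-off described in the quote.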

As is the case with many technology evolutions in the component world, DDR5 is built in a smaller-geometry semiconductor process than DDR4, which means that the product developer can get a lot more memory in a chip with DDR5. John notes, “As soon as DDR5 is out and available on a regular basis, you’re going to want to move to it. While the memory bits in DDR4 ranged from 2 Gb to 16 Gb per chip, in DDR5 it ranges from 8 Gb all the way to 64 Gb. That’s a 4X increase in the number of bits, which is pretty significant.”

PCB Design Challenges

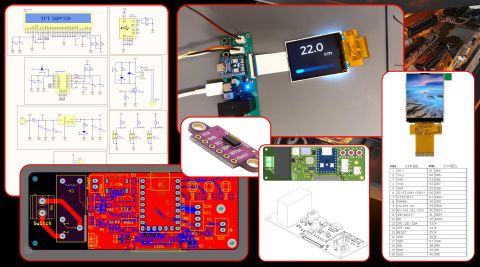

Since there are many similarities between DDR4 and DDR5, many of the considerations from the PCB design perspective remain the same. However, the expanded capabilities and performance benefits of DDR5 do create some design challenges on the PCB side of things.

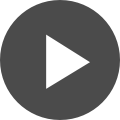

John explains, “Because you have the data rates doubling per wire with DDR5, discontinuities from vias and connectors are twice as important. There’s going to have to be a lot more control over how you design the vias and connectors. It’s almost like designing a 10 Gb/s trace on a PCB. You can’t use long via stubs, and you can’t do a bunch of stuff that we used to do on lower speed implementations. In using DDR5, we’re going to have to be much more careful and do more simulation when designing the board.”

He adds, “As far as the topology, it’s not very much different. It’s kind of like the same number of wires between the processor and the DIMM.”

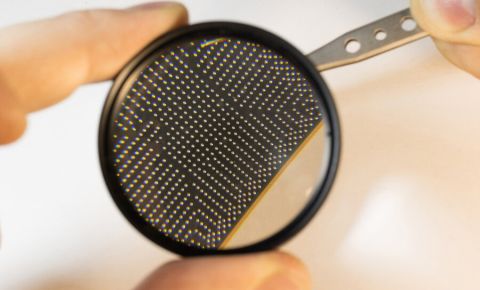

In terms of size, the DDR5 chip is a 0.8 mm pitch device with an array of solder balls. John notes, “A 0.8 mm pitch is just on the borderline of what you can drill for holes in a very thick board. But these devices don’t have a solid array of balls. There are three rows of balls and then two empty rows and then three more rows of balls. The balls are sort of on the outer edges of the chip, and there is a lot of free space in the center. You can route traces from the balls over to the holes. When you are doing stuff on a DIMM, it’s a little easier than trying to do it on an 18 x 24-inch PCB. You can use smaller drills, and you can use microvias. In terms of PCB assembly, I think you are going to see a lot more done with the laser-drilled microvias because they only go down into the PCB one or two layers and you eliminate the via stub. The impact of a via stub is the big thing at these speeds that can destroy things. However, if you use buffered DIMMs, you are OK even on a thick board.”
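One common first-order way to see why via stubs matter is the quarter-wave approximation, in which an unterminated stub resonates at roughly f = c / (4 · L · √Dk). The sketch below applies that approximation. The stub lengths and the dielectric constant of 4.0 are assumed values for illustration, and the estimate ignores pad and barrel capacitance, which in practice pushes the resonance lower. It is not a substitute for the board-level simulation John recommends.

```python
# Quarter-wave via-stub resonance estimate (first-order approximation).
# Assumptions: stub lengths and Dk = 4.0 are illustrative values, and
# via pad/barrel capacitance is ignored.
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def stub_resonance_ghz(stub_length_mm: float, dk: float = 4.0) -> float:
    """Approximate quarter-wave resonant frequency of a via stub in GHz."""
    length_m = stub_length_mm / 1000.0
    return SPEED_OF_LIGHT_M_S / (4.0 * length_m * math.sqrt(dk)) / 1e9


def fundamental_ghz(data_rate_mt_s: float) -> float:
    """Fundamental frequency of an alternating 1-0 pattern, in GHz."""
    return data_rate_mt_s / 2.0 / 1000.0


if __name__ == "__main__":
    print("6400 MT/s fundamental:", fundamental_ghz(6400), "GHz")
    for stub_mm in (0.5, 2.0, 6.0):
        print(f"{stub_mm} mm stub resonates near "
              f"{stub_resonance_ghz(stub_mm):.1f} GHz")
```

With these assumed values, a 2 mm stub resonates near 18.7 GHz, while a 6 mm stub on a thick board falls to roughly 6.2 GHz, much closer to the 3.2 GHz fundamental of a 6400 MT/s signal and its harmonics. Shorter stubs, such as those left by microvias, keep the resonance well away from the signal band.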

Summary

The next iteration of DDR technology, DDR5, will be available in the not-too-distant future, and it will deliver significant improvements in bandwidth over previous iterations of DDR technology. The increase in data rates with DDR5 is substantial, and because of those higher speeds and the small form factor (0.8 mm pitch package), PCB designers will have to pay closer attention to how the vias, via stubs, and connectors on the board are designed.
