DDR3 vs. DDR4 — Lots Of Memory At Very High Speed

When it comes to memory capacity and how it’s incorporated into present-day and next-generation designs, much like every other aspect of high-speed design, it’s all about accessing large amounts of data at very high speeds.

The evolution of double data rate (DDR) memory has been pretty dynamic over the years. The original DDR was first introduced in 1998 followed by DDR2 in 2003, DDR3 in 2007, DDR4 in 2014, and DDR5, which has an estimated introduction date of sometime this year. Obviously, more memory is desirable, but the memory in use needs to provide data faster. DDR4 offers significant benefits, but design considerations have to be addressed at the PCB level to ensure the entire system will work right the first time and every time. This article will provide a comparison of DDR3 vs. DDR4, the challenges associated with them, the different styles and tools used for memory design, application notes, and the expertise necessary to ensure that memory will work as designed.

For this article, I turned to John Zasio, the co-author of our two books, Right the First Time, A Practical Handbook on High-Speed PCB and System Design, Volumes 1 and 2. He has more than 50 years of experience in electrical engineering. His expertise spans a broad range of printed circuit board (PCB), application-specific integrated circuit (ASIC), integrated circuit (IC), gate array, standard cell, high-speed circuit, and power subsystem design topics. John serves as Technical Advisor to Speeding Edge, and we always say that he is to IC design what Lee Ritchey, Founder and President of Speeding Edge, is to board design. John’s contribution to this article was invaluable and very much appreciated.

Overview of DDR3 vs. DDR4

John explains, “Upon leaving IBM in 1969, I went to a company called Mascor. That’s when dynamic random access memories (DRAMs) came out. I remember an Intel sales engineer coming around and telling us about this wonderful device they had that was much better than core memories. It had 1,000 bits. I don’t remember what the speed was, but the sales engineer told us that the only thing we had to worry about with DRAM was that we had to tell it what to remember every four milliseconds or it would forget. This is now known as Refresh. That’s what the ‘dynamic’ in DRAM means. It takes a small amount of charge. You can count the electrons put into a tiny little capacitor, and that charge bleeds off after some time. So, the memory has to go back and refresh itself. It reads the small signals that remain, and then it goes and puts the charge back.”

When it comes to comparing DDR3 vs. DDR4, several different factors come into play. These include data rate (the speed at which a machine can read data from or write data into the RAM), voltage levels (they are lower in DDR4), the refresh mechanism and how often the memory refreshes, the time between the transmission of a command and its execution, the required memory capacity, cost, and the point in time when you will need to switch from DDR3 to DDR4 (aka planned obsolescence).

DDR Routing and Speed

John notes, “DDR4 is twice as fast as DDR3. With each generation of DDR that comes out, the speed is increased by a factor of two.”

DDR3 data rates range from 800 MT/s to 2133 MT/s (megatransfers per second, often loosely quoted as MHz). DDR3 cannot go above 2133 MT/s. In practice, the data rate for DDR3 typically falls between 1600 MT/s and about 1800 MT/s. The data rate for DDR4 ranges from 1600 MT/s to 3200 MT/s.

“Double data rate memories have two classes of pins,” John states. “There are address pins (which are typically called address/control), and sometimes they are multiplexed, such as those found in DDR. You put out a control word, and it gets latched into the memory to set up registers or some other device that will operate. Then, you put out an address, and the clock starts ticking away as the data is transferred.”

“With DDR, the address typically comes out of the controller, and it goes past every memory chip and touches all of them. This results in multiple loads on each wire. The data pins go directly from the controller to each memory chip. Typically, there is one driver and one load on the data pins. This is a much cleaner path. And, because it’s a cleaner path, it runs at twice the speed. There’s a data transfer on the data pins when the clock rises and another when the clock falls. This is in contrast to the address/control pins, where there’s only one piece of information per clock period. If you have a 3200 Mbps data transfer at the top end of DDR4, you are dealing with a 1.6 GHz clock.”
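The relationship John describes between data rate and clock frequency is simple arithmetic, and a short Python sketch makes it explicit. The function names are illustrative only. The sketch assumes two transfers per clock on the data pins and one on the address/control pins, as stated in the quote.

```python
def clock_mhz(data_rate_mtps: float) -> float:
    """Clock frequency in MHz for a double data rate interface.

    Data pins transfer on both the rising and the falling clock edge,
    so the clock runs at half the per-pin data rate.
    """
    return data_rate_mtps / 2.0


def address_rate_mtps(data_rate_mtps: float) -> float:
    """Address/control pins carry one piece of information per clock period."""
    return clock_mhz(data_rate_mtps)


if __name__ == "__main__":
    for rate in (1600, 2133, 3200):
        print(rate, "MT/s data ->", clock_mhz(rate), "MHz clock")
```

For the 3200 Mbps case in the quote, `clock_mhz(3200)` gives 1600 MHz, which is the 1.6 GHz clock John mentions.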

John continues, “The good news is that DDR4 is twice as fast as DDR3, but the not-so-good news is that you have to start worrying about transmission line length matching and transmission line quality. This is similar to what we had to do with the fiber-optic connections of 15-20 years ago.”

Additional elements that come into play include the glass weave of the laminate, the all-important PCB stackup, and the inductance of vias. John says, “These are all issues of concern on the board side because of the speed of DDR4. The same kinds of things that apply to a 3, 4, or 5 Gb/s Internet link now start to apply to these data signals. You can no longer buy just a cheap piece of laminate material, put the PCB together, and expect it to work.”

In addition to the foregoing, the voltage levels are higher in DDR3 than in DDR4 (1.5 V vs. 1.2 V, respectively).

John explains, “A bigger issue, aside from the change in voltage level, is that in DDR3 there is a push-pull driver. This means the terminator on the DIMM (dual inline memory module), or at another location, tended to be the equivalent of a 50-ohm resistor to 0.75 V, which is half the power supply voltage. The driver would either pull it up or pull it down.”

Structure of DDR3 vs. DDR4 I/Os

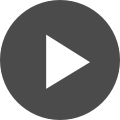

The operational differences in DDR3 vs. DDR4 are depicted in Figure 2.

John states, “On the left-hand side of Figure 2, there is a driver for DDR3, which is push-pull. The upper P-channel transistor pulls that wire up towards the 1.5 volt supply. On the bottom is the N-channel transistor, which pulls the wire down towards ground. At the other end of the wire are two resistors inside the memory chip that are the terminators for this line. These are typically two 100-ohm resistors, which is equivalent to 50 ohms to half of the supply voltage.

When you pull down on the driver, the current comes through the top resistor, through the channel (the coax wire), and into ground. For DDR3, the total amount of current from Vdd to GND is the same for a “1” or a “0”. It just changes direction through the wire. You need to have decoupling on the PCB to take care of the high-speed changes in the path, but the low-frequency or DC current is constant and requires very little low-frequency decoupling.”
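Both of John's statements about DDR3 can be checked with a small DC resistor model: that two 100-ohm resistors look like 50 ohms to half the supply, and that the Vdd current is the same for a “1” and a “0”. The Python sketch below is illustrative only. The driver on-resistance of 34 ohms is an assumed value that does not come from the article, and the model ignores all transient and transmission line effects.

```python
def thevenin_split_termination(vdd, r_up, r_down):
    """Thevenin equivalent of a pull-up/pull-down terminator pair."""
    r_th = r_up * r_down / (r_up + r_down)
    v_th = vdd * r_down / (r_up + r_down)
    return r_th, v_th


def ddr3_supply_current(vdd, r_up, r_down, r_on, drive_high):
    """DC current drawn from Vdd for one line with a push-pull driver.

    r_on is the driver on-resistance (assumed equal for both transistors).
    """
    r_th, v_th = thevenin_split_termination(vdd, r_up, r_down)
    if drive_high:
        # P-channel connects the line to Vdd through r_on.
        v_line = (v_th * r_on + vdd * r_th) / (r_on + r_th)
        i_driver = (vdd - v_line) / r_on
    else:
        # N-channel connects the line to ground; it draws nothing from Vdd.
        v_line = v_th * r_on / (r_on + r_th)
        i_driver = 0.0
    i_pull_up = (vdd - v_line) / r_up  # current through the top terminator
    return i_driver + i_pull_up


if __name__ == "__main__":
    print(thevenin_split_termination(1.5, 100.0, 100.0))
    # Assumed 34-ohm driver; compare the Vdd current for a 0 and a 1.
    print(ddr3_supply_current(1.5, 100.0, 100.0, 34.0, drive_high=False))
    print(ddr3_supply_current(1.5, 100.0, 100.0, 34.0, drive_high=True))
```

With these values the terminator reduces to 50 ohms at 0.75 V, and the two supply currents agree (about 12 mA each in this model), which is why the low-frequency decoupling burden is small.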

“In contrast, for DDR4, on the right-hand side of Figure 2, the P-channel transistor is used only for equalization. It’s basically an N-channel driver pulling that wire down from 1.2 volts. The terminator is over on the right, and it goes to 1.2 volts.

What’s important about this is the current is on for a down level and off for an up level. This means you have large Vdd current changes and a much more difficult decoupling problem than for DDR3.”
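To see how large the supply current swing can be, here is a minimal Python sketch of the DDR4 case under the same kind of DC resistor model. The 50-ohm terminator and 34-ohm driver on-resistance are assumptions chosen for illustration and are not taken from the article. A line at the high level is modeled as drawing no DC current because both ends sit at 1.2 V.

```python
def ddr4_line_current(vdd, r_term, r_on, drive_high):
    """DC current drawn from Vdd for one line terminated to Vdd."""
    if drive_high:
        return 0.0  # both ends of the line sit at Vdd, so no DC current flows
    return vdd / (r_term + r_on)  # terminator and N-channel driver in series


def bus_supply_current(bits, vdd=1.2, r_term=50.0, r_on=34.0):
    """Total DC termination current for a group of lines (1 = high, 0 = low)."""
    return sum(ddr4_line_current(vdd, r_term, r_on, bool(b)) for b in bits)


if __name__ == "__main__":
    print("all ones :", bus_supply_current([1] * 8))
    print("all zeros:", bus_supply_current([0] * 8))
```

In this model a byte lane goes from zero current with all lines high to roughly 114 mA with all lines low, which illustrates the kind of step the decoupling network has to absorb.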

John continues, “When all the wires switch at the same time from a high level to a low level, there can be a very large power supply current change and a much larger power dissipation when all the wires stay at the low level. One of the interesting features to help moderate this in DDR4 is a DBI signal (data bus inversion). This means something on the controller looks at the number of zeroes and ones in each byte of information going out. Normally, a 1 is a high level and a 0 is a low level. If there are more 0s than 1s, it simply inverts the data word and sends out a signal saying, ‘this is inverted.’ This means that you never have more than half of the wires pulled down, which reduces the peak load. In addition, it decreases the transient power by a factor of two. But this is a logic feature, not a board feature.”
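The inversion rule John describes can be sketched in a few lines of Python. This is a minimal illustration of the logic as stated in the quote, not the controller's actual implementation. The function names are hypothetical, and the "inverted" flag is a plain boolean; how it is signaled on the wire is left out.

```python
from typing import Tuple


def dbi_encode(byte: int) -> Tuple[int, bool]:
    """Return (byte_to_drive, inverted) following the rule in the quote.

    If the byte has more 0s than 1s, drive its bitwise inverse and flag it.
    """
    byte &= 0xFF
    zeros = 8 - bin(byte).count("1")
    if zeros > 4:
        return (~byte) & 0xFF, True
    return byte, False


def dbi_decode(byte: int, inverted: bool) -> int:
    """Undo the inversion at the receiving end."""
    return (~byte) & 0xFF if inverted else byte & 0xFF


if __name__ == "__main__":
    for value in (0x00, 0x0F, 0x01, 0xFE):
        driven, inverted = dbi_encode(value)
        print(f"{value:08b} -> {driven:08b} inverted={inverted}")
```

After encoding, no byte has more than four data wires at the low level, and `dbi_decode` always recovers the original value.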

In terms of board features, because the speeds are faster, there is less room for adjustments. For example, if you are running at 3.2 Gb/s, each bit lasts about 312 ps. John notes, “312 ps is the bit period. Out of that, you have to have some sort of allowance for jitter, for setup and hold on the receivers, and for signal interference in the wires. This means that the lines have to be very tightly tuned. As a result, you probably can’t afford to have more than a few, maybe up to five, percent of that 312 ps allocated to line length variation. For DDR4, it’s recommended that lines be tightly tuned to the 5 ps range.”
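The timing budget above can be worked through numerically. The Python sketch below computes the bit period, the share allowed for length variation, and the trace length that a given delay corresponds to. The effective dielectric constant of 4.0 is an assumption for a generic laminate and is not from the article; real values depend on the stackup.

```python
import math

C_MM_PER_PS = 0.299792458  # speed of light in vacuum, in mm per picosecond


def bit_period_ps(rate_gbps: float) -> float:
    """Duration of one bit in picoseconds."""
    return 1000.0 / rate_gbps


def skew_budget_ps(rate_gbps: float, fraction: float) -> float:
    """Share of the bit period allocated to line length variation."""
    return bit_period_ps(rate_gbps) * fraction


def length_for_delay_mm(delay_ps: float, er_eff: float) -> float:
    """Trace length whose propagation delay equals delay_ps.

    er_eff is the effective dielectric constant seen by the signal (assumed).
    """
    ps_per_mm = math.sqrt(er_eff) / C_MM_PER_PS
    return delay_ps / ps_per_mm


if __name__ == "__main__":
    print("bit period (ps):", bit_period_ps(3.2))
    print("5% budget (ps):", skew_budget_ps(3.2, 0.05))
    print("5 ps in mm at er_eff=4.0:", length_for_delay_mm(5.0, 4.0))
```

Under these assumptions, five percent of the bit period is a little under 16 ps, and a 5 ps tuning target corresponds to roughly 0.75 mm of trace.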

Even with DDR3, the variation in line lengths inside the IC package is much larger than that. John states, “When you are designing the paths, you have to go to the chip manufacturer to find out the line length of every line inside the IC package and the dielectric constant and speed of those lines. Then, the final number has to be subtracted from the variation that is on the board.”
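One way to picture the package compensation John describes is to match total flight time (package plus board) rather than board length alone. The Python sketch below is a simplified illustration. The pin names, package lengths, and dielectric constants are hypothetical placeholders; real numbers have to come from the chip manufacturer and the stackup.

```python
import math

C_MM_PER_PS = 0.299792458  # speed of light in vacuum, in mm per picosecond


def delay_ps(length_mm: float, er_eff: float) -> float:
    """Propagation delay of a line of the given length and dielectric constant."""
    return length_mm * math.sqrt(er_eff) / C_MM_PER_PS


def matched_board_lengths(package_mm, er_package, er_board, min_board_mm):
    """Board trace length per pin so that package + board delay is equal.

    The pin with the longest package route gets min_board_mm on the board;
    every other pin gets extra board length to make up the difference.
    """
    pkg_delay = {pin: delay_ps(mm, er_package) for pin, mm in package_mm.items()}
    target = max(pkg_delay.values()) + delay_ps(min_board_mm, er_board)
    board_ps_per_mm = delay_ps(1.0, er_board)
    return {pin: (target - d) / board_ps_per_mm for pin, d in pkg_delay.items()}


if __name__ == "__main__":
    # Hypothetical in-package lengths in mm for three data pins.
    package = {"DQ0": 4.0, "DQ1": 7.5, "DQ2": 6.0}
    for pin, mm in matched_board_lengths(package, 3.4, 3.8, 30.0).items():
        print(pin, round(mm, 3), "mm on the board")
```

The design choice here is to convert everything to delay first, because the package and the board generally have different dielectric constants and therefore different signal speeds.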

Even with the preceding challenges, there are a number of features in DDR4 that are not in DDR3. Some of these features are very convenient for those product developers designing high-speed products, such as servers and routers. “One of the helpful features in DDR4 is testing features,” John continues. “I am used to dealing with processors that have lots of DIMMs. Recently, I had a design that had four memory DIMMs and four controllers, which equates to having many hundreds of wires coming out of a really big BGA. There is no JTAG test that tests every wire to see if it is soldered correctly. So, we made little test boards that we plugged into the DIMM socket. We put a piece of information out, such as an address wire on this jumper board, put it on the data wire, and brought it back. When we wiggled the address wire, we expected the data wire to move. This operation was similar to having JTAG. It was a tedious and labor-intensive effort, but it was worth it to us to find any cold solder joints or intermittent connections before we got the system all together and then had a problem. In DDR4, this kind of functionality is built directly into the chip.”

“In addition, there is a test mode that says ‘I am set up for test mode, and I am not going to worry about speed at all. I am looking for the mixture of DC 1s and 0s on the address lines.’ There is a pattern that you put on the address lines, and it comes out on these data bits at very low speeds. This makes for really nice testing.”
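The jumper-board approach John describes, wiggling one address wire and watching the corresponding data wire, is essentially a walking-ones test. The Python sketch below simulates it against a made-up loopback model. It does not represent the actual pin mapping of the DDR4 built-in test mode; the fault model (opens read back as 0, shorts behave as wired-OR) is an assumption for illustration.

```python
def loopback(pattern, n_wires, opens=(), shorts=()):
    """Hypothetical fixture: address bit i is looped back to data bit i.

    opens  -- wire indices with a broken joint (always read back as 0)
    shorts -- pairs (a, b) of bridged wires (modeled as wired-OR)
    """
    out = pattern
    for a, b in shorts:
        if (pattern >> a) & 1 or (pattern >> b) & 1:
            out |= (1 << a) | (1 << b)
    for i in opens:
        out &= ~(1 << i)
    return out & ((1 << n_wires) - 1)


def walking_ones_test(read_back, n_wires):
    """Drive one wire high at a time and report any mismatch on read-back."""
    faults = []
    for i in range(n_wires):
        driven = 1 << i
        seen = read_back(driven)
        if seen != driven:
            faults.append((i, driven, seen))
    return faults


if __name__ == "__main__":
    bad_board = lambda p: loopback(p, 8, opens=(3,), shorts=((1, 2),))
    for wire, driven, seen in walking_ones_test(bad_board, 8):
        print(f"wire {wire}: drove {driven:08b}, saw {seen:08b}")
```

Because the patterns are static 1s and 0s, a test like this can run at very low speed, which matches the point of the test mode described in the quote.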

Fabrication of DDR Modules

In large memories, there are redundant rows and columns to account for any manufacturing defects. Essentially, one speck of dirt can wipe out a transistor and cause a row to stop working. Previously, there were little fuses on the surface of each memory chip. Part of production testing was to test every row, every column, and every bit arrangement and then use the fuses to select which columns and rows were good and which ones should be ignored.

John explains, “Now, with today’s memory chips, there are at least one or two rows available to the customer so that this can be done electrically in the field as bits wear out over time. Instead of having to scrap the memory and call a service agent, you can electronically say, ‘I got this bad row on this chip, so I am going to change it.’ If you think about the wafers being manufactured today, finding defects can be very overwhelming. You have wafers that are 12 inches (300 mm) in diameter, and a whole 100% scan is done on every wafer. They find defects on every layer of masking, and there are 40 layers of masking. This represents huge amounts of data. All of this is gathered with laser scanners and is fed back through fiber optics to servers.”

Different Styles of Design

There are differences between DDR3 and DDR4 memories, and there are also differences in the styles used to design memory into a product.

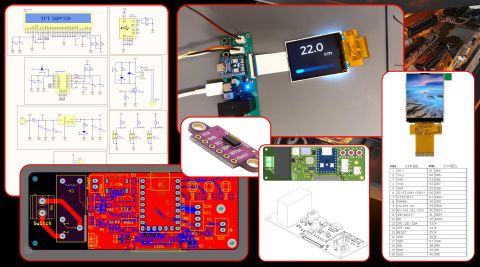

John explains, “There are people who design with DIMMs, and there are people who design from the perspective of ‘let’s put the chip on the PCB right next to the controller.’ But, when you put the chip on the PCB, you can have some nasty problems. Memory chips have pins that are pretty close together. On a big server board, you can have a PCB that is 1/10 of an inch thick and has 20 layers. In this configuration, it is very difficult to get the memory chips on the board and tune the lines. My customers who are dealing with big memories in really high-speed networking products use memory DIMMs. They don’t even try to put the chips on the board. You can put chips on the board if you are going to do a tiny little controller or if you are making an end product such as a cell phone where there’s a very thin board that’s manufactured with a build-up process instead of drilled holes.”

There are two styles of DIMMs. One style is buffered, which is also referred to as registered. The other style is unbuffered. Unbuffered means the address/control (ADD/CTR) wires coming out of the controller go into the DIMM, run serially down the length of the DIMM, and touch every chip on the DIMM to get the address and the control signals to those chips. Because there are so many chips on the lines, the signal integrity challenge becomes very formidable. The line impedances change at each load and vary with manufacturing tolerances, so a lot of simulation has to be done to ensure that the product will work at speed.

John notes, “If you use a buffered DIMM, then the address and the control come onto the DIMM, and there is one load just like on data pins. There’s one load off the driver and one load on the DIMM. The resulting line is very clean. So, the chances of getting the memory right are an order of magnitude better if you use a registered DIMM versus an unbuffered one. There is another choice: DIMMs that are either 64 bit or 72 bit. The 72-bit version is referred to as ECC (error-correcting code). If you have these extra bits, there is enough information in them that the controller can detect a bad bit coming back. If you read something and one bit is wrong, the controller can detect which bit is wrong and correct it by flipping it. This is a very big deal in DRAMs because of the single-bit errors caused by cosmic particles or alpha particles coming from ‘the heavens’ that can go through 40 feet of lead. When one of these goes through the memory chip, it wipes out a memory bit. This is guaranteed to periodically happen on big servers. If you have the extra bits, you can easily correct the problem.”
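To show how eight extra bits are enough to locate and flip a single bad bit in a 64-bit word, here is a minimal Python sketch of a textbook Hamming code with an overall parity bit (single-error correction, double-error detection). It is an illustration of the principle only. The article does not say which code a given memory controller uses, and the function names and bit layout here are assumptions.

```python
PARITY_POSITIONS = [1, 2, 4, 8, 16, 32, 64]
# Positions 1..71 that are not powers of two hold the 64 data bits.
DATA_POSITIONS = [p for p in range(1, 72) if p & (p - 1) != 0]


def ecc_encode(data: int) -> list:
    """Encode 64 data bits into a 72-bit word (list of 0/1, index 0..71)."""
    word = [0] * 72
    for i, pos in enumerate(DATA_POSITIONS):
        word[pos] = (data >> i) & 1
    for p in PARITY_POSITIONS:
        # Parity over every position whose index has bit p set.
        word[p] = sum(word[pos] for pos in range(1, 72) if pos & p) & 1
    word[0] = sum(word) & 1  # overall parity over the other 71 bits
    return word


def ecc_decode(word: list):
    """Return (data, status); status is 'ok', 'corrected' or 'uncorrectable'."""
    word = list(word)
    syndrome = 0
    for pos in range(1, 72):
        if word[pos]:
            syndrome ^= pos  # XOR of set positions points at a single bad bit
    overall_odd = sum(word) & 1
    if syndrome == 0 and not overall_odd:
        status = "ok"
    elif overall_odd and syndrome < 72:
        word[syndrome] ^= 1  # flip the bad bit (index 0 is the parity bit itself)
        status = "corrected"
    else:
        status = "uncorrectable"
    data = 0
    for i, pos in enumerate(DATA_POSITIONS):
        data |= word[pos] << i
    return data, status


if __name__ == "__main__":
    stored = ecc_encode(0x0123456789ABCDEF)
    stored[37] ^= 1  # simulate a particle strike on one bit
    value, status = ecc_decode(stored)
    print(hex(value), status)
```

In this sketch any single flipped bit among the 72 is corrected, while two flipped bits are reported as uncorrectable instead of being silently miscorrected.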

“The cost of these extra ECC bits is nominal compared to having a processor that hangs and doesn’t operate as it should,” he continues. “With a registered DIMM, the board design becomes much simpler because you have a single driver and a single load. You don’t have to worry about all of the manufacturing tolerances as the wires go across the DIMM. All of this costs a couple of dollars per DIMM. But, if you are dealing with a big machine that costs $100,000 or $1,000,000, an extra buck is not a big deal to make sure your design is going to work right the first time and all the time. Without the registers, you are dealing with the manufacturing tolerances of the wire lengths on the DIMM and the capacitance variations on each one of the chips, so you might not meet all the corner cases, and you might have some errors periodically. That’s why I make the recommendation to all my customers to use registered DIMMs.”

Application Notes, Tools and Design Expertise

Just as we have noted in several sections of our book, relying on application notes from the chip vendor is often not the most viable path to follow. John explains, “I had a customer a couple of years ago that used an FPGA and an RLDRAM running at 2.4 Gb/s. The application note impedances on the address/control lines were wrong, but the chip vendor would not guarantee that the part would work unless the customer used the impedances which had been proven to work on the test board.

We ended up simulating the part and its operations with HyperLynx to determine if the part was going to work with the vendor’s poor transmission line impedances. The customer did not have the engineering experience and expertise to question the application notes, so they built the board to them, but it definitely was not the best way to build it. The challenge is that the chip vendors themselves don’t always understand memory interfaces, the transmission line requirements, the necessity of getting multiple chips in a row, and the impedance changes,” John adds. “So, if they don’t understand those factors, it’s likely that their application notes are going to be flawed.”

How Do You Know When to Move to the Next Product Iteration?

As the speeds of network processors have increased, there is a corresponding need for faster memory. But, as John notes, “It takes a certain number of nanoseconds for a processor on a server to say, ‘I need a piece of information’ from the memory and then to go to the cache, which is usually an on-chip block of memory of recently used information. If the information is not there, the instruction is to ‘go out to the main memory’ and find it. All this takes time to go out through the wires into DRAM and then get the information back into the controller chip.”

“Interestingly enough, the actual number of nanoseconds to access the memory has not changed substantially over the last ten years. What has happened is that, first, the clock rate has changed. The amount of data that you can get back once you have initiated that request has doubled every few years. Second, the semiconductor transistors have gotten smaller, so you can put more memory into the memory chip. As shown in Table 1, the quantity of memory has gone up enormously. We’re going up to 16 Gb per memory chip. We have twice as much actual data on each chip for each generation at twice the speed. This is important for someone who is designing networking processors, routers, or servers. They need to keep up with the state of the art, which means faster processors and faster memories.”

In terms of toolsets, simulators can assist in the design of memory, but they are complex and require a high level of engineering expertise to use. John explains, “There are simulators that can help with the design process, but it is really, really difficult to get all of the necessary information into the programs. It’s like having very complicated spreadsheets where the calculations can be done, and an answer is given, but the answer is only as good as the numbers you put into the spreadsheet. You have to have a very good model of the transmission line, the effects of the glass weave and the via stubs, the pins on the package, and the trace lengths inside the package from the controller chip to the board. These tools can be very good, but setting up the models can be very tedious. What this type of design effort requires is someone who has a lot of experience dealing with extreme detail. This is not a skill you acquire overnight.”

Summary

The good news is that there are products that operate at really high speeds and have lots of fast memory. Based on the evolution of technology thus far, memory capacities will only continue to increase. The challenge is understanding how DDR3 and DDR4 differ and what is required at the board level to ensure these designs will operate correctly.
