How IBM’s 5 nm Transistors Will Enable Internet of Things Deep Learning and Other Technologies

My great-grandmother was born at a time when ordinary people still got around by horse and carriage. By the end of her life, she had seen jet airplanes, computers, and spacecraft. Technology accelerated rapidly in the last century, and this century promises more of the same. Moore’s Law has been one measure of the pace of advancement. While many experts have predicted its doom, Moore’s Law stands today. The most recent proof of electronic advancement is IBM’s breakthrough in transistor size.

IBM recently fabricated 5 nm transistors that will greatly enhance computing speed and reduce power requirements. This discovery comes just in time, as processing and power constraints are holding back several emerging industries. This new type of transistor will enable technologies such as artificial intelligence (AI), the Internet of Things (IoT), and autonomous vehicles.

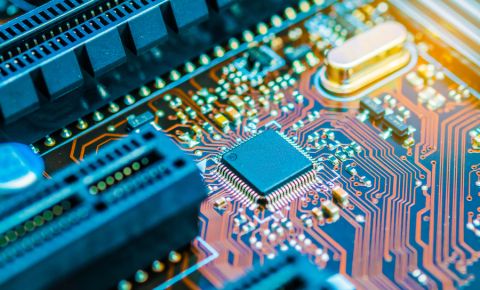

5 nm Transistors

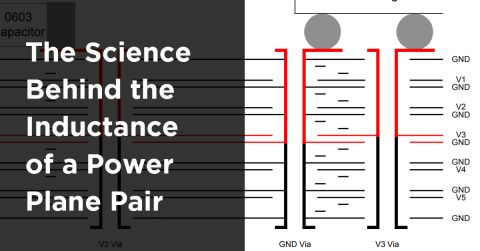

IBM published a blog post last week detailing its recent discovery of a 5 nm transistor architecture. The company achieved this feat by breaking from the current vertical FinFET arrangement and opting for a horizontal layering scheme. This new layout will raise the maximum number of transistors on a chip from 20 billion to 30 billion. 5 nm transistors will have major advantages over current technologies, especially in power consumption and processing speed.

All kinds of industries are craving lower-power chips. As the IoT explodes, embedded systems need chips that can perform advanced computations using a small battery. Experts predict that these new 5 nm chips will be able to perform today’s calculations with 75 percent power savings. That would mean mobile phones that could run for 2-3 days on a single charge.

If you’re not so concerned with saving power, you might be interested in increasing speed. Used to their maximum potential, IBM’s transistors will be able to perform calculations 40 percent faster than today’s processors. Things like machine learning and self-driving cars will become real possibilities with that kind of computing power.

So, you can either save 75 percent of your power, or process 40 percent faster. Let’s look at the applications these advantages will help the most.
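To put those two figures in perspective, here is a rough back-of-the-envelope sketch. It assumes that "75 percent power savings" applies only to the processor's share of a device's total power draw, and that "40 percent faster" means 1.4 times the throughput. Both readings are assumptions, and the function names are hypothetical, chosen only for illustration.

```python
def runtime_multiplier(chip_share: float, power_savings: float = 0.75) -> float:
    """Estimate how many times longer a battery lasts when only the
    processor's share of total power draw shrinks by `power_savings`.

    chip_share: fraction of total device power used by the processor (0 to 1).
    power_savings: fractional reduction in processor power (0.75 = 75 percent).
    """
    if not 0.0 <= chip_share <= 1.0:
        raise ValueError("chip_share must be between 0 and 1")
    if not 0.0 <= power_savings < 1.0:
        raise ValueError("power_savings must be at least 0 and below 1")
    # Power still drawn afterwards: the untouched share plus the reduced chip share.
    remaining = (1.0 - chip_share) + chip_share * (1.0 - power_savings)
    return 1.0 / remaining


def time_for_same_work(speedup: float = 0.40) -> float:
    """Fraction of the original time needed when throughput rises by `speedup`."""
    return 1.0 / (1.0 + speedup)


if __name__ == "__main__":
    # Best case: the processor is the only thing drawing power.
    print(f"chip uses 100% of power: {runtime_multiplier(1.0):.2f}x battery life")
    # Assumed case: the processor accounts for half of the device's power.
    print(f"chip uses 50% of power:  {runtime_multiplier(0.5):.2f}x battery life")
    print(f"same workload takes {time_for_same_work():.0%} of the original time")
```

Under these assumptions, the full 4x battery gain only appears when the processor dominates power draw. If the chip accounts for half of a phone's consumption, the gain is closer to 1.6x, which is roughly in line with a phone lasting 2-3 days rather than four.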

The IoT will need low-power processors, like 5 nm chips.

Artificial Intelligence

In 1950, Alan Turing proposed what became known as the “Turing Test,” in which a computer is deemed to exhibit intelligent behavior if a human interrogator cannot determine whether it is a person or a machine. Many consider this test to be proof of true artificial intelligence. However, the purpose of AI isn’t just to trick humans but to allow machines to learn from and react to novel situations. Self-driving cars will need artificial intelligence in order to drive in the unpredictable environment of the open road. AI would also be useful for IoT devices, allowing them to interact intelligently with their environment.

The problem is that no computer has passed the Turing Test yet, primarily because computers lack the processing power. AI requires neural networks running on powerful chips capable of processing huge amounts of information about their environments. Nvidia announced plans last year to make a new IC focused on machine learning, boasting 15 billion transistors. IBM’s recent discovery will double that number to 30 billion, making it a great option for deep-learning neural networks.

The Internet of Things

When I think of the IoT, I don’t think of devices that need the latest and greatest microprocessors. I think of everyday items that for some reason have Bluetooth functionality. However, what the IoT needs from IBM’s transistors isn’t the speed. It’s the power savings.

IoT devices often have to operate for days or even years on batteries. They might also use energy-gathering microelectromechanical systems (MEMS) or other schemes that harvest energy on location. Whether a gizmo is using a battery or an energy collection process, there’s usually not much juice available. Sensing and processing data take electricity. That data often also has to be transmitted over networks. 5 nm chips that use 75 percent less power will leave IoT devices more energy for sensing and data transmission.

Self-driving cars will need 5 nm’s 30 billion transistors to navigate the roads.

Autonomous Vehicles

Cars used to be almost entirely mechanical systems with only literal bells and whistles. Now experts are estimating that up to 70% of new cars on the road will be connected by 2020. However, connectivity is only one electronic aspect of next-generation cars. Along with communication, cars will also have to read large amounts of data from sensors. After the data is collected, it will be processed, and possibly sent to third parties. All of that is going to take more processing power than is currently available.

Current “autonomous” vehicles are not fully autonomous. They still need the driver to be alert and ready to take control in the event of a problem. Future advanced driver assistance systems (ADAS), like Tesla’s upgraded Autopilot, will still require attention but will give the car more control. For cars to truly drive themselves, they’ll need to incorporate a wide variety of sensors and implement machine learning algorithms. Intel estimates that these functions will require cars to process up to 1 GB of data per second. Tesla had to move to a processor 40 times faster than its previous one in order to upgrade Autopilot. To get to the next level of autonomous navigation, automakers will need chips like IBM’s.
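To get a feel for what 1 GB of data per second means over a real trip, here is a small sketch. It assumes the car sustains that peak rate for the whole drive, which is a worst-case simplification, and it uses decimal units (1 TB = 1,000 GB). The function name is hypothetical.

```python
def data_volume_gb(drive_minutes: float, rate_gb_per_s: float = 1.0) -> float:
    """Total data (in GB) a car would process over a drive, assuming it
    sustains `rate_gb_per_s` for the entire trip (a worst-case assumption)."""
    if drive_minutes < 0 or rate_gb_per_s < 0:
        raise ValueError("drive_minutes and rate_gb_per_s must be non-negative")
    return drive_minutes * 60 * rate_gb_per_s


if __name__ == "__main__":
    for minutes in (30, 60):
        total_gb = data_volume_gb(minutes)
        # Convert to decimal terabytes for readability.
        print(f"{minutes} min drive: {total_gb:,.0f} GB ({total_gb / 1000:.1f} TB)")
```

At that sustained rate, a one-hour commute works out to 3,600 GB, or 3.6 TB, of sensor data to be processed on board.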

Scientific breakthroughs always have far-reaching implications, but it’s useful to look at their short-term uses as well. While 5 nm transistors are still several years from commercial adoption, once they arrive they will come with significant advantages. The low power and increased speed of 5 nm chips will help several industries mature and realize their full potential. Artificial intelligence, the IoT, and autonomous vehicles will be three of the top beneficiaries of this technology.

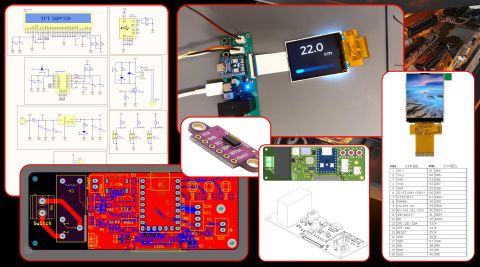

Whether processors are big or small, they all have to fit onto PCBs. Engineers who want to produce boards that use this next-generation technology will need the world-class PCB design and layout features in Altium Designer®. Users can take advantage of a single integrated design platform with circuit design and PCB layout features for creating manufacturable circuit boards. When you’ve finished your design and want to release files to your manufacturer, the Altium 365™ platform makes it easy to collaborate and share your projects.

We have only scratched the surface of what’s possible with Altium Designer on Altium 365. Start your free trial of Altium Designer + Altium 365 today.