PAM4 Vs. NRZ Modulation Techniques

If there has been one constant about products designed for the internet/networking market spaces, it’s the steady push for higher data rates and higher operating frequencies. In some cases, it can be argued that these two dynamics (data rate and frequency) are not necessarily proportional. Still, the former of these meets the criterion of being TNGT (the next greatest technology).

With software, the evolution of products is mostly straightforward (unless you happen to be the developer of the app used for tabulating caucus votes). With hardware, the dynamics are a bit more tricky, but evolution does occur. One thing that is always constant—be it software or hardware—is that the benchmark is the ability to deliver lots of data really fast.

Currently, two different signal modulation techniques are being examined for multi-gigabit Ethernet and fiber networking: traditional NRZ (non-return-to-zero) and PAM4 (four-level pulse-amplitude modulation). The primary difference in PAM4 vs. NRZ modulation is in their available data rates, but other differences are not so obvious. Let’s look at the advantages and disadvantages below and their impact on the PCB design process.

Comparison of PAM4 vs. NRZ

In a nutshell, NRZ is a modulation technique that uses two voltage levels to represent logic 0 and logic 1. The voltage literally does not “return to zero”; logic 0 is a negative voltage, and logic 1 is a positive voltage. PAM4 uses four voltage levels to represent the four combinations of two logic bits: 11, 10, 01, and 00. Lee Ritchey, Founder and President of Speeding Edge, explains, “[w]ith NRZ, you get two bits per clock cycle. With PAM4, you get four bits per clock cycle. For a given clock frequency, you double the bandwidth with PAM4. Stated another way, PAM4 is a way to pack more bits into the same amount of time on a serial channel.”
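To make the two-level vs. four-level mapping concrete, here is a minimal sketch. The normalized level values and the Gray-coded ordering of the PAM4 bit pairs are assumptions chosen for illustration; they are not taken from Ritchey or from a specific standard.

```python
# Illustrative symbol mapping; level values and bit-pair ordering are assumptions.
NRZ_LEVELS = {0: -1.0, 1: +1.0}

# Four levels share the same overall swing; adjacent levels differ by one bit (Gray code).
PAM4_LEVELS = {(0, 0): -1.0, (0, 1): -1 / 3, (1, 1): +1 / 3, (1, 0): +1.0}


def nrz_encode(bits):
    """One symbol per bit."""
    return [NRZ_LEVELS[b] for b in bits]


def pam4_encode(bits):
    """One symbol per pair of bits."""
    if len(bits) % 2:
        raise ValueError("PAM4 needs an even number of bits")
    return [PAM4_LEVELS[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]


bits = [1, 1, 1, 0, 0, 1, 0, 0]
nrz_symbols = nrz_encode(bits)
pam4_symbols = pam4_encode(bits)
print(len(nrz_symbols), "NRZ symbols vs.", len(pam4_symbols), "PAM4 symbols")
```

The same eight bits take eight symbol slots in NRZ but only four in PAM4, which is the doubling Ritchey describes.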

Based on the foregoing, the benefits of PAM4 seem relatively straightforward on the surface. They include:

- Doubling the density of the data

- Achieving higher bandwidth for the same data path

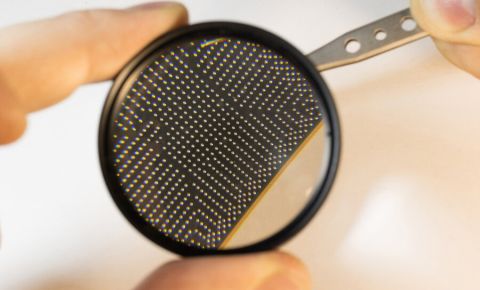

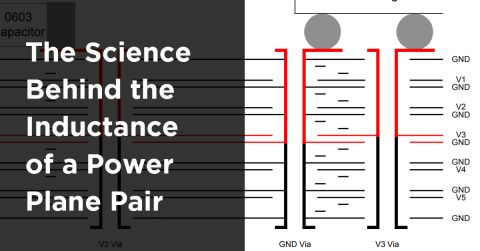

Ritchey notes, “For the same data rate, with NRZ, you have to have a wider bandwidth channel because you have to be running twice the clock frequency. That’s the real carrot of PAM. For a given channel, you can put twice the data through for the same clock frequency and channel bandwidth.” The image below shows an example of the frequency content in PAM4 vs. NRZ signals at 56 Gbps.
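The relationship Ritchey describes can be sketched with a little arithmetic. The function names below are hypothetical, and the Nyquist frequency (half the symbol rate) is used here as a rough stand-in for the channel bandwidth each scheme needs.

```python
import math


def symbol_rate_gbaud(bit_rate_gbps, levels):
    """Symbol rate needed to carry bit_rate_gbps with the given number of levels."""
    bits_per_symbol = math.log2(levels)  # 1 for NRZ, 2 for PAM4
    return bit_rate_gbps / bits_per_symbol


def nyquist_ghz(bit_rate_gbps, levels):
    """Nyquist frequency: half the symbol rate."""
    return symbol_rate_gbaud(bit_rate_gbps, levels) / 2.0


for name, levels in (("NRZ", 2), ("PAM4", 4)):
    print(name, symbol_rate_gbaud(56, levels), "GBd,", nyquist_ghz(56, levels), "GHz Nyquist")
```

At 56 Gbps, NRZ needs a 56 GBd symbol rate (28 GHz Nyquist), while PAM4 needs 28 GBd (14 GHz Nyquist).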

Let’s compare some fundamental metrics in these two modulation schemes:

Signal-to-noise Ratio (SNR)

Normalizing the x-axis by the bit rate of each signal shows how the bandwidths and power spectral densities of the two signal types compare. This reveals one downside of PAM4 vs. NRZ: each PAM4 eye has 1/3 the amplitude of an NRZ eye with the same clock rate and voltage swing, which gives a ~9.5 dB decrease in SNR for PAM4.
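The ~9.5 dB figure follows from the amplitude ratio alone. As a quick check, assuming both signals share the same peak-to-peak swing and the same noise floor (the function name is hypothetical):

```python
import math


def eye_amplitude_penalty_db(levels):
    """SNR penalty from splitting a fixed swing into (levels - 1) stacked eyes."""
    return 20.0 * math.log10(levels - 1)


print(round(eye_amplitude_penalty_db(4), 2))  # 9.54
```

With four levels there are three eyes, so each eye is 1/3 as tall and the penalty is 20·log10(3), or about 9.54 dB.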

Intersymbol Interference (ISI) and Bit Error Rate (BER)

Because the voltage levels are more tightly spaced in PAM4 than in NRZ, a PAM4 signal is more susceptible to ISI than an NRZ bitstream at the same clock rate. The BER in a PAM4 channel can be larger due to random and deterministic noise induced along the link and in the Rx/Tx transceivers. Noise in the channel and transceivers causes the voltage levels to fluctuate, while jitter at the Tx end and random signal distortion cause timing fluctuations. Overcoming ISI and reducing BER in a PAM4 channel requires equalization at the Rx end and pre-compensation at the Tx end (depending on the link length).
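To see how much the tighter level spacing matters, here is a rough sketch using the textbook symbol error rate for M-level PAM in additive Gaussian noise. It deliberately ignores ISI, jitter, and equalization, and the swing and noise values are made-up illustration numbers, not measurements.

```python
import math


def q_function(x):
    """Tail probability of a standard normal distribution."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))


def symbol_error_rate(levels, swing_pp, noise_rms):
    """Symbol error rate for equally spaced levels in Gaussian noise."""
    spacing = swing_pp / (levels - 1)  # distance between adjacent levels
    return 2.0 * (1.0 - 1.0 / levels) * q_function(spacing / (2.0 * noise_rms))


swing, sigma = 1.0, 0.05  # hypothetical 1 V swing, 50 mV RMS noise
nrz_ser = symbol_error_rate(2, swing, sigma)
pam4_ser = symbol_error_rate(4, swing, sigma)
print(f"NRZ: {nrz_ser:.2e}  PAM4: {pam4_ser:.2e}")
```

With identical swing and noise, the PAM4 error rate comes out many orders of magnitude higher than the NRZ one, which is why PAM4 links lean on equalization and pre-compensation.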

Power Consumption

Equalization at the Rx end and pre-compensation at the Tx end in a PAM4 link both consume more power than an NRZ link would at a given clock rate. This means PAM4 transceivers generate more heat at each end of the link.

In Theory Vs. In Practice

So, what’s the impetus for PAM4? Ritchey states, “People are mistaken when they consider PAM4 to be ‘new technology’. It’s actually been around for some time. Every time we think we have hit the limit in terms of how fast we can clock a data path, PAM4 has been put forth as the solution to get more bandwidth without having a higher quality channel.”

“In every instance where PAM4 has been brought in, it was because we couldn’t make the semiconductors go faster,” Ritchey continues. “But in each instance, we have managed to make the ICs go faster, so PAM4 as a widely-adopted modulation technique has never really made it before.” With 56 Gbps NRZ, the bit period is roughly 18 picoseconds, or about 20 picoseconds in round numbers.

Ritchey explains, “You have to go up and back down in 20 picoseconds. If you want to go twice as fast, you would have to do it in 10 picoseconds. At the moment, I don’t think you can have that kind of clock period in an IC even if the bandwidth in the channel is there. Let’s face it; a 20 picosecond bit period is not very much. It’s difficult to imagine that you can achieve a 10 picosecond clock period in an IC. That’s why PAM4 is gaining so much attention.”
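The bit periods in question are easy to compute. This sketch (with a hypothetical helper name) shows that the 20 and 10 picosecond figures quoted above are round numbers, and that PAM4 at 112 Gbps keeps the same symbol period as 56 Gbps NRZ.

```python
def unit_interval_ps(symbol_rate_gbaud):
    """Duration of one symbol in picoseconds for a symbol rate in GBd."""
    return 1000.0 / symbol_rate_gbaud


for label, gbaud in (("56 Gbps NRZ", 56), ("112 Gbps NRZ", 112), ("112 Gbps PAM4", 56)):
    print(f"{label}: {unit_interval_ps(gbaud):.1f} ps")
```

The exact values are about 17.9 ps at 56 GBd and 8.9 ps at 112 GBd.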

In some circles, PAM4 is being touted as the way to get to 400 Gbps. Ritchey points out, “We already have that without PAM4. 400 Gbps is eight channels of 56 Gbps of NRZ. PAM4 is being touted as the way to get to 800 Gbps.”

A challenge in using PAM4 is that it does not work well over long distances because of loss, and loss increases with distance.

“This year at DesignCon, they demonstrated 112 Gbps PAM4 over three or four feet of twinax, which is low loss. But you can’t go very far in a printed circuit board because you can’t get that kind of loss number. A .0005 loss number is almost a vacuum. The best anybody is doing right now is .0015 in a board. I don’t see it getting much better than that. We can’t do much more with laminate materials. So, the solution is on the component side,” Ritchey notes.
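To get a feel for how the loss number and trace length interact, here is a rough sketch of dielectric loss only. It assumes the “loss numbers” quoted above are loss tangents, uses a hypothetical dielectric constant of 3.3, and ignores copper (skin-effect and roughness) loss, so it understates total channel loss.

```python
import math

C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def dielectric_loss_db_per_inch(freq_ghz, loss_tangent, dk):
    """Approximate dielectric attenuation of a transmission line in dB per inch."""
    alpha_np_per_m = math.pi * freq_ghz * 1e9 * math.sqrt(dk) * loss_tangent / C_M_PER_S
    db_per_np = 20.0 / math.log(10.0)  # about 8.686 dB per neper
    return alpha_np_per_m * db_per_np * 0.0254  # 0.0254 m per inch


DK = 3.3  # hypothetical laminate dielectric constant
for tan_d in (0.0005, 0.0015):
    per_inch = dielectric_loss_db_per_inch(28.0, tan_d, DK)
    print(f"tan d = {tan_d}: {per_inch:.3f} dB/inch, {per_inch * 20:.2f} dB over 20 inches")
```

Loss in dB scales linearly with both the loss tangent and the length, so tripling the loss number triples the dielectric loss over the same trace.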

He continues, “However, I am still surprised at how good we have gotten with low loss laminate materials because, previously, glass was the limiting factor. Somebody has figured out how to formulate a glass you can spin without having polarized molecules. This is one place where the loss in laminates comes from. It’s also what’s happened with resin systems. Paraffin is about as good as you can get because it has no polarized molecules. But it can’t be used because it has no mechanical strength. We have been able to make more complex molecules that are not polarized. The molecules at DesignCon were way more complicated than Teflon.”

Driving the Market

Given the foregoing, it may be difficult to determine the current impetus behind PAM4.

Ritchey explains, “It might happen that 56 Gbps PAM4 will be commercial. This means that you get 112 Gbps per channel. But, it can’t happen over very long distances. So, where are you going to use it? Server farms. That’s where all the large amounts of data are being transferred at very high rates.”

That said, it seems that the real driver behind the PAM4 movement is Gen5 (aka 5G). The whole idea of 5G is to replace all of the wireless nodes that would normally be in an environment such as a home or office. 5G would enable the use of a cell phone such that all of the local wireless networks would no longer be necessary.

Ritchey clarifies, “With 5G, making the wireless connection is as simple as making a phone call. For instance, if you are in a location where you want access to an entity’s wireless network, you would no longer need a wireless router to do so.”

But, Ritchey continues, “In order to [get] 5G to work, you will have to end up putting lots of microcells around. That won’t happen unless it’s in dense population (urban) areas.”

Board Design Considerations

So, what does all the foregoing mean to the PCB designer? In a nutshell, it doesn’t change their job at all. With PAM4, you still need the same control over fiber weave effects and low-roughness copper finish, and you will use the same low-loss laminates used for NRZ. In addition, transmission lines will look the same, although you need to account for twice the data throughput over the same wire in PAM4. As noted earlier, the downside is that the SNR is lower than for NRZ because there is less amplitude to work with. However, none of this impacts the board designer; the need for pre-compensation and equalization pushes the responsibility for signal recovery and noise reduction onto the chip designer.

Ritchey points out, “The more complex and difficult hardware required in PAM4 refers to the silicon, not the board. But, there’s nothing new about that. We have been making silicon more complex for years.”

“People think they have run out of gas with the silicon,” notes Ritchey. “That’s what’s given rise to PAM4. The question is, how do you get into and out of a device with a rise time of 5 picoseconds (which is the rise time needed for 112 Gbps PAM4)? I am not about to say that this can’t be achieved with ICs because I have already been proven wrong a whole bunch of times already.”

He continues, “Making a bit period that is only 10 picoseconds is a tall order. I’m sure that the component people are trying to do this, but it means that the transistors have to get even smaller.” Ritchey concludes, “The main proponents for PAM4 at DesignCon were companies selling scopes and other test equipment. Connector vendors were showing 800 Gbps PAM4, but there is not much magic in that. It’s the same connectors being used for 400 Gbps. The difference is not with the connectors, it’s the wires in between.”

Summary

As we attempt to go ever faster and pack more bandwidth into Internet/networking devices, several things have to happen in hardware. Achieving the roughly 10 picosecond bit periods needed for 112 Gbps NRZ may not be possible by changing the silicon. That’s what’s given rise to the current enthusiasm for using PAM4 vs. NRZ as a modulation technique.

