Gigabit Ethernet 101: Basics to Implementation

During the system-level planning phase of any major hardware project, at least one Ethernet communication link is often included as a standard option, and it is this on-board Ethernet interface that we are going to discuss in depth. In my Altium community, the question of how to implement Ethernet comes up every few months. It is often met with generic answers about impedance, but nobody has a comprehensive resource to link to that covers everything from the ground up. If you’re ready to add Ethernet, especially gigabit Ethernet, to your circuit design and need to get up to speed, this guide is what you are looking for.

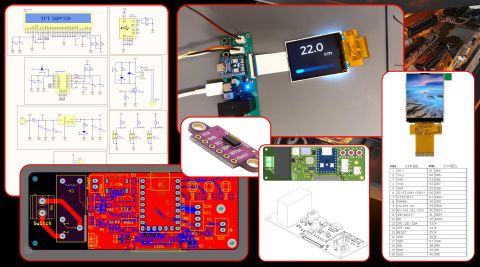

Before we dive in, note that this isn’t a project article: we won’t be building out a full solution here. However, I know everyone loves a schematic to look at rather than just pages of technical information, so I have added an example schematic on GitHub with an implementation of the Microchip KSZ9131RNX gigabit Ethernet transceiver PHY. We’ll get into what a PHY is later; for now, having a schematic to refer to can make it easier to apply what the article describes. There are screenshots of these schematics in this article, though they are much easier to view in Altium than as images in the blog.

The Ethernet protocol was standardized in the 1980s and rapidly evolved from speeds of 10 Mbit/s to 10 Gbit/s and beyond. With today’s technology, Fast Ethernet (100BASE-TX) and Gigabit Ethernet (1000BASE-T) are both reasonably standard when twisted-pair copper cable is used as the physical transmission medium. If fiber-optic cables are preferred, communication bandwidths of more than 10 Gbit/s may be achieved. Note that these transmission rates are theoretical maximums. There will always be bottlenecks that limit practical throughput, such as the controller and/or processor speed, as well as signal-integrity problems caused by imprecise PCB routing (including crosstalk, impedance mismatch, and excessive trace length). We’ll get into PCB layout and routing considerations towards the end of the article, once we understand how gigabit Ethernet works and which circuit components it requires.

You may already have some idea about implementing gigabit Ethernet; perhaps you have even built a working gigabit Ethernet interface, or this may be the first time you have dived into high-speed digital interface design. This article is intended as a guide for designers, from the theoretical basics to the practical aspects of schematic and layout design. Even if you are an expert in digital interfaces, it may be useful as a checklist or a refresher on the theory. To aid readability, some blocks or components are mentioned before they are described; these gaps are filled in later sections.

Gigabit Ethernet Basics

Before jumping straight into the hardware design, it may help to have a brief insight into what kinds of data travel from the real world to the controller, from the network’s perspective. The generic seven-layer OSI model is widely used to describe the structure of communication protocols, and the Ethernet-based network model (with Ethernet itself defined by the IEEE 802.3 standard) combines some of the OSI layers into just four, as can be seen in Figure 1.

The hardware designer’s areas of interest are the “Physical Layer” (Ethernet PHY) and the “Data Link Layer,” while the other layers are primarily of interest to firmware developers, network stack library authors, application developers, and cyber-security experts. By definition, Ethernet data carried over a twisted-pair copper cable belongs to the physical layer until it reaches a device. In the data link layer, the data is organized into frames in a format that the network stack embedded in the controller can understand. In simple terms, the physical layer is analogous to the roads and trucks that carry the mail, while the data link layer corresponds to the envelope carrying the address information needed to distinguish each item of mail from another. We will explain in more detail how these network layers correspond to IC-level blocks later in the article.

Why Choose Gigabit Ethernet?

Looking at the history of the Ethernet protocol, the significant speed improvement that comes with each new generation stands out. Given the speed and bandwidth capabilities of circuit board hardware, the clear choice of generation for a modern design is gigabit Ethernet. If you consider a different medium instead, say WiFi to avoid the need for Ethernet cables, there are advantages and disadvantages compared with Ethernet, as the following points show.

- Speed: The maximum theoretical speed of WiFi operating to the IEEE 802.11g standard is 54 Mbps, well below 100 Mbps Ethernet, let alone gigabit Ethernet. However, WiFi operating to the IEEE 802.11ac standard offers theoretical speeds of up to 3.2 Gbps, more than three times faster than gigabit Ethernet. Note that the WiFi adapters and access points must all be 802.11ac-compatible for communications to reach that kind of speed. In practice, the theoretical speed of a WiFi link is rarely achievable, as we seldom have a perfect line of sight between devices.

- Reliability: Wired connections may be routed as a kind of point-to-point network, and unless there are cable breaks or socket faults, interruptions to network traffic are unlikely. This makes wired network operation highly consistent in terms of speed and latency. WiFi, on the other hand, is susceptible to interference from other wireless devices as well as signal degradation due to atmospheric conditions and obstructions such as building walls. Even a change in humidity can affect the link speed, as atmospheric moisture attenuates the wireless signal. These reliability issues also widen the gap between theoretical and practical speeds, which is far more noticeable with WiFi.

- Security: WiFi transmits its traffic through the air, meaning that any receiver within range can easily capture it unless the traffic is encrypted with a known-secure algorithm. Your traffic may be safer on a wired connection, where undetected interception is more challenging.

- Ease of Usage: If you do not like being limited to using cables, or you are operating in a location where laying cables is a problem, then choosing WiFi can make network connectivity more freely available.

Except when designing IoT devices, a hardware designer will often use an Ethernet interface to communicate with other systems, particularly for transferring large volumes of monitoring data and files. The reliability and speed of Ethernet are difficult to match, and they simplify engineering decisions and the development of circuit board hardware and firmware. A wired connection also offers another advantage: certification costs can be much lower when the device does not transmit radio signals, as it can then be certified as an unintentional radiator.

You might be wondering: what about using a USB interface instead of an Ethernet connection? Both use wired connections, and with the recent evolution of USB technology, USB 3.x interfaces have speed ratings similar to or higher than gigabit Ethernet (USB 3.1: ~10 Gbps). Should we replace all Ethernet equipment with USB 3.x, then? Before making your choice, consider whether you are happy to settle for the following:

- Shorter cable lengths (a couple of meters instead of ~100 meters for Ethernet)

- Point-to-point connection instead of multi-point connection

- USB doesn’t offer typical networking, so pushing data to a remote web/database/file server is a challenge.

If you can live with those restrictions, then why not give USB 3.x a try instead of Ethernet? These restrictions are not intended to denigrate USB 3.x technology; whether you choose USB or Ethernet depends on your specific application requirements.

For Ethernet, a game-changer is the use of an optical communication link instead of copper cable, an option that extends nearly all of the speed, latency, and cable-length limits. However, fiber gigabit Ethernet is a subject for another time and will not be covered in this article.

Ethernet is a very convenient technology, allowing direct access to standard networking protocols and systems. If the network your device is attached to has internet access, pushing data to remote servers such as cloud providers is relatively trivial from a software/firmware perspective. Ethernet allows you to leverage existing infrastructure. WiFi offers a lot of convenience but comes with risks and penalties that may or may not be acceptable for your application. USB is a ubiquitous standard available on many devices; however, your device needs to be close to a host or client device, which will typically need custom software installed to communicate with the product you are developing. Ethernet is not always the right answer, but it’s often a good one.

Diving into the RJ-45 Connector

Since time immemorial, RJ-45-type sockets and plugs with twisted-pair copper cables have been used for Ethernet interfaces. The most common cable structure is “Unshielded Twisted Pair (UTP),” which is categorized from Category 1 (Cat1) to Category 8 (Cat8) according to the maximum frequency it supports. This rated frequency limits the achievable transmission speed, so cable rated Cat5 or higher should always be used for gigabit Ethernet.

Tip: Pay attention when selecting an RJ-45 socket for your PCB, as some sockets have a low-profile option that requires a board cut-out under the connector. Also, note that some RJ-45 jacks (sometimes called MagJack connectors) integrate the required Ethernet magnetics and termination circuit (known as Bob Smith termination) into the connector.

As can be seen in Figure 2, UTP cables have four twisted pairs, and each pair carries one positive and one negative signal. While 10/100 Mbps Ethernet uses only two pairs, Gigabit Ethernet uses all four, and unlike Fast Ethernet, every one of those pairs is bi-directional, carrying full-duplex traffic. At this point, two questions are likely to have sprung to mind: why are the pairs twisted, and why is there one positive and one negative signal in each pair?

The short answer is that both features reduce the effects of electromagnetic radiation and interference. Parallel (untwisted) conductors in a bundle can easily inject noise into each other, as each current-carrying conductor acts as an inductor and creates a magnetic field. Differential transmission is an excellent starting point for preventing this magnetic coupling: it uses two conductors, one carrying the original signal and one carrying an inverted copy, so their equal and opposite currents create magnetic fields that cancel each other out.

Although differential receivers reject common-mode noise by design, if the positive and negative conductors are not equidistant from the noise source, part of the common-mode noise can be converted into differential-mode noise. Twisting the positive and negative conductors together solves this problem by keeping them close to each other along the entire length of the cable. A PCB variation of this technique, differential pair routing, is widely used in layout for critical signals.
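To make the last two paragraphs concrete, here is a toy Python model. It is a sketch under simplifying assumptions, not an electromagnetic simulation: drive levels of ±1, a noise source coupling onto each conductor through hypothetical coefficients `k_pos` and `k_neg`, and a cable split into equal segments whose conductors swap places in every segment when twisted. The receiver only ever sees the difference between the two conductors.

```python
def receive(bit: int, noise: float, k_pos: float, k_neg: float) -> float:
    """Differential receiver output for one symbol.

    k_pos and k_neg are hypothetical coupling coefficients of a noise
    source onto the positive and negative conductors.
    """
    level = 1.0 if bit else -1.0
    v_pos = level + k_pos * noise    # original signal plus its share of the noise
    v_neg = -level + k_neg * noise   # inverted copy plus its share of the noise
    return v_pos - v_neg             # = 2*level + (k_pos - k_neg) * noise


def differential_noise(segments: int, near: float = 0.75, far: float = 0.25,
                       twisted: bool = True) -> float:
    """Average differential-mode noise per segment (in units of noise amplitude).

    Toy model: in every segment the conductor nearer the noise source picks
    up `near` x noise and the farther one picks up `far` x noise.
    """
    total = 0.0
    for i in range(segments):
        # Untwisted: the positive conductor is always the nearer one.
        # Twisted: the two conductors swap positions every segment.
        pos_is_near = (i % 2 == 0) if twisted else True
        k_pos, k_neg = (near, far) if pos_is_near else (far, near)
        total += k_pos - k_neg
    return total / segments


print(receive(1, noise=5.0, k_pos=0.5, k_neg=0.5))    # equal coupling
print(receive(1, noise=5.0, k_pos=0.75, k_neg=0.25))  # unequal coupling
print(differential_noise(8, twisted=False))
print(differential_noise(8, twisted=True))
```

With equal coupling the noise term vanishes and only the doubled signal remains; with unequal coupling a residual appears, and in this model twisting averages it back to zero over an even number of segments.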

Another problem in high-speed communications is signal reflection. At any impedance mismatch along the signal path, not all of the power is transferred beyond that point; some of the signal energy is reflected back towards the source. If the impedance of long cables and/or PCB traces is not well-matched, the signal quality can degrade to the point of communications failure.
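The standard transmission-line result quantifies this: the reflection coefficient at a junction is Γ = (Z_L - Z_0)/(Z_L + Z_0), the fraction of power reflected is Γ², and return loss is -20·log10|Γ| dB. The sketch below assumes the 100-ohm characteristic impedance of a UTP pair as Z_0; the 90- and 120-ohm loads are just illustrative mismatches.

```python
import math


def reflection_coefficient(z_load: float, z0: float = 100.0) -> float:
    """Voltage reflection coefficient where a line of impedance z0 meets z_load."""
    return (z_load - z0) / (z_load + z0)


def return_loss_db(z_load: float, z0: float = 100.0) -> float:
    """Return loss in dB; infinite for a perfect match."""
    gamma = abs(reflection_coefficient(z_load, z0))
    return math.inf if gamma == 0 else -20 * math.log10(gamma)


for z in (100.0, 90.0, 120.0):
    g = reflection_coefficient(z)
    print(f"{z:5.0f} ohm: gamma = {g:+.3f}, reflected power = {100 * g * g:.2f} %, "
          f"return loss = {return_loss_db(z):.1f} dB")
```

A 10% impedance error reflects only a fraction of a percent of the power, but on long runs and at gigabit symbol rates these reflections add up, which is why the PCB traces are held to the same 100 ohms as the cable.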

In summary, a UTP cable has four balanced twisted pairs with a 100-ohm characteristic impedance to reduce reflections, and each pair is twisted at a different rate to reduce crosstalk between pairs. Cable manufacturers take care of the cable itself; this article will guide you through getting the PCB layout right so that noise and signal loss are kept to a minimum.

Interpreting Ethernet Signals in the PCB

Even when we are talking about high-speed digital interfaces, it would not be incorrect to say the real world is an “analog” environment. All information traveling along a cable must be suitably digitized according to the required controller protocol, regardless of its architecture. Remembering the OSI Model and the layers for the Ethernet protocol, the first is the “Physical Layer” (PHY), which starts from the cable and continues until a modulated real-world signal is received/transmitted by the PHY IC device. The PHY IC is the transceiver of the Ethernet interface that handles encoding/decoding operations according to the protocol and includes the “Medium-Dependent Interface (MDI)” for the connected transmission medium (i.e., the UTP cable in the case of Gigabit Ethernet).

The second layer is the “Data Link Layer,” which is implemented in the Media Access Controller (MAC), the intermediate controller between the PHY and the microprocessor whose firmware includes the network stack. After the PHY has processed the signal bits, it passes them directly through the “Media Independent Interface (MII)” to the MAC, which creates and validates the frame structure according to the protocol. In short, the PHY uses the MDI for the RJ-45 connection and the MII for the interface to the MAC.

The hardware designer usually has three options when implementing a Gigabit Ethernet interface into their system:

- Option 1: RJ-45 ↔ Discrete PHY IC ↔ Discrete MAC IC ↔ MCU/MPU/FPGA

- Option 2: RJ-45 ↔ Discrete PHY IC ↔ MCU/MPU/FPGA with integrated MAC

- Option 3: RJ-45 ↔ Ethernet Controller/Bridge (PHY and MAC) ↔ MCU/MPU/FPGA

Since the data-throughput requirements of Gigabit (1/10+ Gbps) Ethernet interfaces are so high, processing units that do not have an integrated MAC require a high-speed bus such as PCI, PCIe, USB 3.x, or a 16-/32-bit parallel bus. Most high-end microprocessors and Systems-on-Chip (SoCs) (for example, NXP i.MX6 and i.MX8, Xilinx Zynq-7000 SoC, TI Sitara) have an integrated gigabit MAC to handle high-bandwidth data transfer into the network stack internally. In contrast, some mid-range MCUs (for example, ST STM32F4 and many other ARM Cortex series, or Microchip PIC32M) have an embedded 10/100 Mbps Ethernet MAC. Although we have listed a third option, it isn’t easy to find a Gigabit PHY and MAC combination in a single package, so we have included this option mainly for completeness; examples available on the market include the Microchip LAN7430 and LAN7850. The Intel 825xx series is another option, but generic suppliers do not stock these, and availability is subject to Minimum Order Quantities (MOQ) and Non-Disclosure Agreements (NDA). For 10/100 Mbps-class interfaces, on the other hand, many such devices, like the ENC28J60, ENC424J600, and W5100/W5500, are found on hobby-level electronic boards, interfacing over a Serial Peripheral Interface (SPI) bus.

Generally speaking, the second option is preferred whenever the processing unit has enough MAC interfaces for the number of gigabit ports the design requires. If the processor has fewer MAC interfaces than needed, an Ethernet switch IC may solve the problem, provided all the Ethernet interfaces operate at the same network confidentiality level; defense applications, for example, may require physical separation of the interfaces for security reasons. Based on the information covered so far, our design example uses a discrete PHY and an integrated MAC.
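The option-selection reasoning above can be summarized as a small decision function. This is only a sketch: the `Processor` fields and the returned option strings are hypothetical, and a real decision also weighs cost, availability, and the security constraints just mentioned.

```python
from dataclasses import dataclass


@dataclass
class Processor:
    """Hypothetical summary of a processing unit's Ethernet-related resources."""
    gigabit_macs: int     # integrated gigabit-capable MAC interfaces
    high_speed_bus: bool  # PCI/PCIe, USB 3.x or a 16-/32-bit parallel bus available


def choose_option(cpu: Processor, ports: int, same_security_level: bool = True) -> str:
    """Pick one of the three architectures listed above for `ports` gigabit ports."""
    if cpu.gigabit_macs >= ports:
        return "Option 2: discrete PHY per port + integrated MAC"
    if cpu.gigabit_macs >= 1 and same_security_level:
        # Too few MACs, but all ports may share one network: add a switch IC
        return "Option 2 + Ethernet switch IC"
    if cpu.high_speed_bus:
        # Not enough usable integrated MACs: external MAC over a fast bus
        return "Option 1 (discrete MAC) or Option 3 (PHY+MAC controller)"
    return "No gigabit path: choose a different processor"


print(choose_option(Processor(gigabit_macs=1, high_speed_bus=True), ports=1))
print(choose_option(Processor(gigabit_macs=1, high_speed_bus=True), ports=3))
print(choose_option(Processor(gigabit_macs=1, high_speed_bus=True), ports=3,
                    same_security_level=False))
```

The ordering of the checks mirrors the text: the integrated-MAC route is tried first, a switch IC is only acceptable when the ports share a confidentiality level, and the external-MAC routes depend on a suitable high-speed bus.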

Before going any further, let’s look at some popular discrete Gigabit PHY and MAC ICs that distributors generally stock. The specific selection criteria and features will be covered in the following sections. The KSZ9031, KSZ9131, VSC8211, and VSC8501 (all from Microchip/Microsemi), ADIN1300 (Analog Devices), MAX3956 (Maxim), and DP83867 (Texas Instruments) are all commonly stocked Gigabit PHY chips. Broadcom is another gigabit PHY manufacturer (BCM546x and BCM548x series), but these parts are generally non-stocked, with a high MOQ and/or a long lead time.

The LAN7431 and LAN7801 (Microchip) and the BCM5727 and BCM5720 (Broadcom) are all Gigabit MAC controller ICs that are available on the market.

Tip: Pay attention to the environmental ratings of the integrated circuits when you make your selection. Double-check your needs in terms of operating temperature, RoHS compliance, and moisture sensitivity, in addition to electrical requirements such as I/O voltage levels, device footprint, etc.

Magnetics

Up to this point, we have said that Ethernet data travels via the UTP cable, through the RJ-45 connector, and is then transferred via the MDI to the PHY. However, the IEEE 802.3 Ethernet standard states that the PHY must be galvanically isolated from the transmission medium. There are two fundamental reasons for this requirement. The first is the possible ground offset between devices located far away from each other. The second is to protect all devices from line faults such as a short to a high-voltage rail, a surge spike, or an ESD strike. Although the Ethernet standard does not strictly define the isolation method, the usual candidates are a transformer or an optoisolator. Transformer isolation, however, has significant advantages in Ethernet applications and is widely used in circuit designs. The benefits of using a 1:1 isolation transformer are:

- There is no need for a voltage supply on the isolated side since the signal is directly transferred over the transformer.

- Ethernet signals (even 10 Mbps) are too fast for most optoisolators, and transformers are cheaper and easier to obtain.

- By their very nature, transformers have a very high Common-Mode Rejection Ratio (CMRR), which makes them a perfect fit for differential communications. Any common-mode voltage applied to both terminals of the transformer is rejected, and only a differential voltage between terminals is transferred across to the isolated side.

- MDI pairs are impedance-controlled, balanced differential pairs (Z0 = 100 Ω), so they must be strictly matched to the characteristic impedance of the twisted-pair cable. If the cable pairs have a different impedance from the MDI pairs, a transformer (with an appropriate turns ratio) is the ideal point to overcome the mismatch, allowing the signal to be transferred without reflections. Also, as we will discuss in the following sections, some PHY transceivers may be based on unbalanced MDI pairs, and transformers are ideal for use as a BALUN (BALanced-to-UNbalanced) converter.

- High isolation voltage protection (the standard requires immunity to 1500 VAC at 50/60 Hz for 60 seconds between pairs or from one pair to chassis ground) is easily obtained when using magnetic isolation, which protects the PHY side from the effects of ESD strikes.

A couple of disadvantages of using a transformer are that it blocks the DC component and is not very efficient at low frequencies. However, both are easily addressed by the Ethernet modulation scheme, which keeps the line signal largely DC-balanced, and by selecting a transformer that meets the chosen Ethernet standard’s requirements.

After deciding on the transformer option and doing a brief supplier search, the first question you are likely to have is whether to use discrete magnetics or a connector with integrated magnetics. Unfortunately, there is no perfect answer, and the trade-off between these options needs to be analyzed in detail by the designer. The two options are compared in Table 1 below (bold text denotes the winner).

Table 1. The trade-off between Discrete and Integrated Magnetics

| Criterion | Discrete Magnetics | Integrated Magnetics w/RJ-45 |
|---|---|---|
| Cost | More expensive due to using more components. | **Cheaper, as the BOM item count is lower.** |
| Assembly | More complex, with more soldered parts. | **Just the connector, which is then ready to use.** |
| Layout | More complex; a poor layout may negate the electrical advantages of discrete magnetics. | **More straightforward, with less risk of the layout being wrong.** |
| Maintenance | **Failed parts may be troubleshot and replaced separately.** | In the event of a failure, the entire connector needs to be replaced, so it may be more expensive to maintain in the long term. |
| Crosstalk/EMC and ESD | **With a good layout, crosstalk between pairs can be reduced to near zero. As the magnetics create an isolated domain, ESD strikes are handled in a limited area of the PCB before reaching the PHY side.** | Although the connector’s metal shielding provides some EMC advantages, it is more susceptible to crosstalk between pairs, and a voltage spike from an ESD strike may couple to the PHY MDI pairs more easily since the transformer is located within a small area. |
| PHY compatibility | **Compatible with all PHYs, as all connections are routed separately to pins.** | Some center-tap connections may be ganged together and routed to a single pin to reduce the pin count, which can degrade performance. |

In light of the information provided, it’s up to the designer to select the best fit for their particular application. Note that, in our experience, if there are any reliability and/or safety requirements (such as MTBF or FME(C)A requirements in the automotive and defense industries), discrete magnetics are usually the better choice. For mass-produced commercial projects and hobby-level electronics, integrated magnetics are a perfect fit, as they reduce costs and simplify the design process. We will use discrete magnetics in our design example; their internal structure, selection criteria, and connection diagrams are described below.

First, the selected magnetics should have a transformer block for each of the four pairs used in Gigabit Ethernet applications. Also, even though it is not mandatory, adding a common-mode choke (CMC) to increase common-mode noise immunity is always a good option. Although differential receivers on their own are good at rejecting common-mode (CM) noise, a CMC improves the signal-to-noise ratio and, as a result, the bit error rate on the receiver side. On the transmitter pairs, the CMC reduces EM emissions caused by CM noise coupled onto the PHY MDI pairs. Another optional component in the magnetics is an auto-transformer, which presents a high-impedance path to the differential Ethernet signals and a low-impedance path to CM signals.

To summarize, as shown in Figure 5 above, the Ethernet magnetics available on the market always include a 1:1 isolation transformer and a common-mode choke. The easy part of the selection process is to check that the winding tolerance is less than ±5% and to verify that the isolation voltage, operating frequency, CMRR, and crosstalk figures all meet the IEEE 802.3 requirements for Gigabit Ethernet.

Whether to select magnetics with an auto-transformer is another trade-off for the designer; compliance with the system-level EMI/EMC requirements and with any regulatory requirements, such as those of the FCC, are both vital factors here. The 12-winding magnetics option increases cost but decreases the risk of failing EMC tests. The 8-winding option is cheaper and allows a good layout design, but the risk of EMC test failure may need to be mitigated. It is good practice to select the 12-winding option if the Ethernet interface is part of a digital system that generates a lot of noise. If an 8-winding part is desired in such circumstances, consider placing the CMC on the cable side for better EMI performance (connecting it the other way around will also work). Where a 12-winding part is selected, the auto-transformer should be connected to the cable side for correct operation.

Pulse Electronics, Bel Fuse, Halo, Bourns, and TDK are all generally stocked magnetics manufacturers. To avoid confusion when reading a datasheet, note that pin labels starting with “Mx” are typically intended to be connected to the “media” (cable) side, and pin labels beginning with “Td” to the PHY side.
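The quick checks described above can be captured as a small review script. This is a sketch: the `Magnetics` fields and the example part are hypothetical, the thresholds come from this article (four transformer blocks, winding tolerance below ±5%, 1500 VAC isolation), and the operating frequency, CMRR, and crosstalk figures still have to be compared against IEEE 802.3 by hand. It needs Python 3.9+ for the `list[str]` annotation.

```python
from dataclasses import dataclass


@dataclass
class Magnetics:
    """Hypothetical datasheet summary for a candidate magnetics part."""
    part: str
    pairs: int                    # transformer blocks (gigabit needs all four)
    windings: int                 # 8 = transformer + CMC per pair; 12 adds an auto-transformer
    winding_tolerance_pct: float  # +/- tolerance in percent
    isolation_vac: float          # isolation rating (1500 VAC, 50/60 Hz, 60 s)


def review(m: Magnetics, noisy_system: bool) -> list[str]:
    """Return a list of concerns; an empty list means the quick checks pass."""
    issues = []
    if m.pairs < 4:
        issues.append("needs a transformer block for each of the four pairs")
    if m.winding_tolerance_pct >= 5.0:
        issues.append("winding tolerance is not below +/-5%")
    if m.isolation_vac < 1500:
        issues.append("isolation rating below 1500 VAC")
    if noisy_system and m.windings < 12:
        issues.append("8-winding part in a noisy system: plan EMC mitigation, "
                      "e.g. CMC on the cable side")
    return issues


candidate = Magnetics("hypothetical-8w", pairs=4, windings=8,
                      winding_tolerance_pct=3.0, isolation_vac=1500)
print(review(candidate, noisy_system=False))
print(review(candidate, noisy_system=True))
```

The same 8-winding part passes in a quiet design but is flagged in a noisy one, reflecting the trade-off above rather than a hard failure.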

Dealing with EMC issues can seem like “black magic,” and before testing, it is hard to be sure that emission levels will be within limits. Therefore, a designer needs to use every noise-reduction technique available and have some alternative enhancement options ready to mitigate the risk and ensure that levels are low enough in the final design. Regardless of the magnetics topology, both the 1:1 isolation transformer and the auto-transformer have their center taps routed to pins to provide additional termination, filtering, and biasing options.

According to the patent of Robert (Bob) W. Smith, the UTP cable pairs form transmission lines relative to each other. If these transmission lines are not terminated correctly, reflections can occur that degrade the signal quality. To prevent them, it is recommended that each center tap on the cable side (for both 8- and 12-winding components) be terminated separately through a 75-ohm resistor to the magnetics chassis ground. It is also good practice to add one high-voltage capacitor between the termination resistors and chassis ground to form an additional common-mode noise filter, similar to a split-termination topology. Note that each center tap should have its own termination resistor, while just one capacitor is adequate for all four chassis connections (see Figures 6 and 7 below).

Tip: It is good practice to use surge-resistant MELF 75-ohm termination resistors to increase ESD immunity on the magnetics cable side, though MELF resistors are notoriously frustrating for assemblers.

The center taps on the PHY side should generally be connected to signal ground through a capacitor for additional filtering. Like the Bob Smith termination resistors, each pair’s center tap should have its own capacitor to prevent stray current flow between pairs. These center taps may also be used to supply the common-mode bias voltage required by the PHY topology and/or to pull the lines up or down according to the PHY’s line-driver configuration. Check the PHY datasheet carefully to identify which biasing and line-driver configurations apply; these are discussed further in the next section.
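As a summary of the center-tap scheme described in the last few paragraphs, the sketch below emits a small netlist for the four pairs. The net names and reference designators are hypothetical, and the capacitor values (1 nF/2 kV to chassis, 100 nF per PHY-side center tap) are placeholder assumptions; take the actual values, and any bias connection the PHY needs instead of a plain capacitor, from the PHY and magnetics datasheets.

```python
def termination_netlist(pairs: int = 4, r_ohms: float = 75.0,
                        c_chassis: str = "1nF/2kV", c_phy: str = "100nF"):
    """Return (ref, value, net_a, net_b) tuples for the center-tap terminations."""
    parts = []
    for n in range(1, pairs + 1):
        # Cable side: an individual 75-ohm resistor per center tap, all meeting
        # at a common Bob Smith node
        parts.append((f"R{n}", f"{r_ohms:g} ohm", f"CT_CABLE{n}", "BOB_SMITH"))
        # PHY side: an individual capacitor per center tap to signal ground
        parts.append((f"C{n}", c_phy, f"CT_PHY{n}", "GND"))
    # One high-voltage capacitor from the common node to chassis ground
    parts.append((f"C{pairs + 1}", c_chassis, "BOB_SMITH", "CHASSIS"))
    return parts


for ref, value, a, b in termination_netlist():
    print(f"{ref:3} {value:8} {a:10} -> {b}")
```

The structure is the point here: four individual resistors, four individual PHY-side capacitors, and a single shared capacitor to chassis.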

Gigabit PHY

It is said that the PHY is the critical point where Ethernet data is transferred from the “digital” world into the “analog” real world, and vice versa. As can be seen in Figure 8 below, the PHY is the last active component before the signal goes to the magnetics and connector in all three configuration options.

During PHY selection, just two fundamental questions are the critical determinants, since most standard-defined properties are included in any PHY IC. The first is which interface connects to the data link layer device (MAC), and the second is which media options are supported on the cable side. As previously stated, the PHY transceiver has a “Medium Dependent Interface” (MDI) for real-world communications and a “Media Independent Interface” (MII) for MAC communications. The MII name is also used generically for the whole family of such interfaces, much as any black sandwich biscuit with vanilla creme tends to be called an Oreo. There are five alternatives available: MII, RMII, GMII, RGMII, and SGMII (for short, let us refer to them all as “xMII”). Each of these will be detailed further in the next section. The PHY needs an interface that matches the selected MAC. Similarly, the system-level requirement for the transmission medium, such as copper cable or fiber optics, needs to be considered. If copper UTP cable is to be used, the PHY should have a suitable MDI for the magnetics and the RJ-45 connector.
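To give a feel for how the xMII variants differ, the sketch below computes each interface's data rate from its width and clock. The figures are the commonly quoted nominal values at each interface's top speed (RGMII transfers data on both clock edges; SGMII is a 1.25 Gbaud serial link with 8b/10b coding); treat them as a quick reference and check the PHY and MAC datasheets for exact timing.

```python
# Nominal figures at each interface's top speed:
# (data bits per direction, clock in MHz, transfers per clock)
PARALLEL_XMII = {
    "MII":   (4, 25.0, 1),   # 100 Mb/s
    "RMII":  (2, 50.0, 1),   # 100 Mb/s with fewer pins
    "GMII":  (8, 125.0, 1),  # 1000 Mb/s
    "RGMII": (4, 125.0, 2),  # 1000 Mb/s, data on both clock edges (DDR)
}

SGMII_LINE_RATE_MBAUD = 1250.0  # one serial lane per direction, 8b/10b coded


def parallel_rate_mbps(name: str) -> float:
    """Data rate of a parallel xMII interface, in Mb/s per direction."""
    width, clock_mhz, transfers = PARALLEL_XMII[name]
    return width * clock_mhz * transfers


def sgmii_rate_mbps() -> float:
    """Payload rate of SGMII after removing the 8b/10b coding overhead."""
    return SGMII_LINE_RATE_MBAUD * 8 / 10


for name in PARALLEL_XMII:
    print(f"{name:5}: {parallel_rate_mbps(name):6.0f} Mb/s")
print(f"SGMII: {sgmii_rate_mbps():6.0f} Mb/s")
```

The trade-off is pin count versus clock speed: RMII halves MII's data pins by doubling the clock, and RGMII halves GMII's data pins by clocking on both edges.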

To demonstrate this point, look at the product page of the PHY selected in our design example, the KSZ9131. There are two options available: the KSZ9131MNX and the KSZ9131RNX. While the former supports GMII/MII, the latter only supports RGMII, so if the selected MAC only has an RGMII interface, the KSZ9131MNX would be the wrong choice. The MDI side is usually more straightforward when selecting a PHY, as it comes down to a choice between fiber and copper cable interfaces.
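The MAC-side check in this example boils down to a set intersection. In this sketch, the PHY entries restate the KSZ9131 variants described above, while the MAC's interface list is a hypothetical input. It needs Python 3.9+ for the `set[str]` annotation.

```python
# MAC-side interfaces supported by each PHY variant (as described above)
PHY_MAC_SIDE = {
    "KSZ9131MNX": {"GMII", "MII"},
    "KSZ9131RNX": {"RGMII"},
}


def usable_interfaces(phy: str, mac_interfaces: set[str]) -> list[str]:
    """xMII interfaces supported by both the PHY and the MAC (empty = mismatch)."""
    return sorted(PHY_MAC_SIDE[phy] & mac_interfaces)


mac = {"RGMII"}  # hypothetical MAC that only offers RGMII
for phy in PHY_MAC_SIDE:
    match = usable_interfaces(phy, mac)
    print(phy, "->", match if match else "no common interface: wrong PHY for this MAC")
```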

Reading and understanding any gigabit PHY datasheet may not appear that easy at first glance since there will be a lot of standard-defined properties listed in the features section. Unless you need to create a special implementation of a gigabit Ethernet interface, most of these features are just “nice to have” options that may make life a little easier. We will try to describe some of these briefly and if you feel some extra information is needed for your specific application, just google the appropriate keyword:

- Auto-Negotiation: This is best defined as the mutual agreement between network devices sharing a wired link on which speed, duplex mode, and flow-control settings they will use. This feature is very useful for backward and forward compatibility, and it is mandatory for gigabit Ethernet over twisted pair (1000BASE-T).

- Auto MDIX (Crossover): For 10/100BASE operation, the TX pair of one device needs to be connected to the RX pair of the other, and vice versa. The primitive solution was to swap the pair order in the cable itself. This then evolved into swapping the order in the device connector (MDI, the straight order, used on a PC; MDIX, the reversed order, used on a switch/hub). Finally, HP engineers patented the Auto-MDIX protocol, which lets the PHY decide which pairs to transmit and receive on and establish the proper link. Since gigabit Ethernet pairs are bi-directional anyway (the PHY separates the two directions using algorithms such as echo cancellation), the main reasons for using this function are backward compatibility and removing the need for crossover UTP cables.

- Energy Efficient Ethernet (EEE): As the name suggests, a PHY with the EEE feature (IEEE 802.3az) automatically puts its transmitter into Low-Power Idle (LPI) mode when no data needs to be sent for a specific time, signaling LPI to its link partner. As the receiver is always active, there is no risk of interrupting communications, and this can typically lead to power savings of more than 50%.

- IEEE 1588 Precision Time Protocol (PTP): This feature is generally needed by real-time applications ranging from factory automation to telecommunications. Integrated 1588 features can generate tightly synchronized, low-jitter clock signals, timestamp packets, and trigger events on a GPIO.

- Synchronized Ethernet (SyncE): For high-bandwidth, time-critical communications such as real-time voice and video transfer, the data buffering at each node needs to be minimized, and as a result, all nodes must be tightly synchronized to a shared clock signal. SyncE was created to carry this clock information between nodes through the PHY devices. Each PHY recovers the clock signal from the line and uses either an internal or external PLL to remove jitter before using the signal to synchronize operations.

The Ethernet PHY includes coding and modulation blocks, as defined by the IEEE standard, to overcome the physical limitations of the cable so that relatively cheap Cat5 UTP, which is specified for frequencies up to 100 MHz, can be used efficiently. If the PHY sent every bit in its own clock cycle (like 10BASE), a gigabit link would need a cable that supports a 1 GHz rate. Instead of sending one bit per clock cycle, 100BASE-TX and 1000BASE-T transmit one encoded symbol (one “Baud”) per cycle. 100BASE-TX encodes each 4-bit nibble into a 5-bit code group (the 4B/5B scheme) to improve reliability, so carrying 100 Mbit/s requires 100 × 5/4 = 125 MBaud, which needs a 125 MHz clock.
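To make the 4B/5B step concrete, the Python sketch below encodes bytes nibble by nibble using the standard 4B/5B data code groups and derives the symbol rate from the code ratio. It is a simplified illustration under stated assumptions: it covers only the sixteen data code groups (not control symbols such as the J/K start delimiter), sends the low nibble first as on the MII, and ignores the scrambling and MLT-3 line coding that 100BASE-TX applies afterwards. The function names are hypothetical.

```python
# Standard 4B/5B data code groups: 4-bit nibble value -> 5-bit code group.
FOUR_B_FIVE_B = {
    0x0: "11110", 0x1: "01001", 0x2: "10100", 0x3: "10101",
    0x4: "01010", 0x5: "01011", 0x6: "01110", 0x7: "01111",
    0x8: "10010", 0x9: "10011", 0xA: "10110", 0xB: "10111",
    0xC: "11010", 0xD: "11011", 0xE: "11100", 0xF: "11101",
}


def encode_4b5b(data: bytes) -> str:
    """Encode bytes into a string of 4B/5B code bits, low nibble first."""
    groups = []
    for byte in data:
        groups.append(FOUR_B_FIVE_B[byte & 0x0F])  # low nibble first
        groups.append(FOUR_B_FIVE_B[byte >> 4])    # then the high nibble
    return "".join(groups)


def symbol_rate_mbaud(data_rate_mbps: float, data_bits: int = 4, code_bits: int = 5) -> float:
    """Line symbol rate needed to carry data_rate_mbps after block coding."""
    return data_rate_mbps * code_bits / data_bits


if __name__ == "__main__":
    print(encode_4b5b(b"\x5A"))     # 8 data bits become 10 code bits
    print(symbol_rate_mbaud(100))   # 100BASE-TX: 125.0 MBaud
```

The 25% coding overhead is exactly what turns 100 Mbit/s into the 125 MHz symbol clock mentioned above.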

Gigabit Ethernet uses PAM-5 modulation, which defines five voltage levels on each pair. Each symbol carries two data bits, which by itself only needs four levels; the fifth level provides the redundancy used for trellis-coded forward error correction. The main difference between 100BASE and 1000BASE is that gigabit Ethernet uses all four pairs bidirectionally and simultaneously. Simple arithmetic shows that 1000 Mbps / 4 = 250 Mbps per pair, and encoding two bits per symbol gives 125 MBaud, i.e., a 125 MHz clock. So, using the same baud rate and clock frequency as Fast Ethernet, Gigabit Ethernet uses the available resources more efficiently and increases the link speed, all while staying within the certified limits of the relatively cheap Cat5 cable rather than requiring more expensive higher-category cables.
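The per-pair arithmetic can be checked with a few lines of Python. This is a back-of-the-envelope sketch, not a model of a 1000BASE-T PHY: the level values are nominal relative units, and the comparison at the end only illustrates why a fifth level is useful. Each transmit step sends one PAM-5 symbol on each of the four pairs, giving 5^4 = 625 four-dimensional combinations, while 1000BASE-T needs 2^9 = 512 of them for eight data bits plus one trellis-code bit.

```python
# Nominal PAM-5 levels in relative units (real transmit voltages are set by the standard).
PAM5_LEVELS = (-2, -1, 0, 1, 2)


def per_pair_symbol_rate_mbaud(link_rate_mbps: float, pairs: int = 4,
                               bits_per_symbol: int = 2) -> float:
    """Symbol rate on each pair when the link rate is split evenly across pairs."""
    per_pair_mbps = link_rate_mbps / pairs   # 1000 / 4 = 250 Mbit/s per pair
    return per_pair_mbps / bits_per_symbol   # 250 / 2 = 125 MBaud per pair


if __name__ == "__main__":
    print(per_pair_symbol_rate_mbaud(1000))  # 125.0

    available = len(PAM5_LEVELS) ** 4        # one symbol on each of 4 pairs: 625
    needed = 2 ** (8 + 1)                    # 8 data bits + 1 trellis bit: 512
    print(available, needed, available >= needed)
```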

This modulation and encoding is very common across the communication world, and transceivers have no problem modulating and demodulating (mod/demod) these Ethernet signals. Because a gigabit Ethernet PHY is backward-compatible, it needs both a 10 MHz (10BASE) and a 125 MHz (100/1000BASE) clock for the mod/demod processes. Additional clock references such as 2.5 MHz, 25 MHz, or 125 MHz may also be required for the PHY-to-MAC “xMII” communications, depending on the selected interface type. A PHY will also generally provide a 25 MHz or 125 MHz clock output for synchronizing with other PHYs or as an input reference for the MAC device.

Practically all Ethernet PHYs on the market have an internal PLL clock synthesizer, so they only need a reference crystal or oscillator, generally at 25 MHz. Always double-check the datasheet to confirm that the PHY has a built-in crystal driver before choosing a crystal. The frequency accuracy is generally advised to be better than 50 ppm, and using an oscillator may make the layout easier. Again, this is a trade-off between price, stability, and layout effort. If you select a crystal, check its specified load capacitance carefully and size the load capacitors to match.
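If you choose a crystal, the two load capacitors are commonly sized from CL = C1·C2/(C1 + C2) + Cstray, which for equal capacitors gives C1 = C2 = 2·(CL − Cstray). The sketch below applies that formula and converts a ppm tolerance into hertz. The 18 pF load capacitance and 4 pF stray capacitance (pins plus board) are assumed example values; use the crystal's datasheet and your own layout estimate.

```python
def load_cap_pf(crystal_cl_pf: float, stray_pf: float) -> float:
    """Value of each of two equal load capacitors (C1 = C2), in pF.

    From CL = C1*C2/(C1 + C2) + Cstray, which for C1 = C2 = C gives
    C = 2 * (CL - Cstray).
    """
    if stray_pf >= crystal_cl_pf:
        raise ValueError("stray capacitance must be below the crystal's CL")
    return 2.0 * (crystal_cl_pf - stray_pf)


def freq_error_hz(nominal_hz: float, tolerance_ppm: float) -> float:
    """Worst-case absolute frequency deviation for a given ppm tolerance."""
    return nominal_hz * tolerance_ppm / 1e6


if __name__ == "__main__":
    # Assumed example: 25 MHz crystal with CL = 18 pF and about 4 pF of stray capacitance.
    print(load_cap_pf(18.0, 4.0))    # 28.0 pF each; a nearby standard value is 27 pF
    print(freq_error_hz(25e6, 50))   # 1250.0 Hz at 50 ppm
```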

The “strap” or “bootstrap” pins of Ethernet devices set hard-coded parameters such as the device address, mode, xMII selection, and clock-output enable, and these settings are latched before the device completes power-up. Check the datasheet carefully for the strap options, since they are vendor-dependent and may change from device to device. The crucial point is to hold the device in reset long enough for the strap pins to settle at the desired voltage levels, which is easily achieved with an RC delay circuit on the reset line.
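To check an RC reset delay against the datasheet, model the reset node as a capacitor charging through a resistor: V(t) = Vcc·(1 − e^(−t/RC)), so the time to reach the deassertion threshold is t = −RC·ln(1 − Vth/Vcc). The sketch assumes the supply rises instantly, and the 10 kΩ, 10 µF, 0.7·Vcc threshold, and 10 ms minimum are hypothetical values; use the reset timing and input threshold from your PHY's datasheet.

```python
import math


def rc_time_to_threshold(r_ohm: float, c_farad: float, vth_fraction: float) -> float:
    """Seconds for an RC-charged node to rise from 0 V to vth_fraction * Vcc."""
    if not 0.0 < vth_fraction < 1.0:
        raise ValueError("threshold must be a fraction of Vcc between 0 and 1")
    return -r_ohm * c_farad * math.log(1.0 - vth_fraction)


def meets_reset_requirement(r_ohm: float, c_farad: float,
                            vth_fraction: float, min_reset_s: float) -> bool:
    """True if the RC-delayed reset stays below threshold for at least min_reset_s."""
    return rc_time_to_threshold(r_ohm, c_farad, vth_fraction) >= min_reset_s


if __name__ == "__main__":
    # Hypothetical values: 10 kOhm, 10 uF, reset deasserts at 0.7 * Vcc, 10 ms minimum.
    t = rc_time_to_threshold(10e3, 10e-6, 0.7)
    print(f"{t * 1e3:.1f} ms")                               # about 120.4 ms
    print(meets_reset_requirement(10e3, 10e-6, 0.7, 10e-3))  # True
```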

Another point related to PHY selection is whether the PHY has internal termination resistors. Correct termination is critical for signal integrity on both the MDI and the xMII sides. The MDI uses balanced differential pairs, so if the PHY does not have on-chip termination resistors, parallel split termination (preferred because it also filters common-mode noise) must be added to the board. Similarly, the xMII interface should have series termination resistors, either on-chip or on the board.

As briefly mentioned while describing the use of the magnetics center tap, there are two types of line drivers available for gigabit Ethernet: current mode and voltage mode. The designer should check which type the PHY uses, because it determines how the magnetics center tap and the split-termination center tap must be connected. Since voltage-mode drivers have various advantages over current-mode drivers, they are now more prevalent. However, the designer should still be aware of the design requirements that current-mode line drivers impose.

Tip: For further reading, check the Microsemi “ENT-AN0106 Application Note”.

Most Ethernet devices (PHYs, MACs, and switches) need a 1.2 V supply rail for the analog and digital cores as well as for the PLL. Other analog, digital, and I/O supplies are usually selectable from 3.3 V, 2.5 V, and 1.8 V, so check the datasheet carefully for the required power supply scheme. To allow single-supply operation, a device may have an integrated LDO controller (e.g., the KSZ9131) that drives an external FET to regulate the 3.3 V or 2.5 V supply down to the required 1.2 V. If the board already has a separate 1.2 V supply, this option may not be needed. As the FET selection is tied to the controller, follow the datasheet's recommendations for the FET specifications.
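Because an LDO regulates linearly, the external FET dissipates the full voltage drop multiplied by the core current. The sketch below estimates that power and the resulting temperature rise for 3.3 V and 2.5 V inputs. The 250 mA core current and the 100 °C/W thermal resistance are hypothetical placeholders; take the real current from the PHY datasheet and θJA from the FET datasheet and your copper area.

```python
def ldo_pass_fet_dissipation_w(v_in: float, v_out: float, i_load_a: float) -> float:
    """Power burned in a linear pass FET: input-output drop times load current."""
    if v_in <= v_out:
        raise ValueError("a linear regulator needs v_in > v_out")
    return (v_in - v_out) * i_load_a


def temperature_rise_c(power_w: float, theta_ja_c_per_w: float) -> float:
    """Approximate junction temperature rise above ambient."""
    return power_w * theta_ja_c_per_w


if __name__ == "__main__":
    i_core = 0.25       # hypothetical 1.2 V core current in amps
    theta_ja = 100.0    # hypothetical FET thermal resistance in C/W
    for v_in in (3.3, 2.5):
        p = ldo_pass_fet_dissipation_w(v_in, 1.2, i_core)
        print(f"{v_in} V input: {p:.3f} W, about +{temperature_rise_c(p, theta_ja):.0f} C")
```

Running from a 2.5 V rail instead of 3.3 V noticeably reduces the FET dissipation, which can be a useful input to the supply-scheme decision.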

Although it will be detailed in the next section, it is worth mentioning that the PHY and MAC should have a management interface connection on top of the “xMII” connections to establish correct communications.

After selecting the correct PHY to fulfill the requirements and following the above recommendations, the schematic design is fairly standard regardless of the chosen device and follows these steps:

- Supply correct power to rails with bulk and local decoupling capacitors

- Connections to magnetics and connectors with LEDs

- Connections to MAC with xMII

- Connections to MAC for management interface (MIIM)

- Supply the correct clock input

- Check and arrange “strap” options

- Check and arrange termination and biasing options

A sample schematic design using the KSZ9131 PHY is provided in Figure 13 below. Some explanatory notes and device-specific pin connections are given inside the schematic. You can find the schematic files for this figure on GitHub, as it’s much easier to view in Altium Designer.

PHY to MAC Communication

Digitized and demodulated/decoded data is transferred to the MAC (data link layer) device via the “xMII” media-independent interface. Most MII variants (except SGMII) are parallel interfaces similar to a parallel memory bus, and the transmitted and received signals must be synchronized using clock signals. Keep in mind that evolving technology not only increases bandwidth requirements but can also result in many interfaces being in use at the same time. This is why keeping at least one spare GPIO pin may help future-proof the overall design.

At the very beginning, a 10/100 Mbps Ethernet interface with an MII based on a 25 MHz clock had 16 pins defined. Then, with the appearance of Reduced MII (RMII) mode, the clock frequency was doubled to 50 MHz, and the pin count was reduced to 7. Since the data throughput of MII and RMII is not sufficient for gigabit Ethernet, we won’t go into detail on these two interface types in this article, apart from listing their pins in Figure 14 below.

The Gigabit-MII (GMII) supports maximum speeds of 1 Gbps using a 125 MHz clock rate, uses 25 pins, and is completely backward-compatible with the MII specification. Signal descriptions are given in Table 2 below.

Table 2. GMII Signal List

| Signal Name | Signal Description | Signal Direction | Path |
|---|---|---|---|
| TXD[7..0] | Data to be transmitted | MAC to PHY | Transmitter |
| GTXCLK | Transmit clock for 1 Gbps (125 MHz) | MAC to PHY | Transmitter |
| TXCLK | Transmit clock for 10/100 Mbps (2.5/25 MHz) | PHY to MAC | Transmitter |
| TXEN | Transmitter enable | MAC to PHY | Transmitter |
| TXER | Transmitter error (used to intentionally corrupt a frame, if necessary) | MAC to PHY | Transmitter |
| RXD[7..0] | Received data | PHY to MAC | Receiver |
| RXCLK | Receive clock (recovered from the received data) | PHY to MAC | Receiver |
| RXDV | Receive data valid | PHY to MAC | Receiver |
| RXER | Receive error | PHY to MAC | Receiver |
| COL | Collision detect (half-duplex mode only) | PHY to MAC | Receiver |
| CRS | Carrier sense (half-duplex mode only) | PHY to MAC | Receiver |

Reduced GMII (RGMII) is probably the most popular gigabit PHY-to-MAC interface, as it roughly halves the signal count compared with GMII (12 versus 25), bringing it close to the MII/RMII pin counts. For gigabit communications, data is clocked on both the rising and falling edges of the 125 MHz clock, which halves the number of data signals. When operating at 10/100 Mbps for backward compatibility, only the rising edge is used for data clocking. In addition to the data signal reduction, RGMII time-multiplexes TXEN with TXER on TXCTL and RXDV with RXER on RXCTL, and it eliminates the COL and CRS signals. A total of 12 signal pins are used for RGMII; signal descriptions are given in Table 3 below.
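The interface clock frequencies quoted in this section all follow from one relation: clock = link rate ÷ (data lines per direction × data edges per clock). The sketch below is a hypothetical helper, not part of any vendor tool, that reproduces the MII, GMII, and RGMII figures; it models RGMII at 10/100 Mbps as rising-edge only, as described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class XmiiMode:
    name: str
    data_width: int       # data lines per direction
    edges_per_cycle: int  # 1 = single data rate, 2 = double data rate


def clock_mhz(link_rate_mbps: float, mode: XmiiMode) -> float:
    """Interface clock needed to carry link_rate_mbps over the given bus."""
    return link_rate_mbps / (mode.data_width * mode.edges_per_cycle)


MII = XmiiMode("MII", 4, 1)
GMII = XmiiMode("GMII", 8, 1)
RGMII_1G = XmiiMode("RGMII 1000", 4, 2)       # both clock edges at 1 Gbit/s
RGMII_10_100 = XmiiMode("RGMII 10/100", 4, 1)  # rising edge only

if __name__ == "__main__":
    cases = [(10, MII), (100, MII), (1000, GMII), (1000, RGMII_1G), (100, RGMII_10_100)]
    for rate, mode in cases:
        print(f"{mode.name:13s} {rate:5d} Mbit/s -> {clock_mhz(rate, mode):g} MHz")
```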

Table 3. RGMII Signal List

| Signal Name | Signal Description | Signal Direction | Path |
|---|---|---|---|
| TXD[3..0] | Data to be transmitted | MAC to PHY | Transmitter |
| TXC | Transmit clock: 2.5 MHz for 10 Mbps, 25 MHz for 100 Mbps, 125 MHz for 1 Gbps (double edge) | MAC to PHY | Transmitter |
| TXCTL | Multiplexed TXEN and TXER. Rising clock edge: TXEN; falling clock edge: TXEN XOR TXER | MAC to PHY | Transmitter |
| RXD[3..0] | Received data | PHY to MAC | Receiver |
| RXC | Receive clock: 2.5 MHz for 10 Mbps, 25 MHz for 100 Mbps, 125 MHz for 1 Gbps (double edge) | PHY to MAC | Receiver |
| RXCTL | Multiplexed RXDV and RXER. Rising clock edge: RXDV; falling clock edge: RXDV XOR RXER | PHY to MAC | Receiver |
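The TXCTL/RXCTL encoding in Table 3 can be expressed as two tiny functions. This sketch models logic levels only, not timing, and the function names are illustrative. One consequence of the XOR scheme is that during a normal frame (enable high, error low) the CTL line is high on both edges, so it does not toggle every clock cycle.

```python
from __future__ import annotations


def encode_ctl(enable: bool, error: bool) -> tuple[bool, bool]:
    """Return (rising_edge_level, falling_edge_level) for TXCTL or RXCTL."""
    return enable, enable ^ error


def decode_ctl(rising: bool, falling: bool) -> tuple[bool, bool]:
    """Recover (enable, error) from the two CTL samples taken in one clock cycle."""
    return rising, rising ^ falling


if __name__ == "__main__":
    for en in (False, True):
        for er in (False, True):
            r, f = encode_ctl(en, er)
            assert decode_ctl(r, f) == (en, er)  # the encoding is lossless
            print(f"EN={int(en)} ER={int(er)} -> rising={int(r)} falling={int(f)}")
```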

The TXC signal is supplied by the MAC, and the PHY supplies the RXC signal. Both of these are source-synchronized clock signals, and they use both the falling and rising edges of the clock, which makes timing more critical. The RGMII standard requires the addition of a clock delay between 1.5 ns and 2 ns for both the TXC and RXC signals to ensure valid data signals are processed during the falling and rising edges. Luckily, most of the PHY and MAC devices support RGMII-ID (RGMII-Internal Delay), and no further action is needed other than to enable this ID feature and adjust the delay time. However, the designer needs to be 100% sure that both the MAC and the PHY support this internal delay feature. If it is not supported by one or both devices, then the delay must be applied as part of the PCB layout by using correctly designed serpentines, as shown in Figure 15 below.
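If the delay has to be built into the layout, the extra clock trace length follows directly from the propagation delay. The sketch reuses the 6.146 ps/mm FR4 microstrip figure given later in this article; treat that value as an assumption for your stack-up (stripline on inner layers is slower, roughly 7 ps/mm on typical FR4) and recompute with your own numbers. With 6.146 ps/mm, 1.5 ns to 2 ns corresponds to roughly 244 mm to 325 mm of extra clock trace, which shows why enabling RGMII-ID is usually far easier.

```python
def extra_length_mm(delay_ps: float, prop_delay_ps_per_mm: float = 6.146) -> float:
    """Extra clock trace length, relative to its data lines, that adds delay_ps."""
    return delay_ps / prop_delay_ps_per_mm


if __name__ == "__main__":
    for delay_ns in (1.5, 2.0):
        mm = extra_length_mm(delay_ns * 1000.0)
        print(f"{delay_ns} ns -> about {mm:.0f} mm of extra clock trace")
```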

While looking at Figure 15, your attention may be drawn to one strange point: the TX signals on the MAC side are connected to the TX signals on the PHY side. This is due to the naming conventions; every transmitter and receiver is named with respect to the MAC side, which means that the signals on the PHY side that are labeled with TX and RX correspond to the PHY receiver and PHY transmitter, respectively. Always double-check the naming conventions before designing the Ethernet layout.

Single-ended parallel buses need series termination at the driver so that the driver output impedance plus the series resistor matches the line's characteristic impedance, preventing reflections and EMI problems. The xMII signals need to be 50 ohm single-ended traces; the TX signals must be length-matched to TXC (TXCLK), and the RX signals must be length-matched to RXC (RXCLK). Check the PHY and MAC datasheets for internal termination resistors, and if they are not present, place them on the board. The resistor value is the difference between Z0 = 50 ohms and the line-driver output impedance. Values between 20 ohms and 40 ohms generally work, but some trial and error may be needed to get the best performance.
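The series-resistor rule above is simple arithmetic. The sketch below computes the resistor and the source-end reflection coefficient, Γ = (Zs − Z0)/(Zs + Z0), with and without it. The 18 Ω driver output impedance is a hypothetical value; take the real one from the device's IBIS model or datasheet.

```python
def series_termination_ohm(z0_ohm: float, driver_out_ohm: float) -> float:
    """Source-termination resistor so that driver impedance + resistor equals Z0."""
    r = z0_ohm - driver_out_ohm
    if r < 0:
        raise ValueError("driver output impedance already exceeds Z0")
    return r


def source_reflection_coeff(z_source_ohm: float, z0_ohm: float) -> float:
    """Reflection coefficient for a wave returning to the source end of the line."""
    return (z_source_ohm - z0_ohm) / (z_source_ohm + z0_ohm)


if __name__ == "__main__":
    z0 = 50.0
    r_out = 18.0  # hypothetical driver output impedance
    print(series_termination_ohm(z0, r_out))          # 32.0, so a 33 ohm part is a close fit
    print(source_reflection_coeff(r_out, z0))         # without a series resistor
    print(source_reflection_coeff(r_out + 33.0, z0))  # with 33 ohm fitted: close to zero
```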

Serial GMII (SGMII) is quite a different concept compared to the other modes in that it is similar to a Serializer/Deserializer (SerDes), using one TX pair, one RX pair, and one reference clock pair. The clock frequency is 625 MHz DDR, which is relatively high. Parallel GMII data is encoded, using the 8B/10B format, into TX and RX pairs. SGMII reduces the pin count and increases the speed, but the downside is that the layout is more complicated than for the xMII methods. Moreover, most of the integrated gigabit MACs available on the market only have support for the xMII interfaces. If the design needs a 1 G+ Ethernet interface, then SGMII is the only option for the PHY to MAC connection.
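The 625 MHz figure follows from the 8B/10B overhead: 1 Gbit/s of data becomes 1.25 GBaud on the line, and a clock that is sampled on both edges only needs half that frequency. A minimal sketch of that arithmetic, with illustrative function names:

```python
def line_rate_gbaud(data_rate_gbps: float, data_bits: int = 8, code_bits: int = 10) -> float:
    """Serial line rate after 8B/10B block coding."""
    return data_rate_gbps * code_bits / data_bits


def ddr_clock_mhz(line_rate: float) -> float:
    """Clock frequency (MHz) when data is sampled on both edges; line_rate in GBaud."""
    return line_rate * 1000.0 / 2.0


if __name__ == "__main__":
    rate = line_rate_gbaud(1.0)
    print(rate, "GBaud ->", ddr_clock_mhz(rate), "MHz DDR clock")
```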

Most SerDes high-speed interfaces require capacitive coupling to prevent receiver-transmitter common-mode voltage mismatches. It is recommended to have at least placeholders for 100 nF series capacitors close to the TX side of the SGMII pairs, along with parallel termination resistors according to the differential pair impedance (usually 100 ohms or 150 ohms).

In addition to the xMII pins listed above, two signals must be added for the MII management interface (MIIM, or MDIO/MDC interface). This interface is similar to the I2C bus and is used by upper-level devices (such as the MAC) to read the PHY status and to program the PHY registers that tune run-time parameters such as clock settings and fix-up routines. The MDC clock is supplied by the MAC; IEEE 802.3 Clause 22 specifies a maximum of 2.5 MHz, although many PHYs accept faster clocks, so check the datasheet. MDIO is a bidirectional data signal that is released to high impedance when not driven, so it needs a pull-up sized according to the number of PHY devices sharing the bus (generally between 1.5 kΩ and 10 kΩ). Besides this serial management interface (SMI), some manufacturers also reuse the MDC/MDIO pins internally to bridge to I2C or SPI for ease of use, especially in Ethernet switches.
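To see what actually travels over MDC/MDIO, the sketch below builds the 64 bit-times of an IEEE 802.3 Clause 22 management frame: a 32-bit preamble of ones, start (01), opcode (01 write, 10 read), 5-bit PHY address, 5-bit register address, a 2-bit turnaround, and 16 data bits. It returns a string for illustration only; "Z" marks a released line and "D" marks data bits driven by the PHY during a read. The example writes 0x1140 to register 0 (the basic control register, here enabling auto-negotiation, full duplex, and 1000 Mbit/s) of a PHY at an assumed address of 1.

```python
def mdio_c22_frame(phy_addr: int, reg_addr: int, write: bool, data: int = 0) -> str:
    """Return the 64 bit-times of a Clause 22 MDIO frame as a string."""
    if not (0 <= phy_addr < 32 and 0 <= reg_addr < 32):
        raise ValueError("PHY and register addresses are 5-bit fields")
    if not 0 <= data < (1 << 16):
        raise ValueError("data is a 16-bit field")

    preamble = "1" * 32                          # PRE: 32 ones
    start = "01"                                 # ST
    opcode = "01" if write else "10"             # OP: 01 = write, 10 = read
    addresses = f"{phy_addr:05b}{reg_addr:05b}"  # PHYAD then REGAD, MSB first
    if write:
        turnaround = "10"                        # TA driven by the MAC
        payload = f"{data:016b}"                 # data driven by the MAC
    else:
        turnaround = "Z0"                        # MAC releases MDIO; PHY drives the 0
        payload = "D" * 16                       # data driven by the PHY
    return preamble + start + opcode + addresses + turnaround + payload


def frame_time_us(mdc_hz: float, bits: int = 64) -> float:
    """Duration of one frame at the given MDC frequency, in microseconds."""
    return bits / mdc_hz * 1e6


if __name__ == "__main__":
    frame = mdio_c22_frame(phy_addr=1, reg_addr=0, write=True, data=0x1140)
    print(frame[32:48], frame[48:])             # header after the preamble, then data
    print(f"{frame_time_us(2.5e6):.1f} us per frame at 2.5 MHz MDC")
```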

Ethernet Switches

It is worth mentioning that you may not need to add multiple Ethernet PHY and MAC devices to your circuit board unless there are strict requirements for physically separating the interfaces. Multiport PHY and/or MAC switches are a popular way of increasing the Ethernet interface count with a single device. Some switches only provide PHY interfaces, while others combine PHY and MAC (xMII) interfaces. There are many alternatives; for example, the KSZ9897S combines a 5-port PHY, a 1-port RGMII/GMII/MII, and a 1-port SGMII (see Figure 18).

If you are not designing a pure Ethernet switch that routes all the PHY interfaces directly to RJ-45 connectors, you may want to connect another PHY to one of the switch PHYs. The best practice is to use isolation transformers for all the on-board PHY interfaces, just as at an RJ-45 connector. However, this method is expensive and uses a lot of board space. There is also the option of a direct PHY-to-PHY connection on the board, called Backplane Ethernet, which does not require transformers. Instead, all the pairs are capacitively coupled using series 100 nF capacitors. Although this is not guaranteed to work over long distances, in theory it works very well over relatively short ones. If you try this, do not forget to add biasing resistors after the AC coupling capacitors if, and only if, one of the PHYs has a current-mode line driver (see Figure 19).

Layout Considerations for Ethernet

After reading hundreds of datasheet pages, you may have a perfectly designed schematic that meets all the manufacturers' requirements and recommendations; however, all that effort can easily be ruined, or performance degraded, by fundamental Ethernet layout failures. A gigabit Ethernet interface has impedance-controlled differential and single-ended signals to consider, as well as length-matching and maximum-length limitations. Most of the time, these requirements are fulfilled automatically by sensible component placement, unless the designer overrides this approach. The problem is that if general layout rules are not obeyed (such as using solid reference planes under impedance-controlled traces), strictly matching trace lengths or keeping them below the maximum length limits is wasted effort. Therefore, we will briefly describe the generic high-speed layout rules first, to provide a foundation for the specific gigabit Ethernet requirements that follow.

Power Supply

High-speed digital ICs draw transient currents when switching. These transient currents should be supplied by bypass/decoupling capacitors, since the PCB trace between the supply pin and the power rail has a parasitic inductance (dependent on its length and width) that resists fast current changes. The main rule is to place bypass capacitors as close as possible to every supply pin, with at least one 10 nF and one 100 nF capacitor per pin.
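One way to see why mounting inductance matters is to model each capacitor as a series R-L-C and find its self-resonant frequency, f = 1/(2π·√(L·C)). Above that frequency the part behaves like an inductor. The 0.5 nH mounted ESL and 20 mΩ ESR below are assumed values; with them, the 100 nF part resonates around 22 MHz and the 10 nF part around 71 MHz, and at 125 MHz both impedances are set mostly by the inductance, which is why the capacitor has to sit right at the pin.

```python
import math


def self_resonant_freq_hz(c_farad: float, esl_henry: float) -> float:
    """Frequency at which the capacitor's ESL cancels its capacitance."""
    return 1.0 / (2.0 * math.pi * math.sqrt(esl_henry * c_farad))


def cap_impedance_ohm(freq_hz: float, c_farad: float, esl_henry: float,
                      esr_ohm: float = 0.0) -> float:
    """|Z| of a series R-L-C capacitor model at freq_hz."""
    w = 2.0 * math.pi * freq_hz
    reactance = w * esl_henry - 1.0 / (w * c_farad)
    return math.hypot(esr_ohm, reactance)


if __name__ == "__main__":
    esl = 0.5e-9   # assumed mounted ESL: package, pads, and vias to the planes
    esr = 0.02     # assumed ESR in ohms
    for c in (100e-9, 10e-9):
        srf = self_resonant_freq_hz(c, esl)
        z125 = cap_impedance_ohm(125e6, c, esl, esr)
        print(f"{c * 1e9:.0f} nF: SRF ~{srf / 1e6:.0f} MHz, |Z| at 125 MHz ~{z125:.3f} ohm")
```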

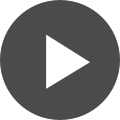

For multi-layer boards, there are separate power and ground planes, and so vias will inevitably be used in the path used to supply power. Since vias also have an inductive component, no via should be used between a bypass capacitor and its associated supply pin. This rule is illustrated in Figure 20 below.

Reference Plane

The basic rule for all electronics is that current always returns to its source. So there is always a return path for a signal, and this return path forms a loop antenna with the outbound signal path. If the loop area is kept small, EMI/EMC problems are minimized, but if for any reason the loop area becomes large, the designer may find themselves with severe EMI/EMC issues. These issues may degrade the performance of your device in ways you do not expect and, at the very least, are likely to cause you to fail the EMC testing required for the certifications needed to legally market and sell your product.

Both theory and experimental evidence show that, for high-speed signals, the return current follows the trace on the plane directly beneath it, which is its reference plane. Keeping a solid reference plane below all high-speed routing minimizes the loop area and prevents impedance discontinuities. If, for any reason, plane voids are created beneath a high-speed trace, stitching capacitors should be used to create a return path. Stitching capacitors are also recommended when a power plane serves as the reference plane for a high-speed signal, so that the return current has a path back to its source. These rules are illustrated in Figure 21 below, showing bad practices on the left and good practices on the right.

Stack-Up

For improved EMI/EMC performance and easier routing of impedance-controlled traces, it is a good idea to have at least four layers (e.g., Top - Ground - Power/Ground - Bottom). This does not mean it is impossible to use a two-layer PCB for a gigabit Ethernet interface: if a solid reference plane is provided for critical signals, guard traces are routed for the MDI signals, and there is no requirement for EMI/EMC compliance, it would most probably work on a lab bench. However, two-layer boards should really only be used for experimentation and prototyping. Four-layer boards are only slightly more expensive than two-layer boards at most manufacturers these days, and the benefits of a 4+ layer board are worth the minor additional expense.

Trace Properties and Geometry

Each trace on the PCB will have a characteristic impedance, calculated with respect to its reference plane. Altium has impedance calculation tools built-in; however, for high-speed signals, there are many other tools to assist with simulating the performance and verifying calculations. There are a lot of mathematical formulas as well as calculation tools available, such as the “Saturn PCB Tool (Free)” and a licensed tool offered by Polar Instruments that can perform these calculations.
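For a first estimate before running a field solver, the IPC-2141 closed-form approximations for surface microstrip are often used. The sketch below implements them; treat the results as rough (errors of several percent, and only within roughly 0.1 < w/h < 2.0), and confirm the final geometry with your fabricator's stack-up calculation or one of the tools mentioned above. The example dimensions (0.2 mm dielectric, εr = 4.3, 1 oz copper of 0.035 mm) are assumptions.

```python
import math


def microstrip_z0(w_mm: float, h_mm: float, t_mm: float, er: float) -> float:
    """IPC-2141 surface microstrip approximation for single-ended Z0 (ohms)."""
    return 87.0 / math.sqrt(er + 1.41) * math.log(5.98 * h_mm / (0.8 * w_mm + t_mm))


def edge_coupled_microstrip_zdiff(z0_ohm: float, s_mm: float, h_mm: float) -> float:
    """IPC-2141 approximation for the differential impedance of an edge-coupled pair."""
    return 2.0 * z0_ohm * (1.0 - 0.48 * math.exp(-0.96 * s_mm / h_mm))


if __name__ == "__main__":
    h, t, er = 0.2, 0.035, 4.3                 # assumed stack-up
    print(f"0.35 mm trace: Z0 ~ {microstrip_z0(0.35, h, t, er):.1f} ohm (xMII target 50)")
    z0_pair = microstrip_z0(0.2, h, t, er)
    zdiff = edge_coupled_microstrip_zdiff(z0_pair, 0.15, h)
    print(f"0.2 mm / 0.15 mm gap pair: Zdiff ~ {zdiff:.1f} ohm (MDI target 100)")
```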

The required trace width and dielectric spacing can easily be calculated for the target impedance from the PCB stack-up. Generally speaking, 45° bends are preferable to 90° bends. At the same time, traces, serpentines, and pairs should be separated as much as possible to prevent crosstalk and increase their glitch immunity, and stubs should be avoided. Finally, to prevent crosstalk between adjacent layers, avoid routing signals in parallel on adjacent layers unless there is a solid plane between them. These rules are illustrated in Figure 22 below, showing bad practices on the left and good practices on the right.

Transmission Lines

Microstrip patch and slot antennas are deliberately designed to radiate and receive electromagnetic fields. A poorly designed PCB, however, may contain many unintentional antennas that radiate at different frequencies. And if a trace behaves as a transmission line, reflections may be a serious problem. When laying out traces, the designer should roughly estimate whether a trace is long enough to act as an antenna, turning the conducted signal into a radiated one, and whether a termination resistor is needed to prevent reflections. The following rules of thumb explain these issues.

First, consider the antenna problem. Radiation is strongest when the trace length is λ/4, λ/2, or λ, where λ is the on-board wavelength at the frequency of interest. If the length is shorter than about λ/20, no significant antenna effect is expected. As a rule of thumb, we use λ/40 as the maximum length to stay on the safe side.
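The λ/20 and λ/40 limits are easy to compute once the on-board wavelength is known. The sketch below derives the wavelength from the FR4 microstrip propagation delay of 6.146 ps/mm quoted in the next paragraph (an assumption for your stack-up) and uses 125 MHz as an example frequency. Fast edges contain harmonics far above the clock, so apply the check to the highest frequency that matters for your signal.

```python
def wavelength_mm(freq_hz: float, prop_delay_ps_per_mm: float = 6.146) -> float:
    """On-board wavelength for a trace with the given propagation delay."""
    velocity_mm_per_s = 1.0 / (prop_delay_ps_per_mm * 1e-12)
    return velocity_mm_per_s / freq_hz


def max_safe_length_mm(freq_hz: float, fraction: float = 40.0,
                       prop_delay_ps_per_mm: float = 6.146) -> float:
    """Rule-of-thumb length limit (lambda / fraction) to avoid antenna effects."""
    return wavelength_mm(freq_hz, prop_delay_ps_per_mm) / fraction


if __name__ == "__main__":
    f = 125e6  # example: the 125 MHz symbol clock of 100BASE-TX and 1000BASE-T
    print(f"lambda    = {wavelength_mm(f):.0f} mm")
    print(f"lambda/20 = {max_safe_length_mm(f, 20):.1f} mm")
    print(f"lambda/40 = {max_safe_length_mm(f, 40):.1f} mm")
```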

The second problem comes from the signal rise time, which is directly related to bandwidth: the sharper the edges, the higher the bandwidth. For a microstrip on an FR4 board, the propagation delay is about 6.146 ps/mm. For a signal with a rise time of 340 ps, the trace may be left unterminated if it is shorter than (1/10) × (340 ps / 6.146 ps/mm) ≈ 5.53 mm. It is always better to have a termination resistor, but below this length, reflections and standing waves should not cause problems.
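The same rule can be wrapped in a small function so it can be applied to every signal class. The 1/10 ratio and the 6.146 ps/mm delay come from the paragraph above; the bandwidth helper uses the common single-pole rule of thumb BW ≈ 0.35 / t_rise, which is an added approximation rather than something stated in this article.

```python
def max_unterminated_length_mm(rise_time_ps: float, prop_delay_ps_per_mm: float = 6.146,
                               ratio: float = 10.0) -> float:
    """Trace length below which termination is optional, per the 1/10 rule above."""
    return (rise_time_ps / prop_delay_ps_per_mm) / ratio


def bandwidth_ghz(rise_time_ps: float) -> float:
    """Rule-of-thumb signal bandwidth BW ~ 0.35 / t_rise (10-90 % rise time)."""
    return 0.35 / (rise_time_ps * 1e-3)


if __name__ == "__main__":
    tr = 340.0  # rise time in ps, the example used in the text
    print(f"max unterminated length ~ {max_unterminated_length_mm(tr):.2f} mm")
    print(f"signal bandwidth ~ {bandwidth_ghz(tr):.2f} GHz")
```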

Since the principles of high-speed Ethernet layout design are a massive topic, it is nearly impossible to cover every aspect of them in this brief article. With the generic rules of thumb briefly covered, the following table lists some typical gigabit Ethernet layout restrictions and requirements.

Table 4. Gigabit Ethernet Layout Requirements

| Interface | Parameter | Requirement |
|---|---|---|
| MDI | Trace Impedance | 100 Ω Differential (95 Ω ±15%) |
| MDI | Termination Requirement | Parallel Termination (100 Ω or split 2 × 49.9 Ω) |
| MDI | Max. Intra-Pair Skew | < 1.6 ps (~250 µm) |
| MDI | Max. Inter-Pair Skew | < 330 ps (~50 mm) |
| MDI | Max. Trace Length Between PHY and Magnetics | < ~100 mm (shorter is better) |
| MDI | Min. Pair-to-Pair Spacing | > 450 µm |
| MDI | Max. Allowed Vias | 2 vias for all MDI traces |
| xMII | Trace Impedance | 50 Ω Single-Ended (50 Ω ±15%) |
| xMII | Termination Requirement | Series Termination (20 Ω to 40 Ω according to the driver output impedance) |
| xMII | Max. Drive Load | 35 pF (these interface outputs are not designed to drive multiple loads, connectors, or cables; it is better to use them on-board only) |
| xMII | Recommended Max. Trace Length | 50 mm |
| xMII | Max. Trace Length | 150 mm, only if all traces are placed on inner layers (not recommended) |
| xMII | Length Matching Tolerance | 10 mm (TX signals with TXC/TXCLK, RX signals with RXC/RXCLK) |
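The MDI skew limits in Table 4 are given in picoseconds, while a layout tool reports lengths. The sketch below converts length mismatches to time using the 6.146 ps/mm microstrip figure from earlier (an assumption for your stack-up) and flags violations of the intra-pair and inter-pair limits. The pair names TRD0 to TRD3 and the lengths are hypothetical; substitute the net names and lengths exported from your own layout.

```python
from __future__ import annotations

PROP_DELAY_PS_PER_MM = 6.146  # FR4 microstrip figure used earlier in this article


def skew_ps(length_a_mm: float, length_b_mm: float) -> float:
    """Timing skew caused by a length mismatch between two traces."""
    return abs(length_a_mm - length_b_mm) * PROP_DELAY_PS_PER_MM


def check_mdi_skew(pairs: dict[str, tuple[float, float]],
                   intra_limit_ps: float = 1.6,
                   inter_limit_ps: float = 330.0) -> list[str]:
    """Return human-readable violations of the Table 4 MDI skew limits."""
    problems = []
    pair_length = {}
    for name, (p_mm, n_mm) in pairs.items():
        intra = skew_ps(p_mm, n_mm)
        if intra > intra_limit_ps:
            problems.append(f"{name}: intra-pair skew {intra:.2f} ps > {intra_limit_ps} ps")
        pair_length[name] = (p_mm + n_mm) / 2.0  # mean length of the pair
    inter = skew_ps(max(pair_length.values()), min(pair_length.values()))
    if inter > inter_limit_ps:
        problems.append(f"inter-pair skew {inter:.1f} ps > {inter_limit_ps} ps")
    return problems


if __name__ == "__main__":
    # Hypothetical P/N lengths in mm from the PHY to the magnetics.
    layout = {
        "TRD0": (42.00, 42.10),
        "TRD1": (38.00, 38.50),  # 0.5 mm mismatch, about 3 ps
        "TRD2": (45.00, 45.00),
        "TRD3": (40.00, 40.05),
    }
    for issue in check_mdi_skew(layout) or ["all MDI skew limits met"]:
        print(issue)
```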

In addition to these specified restrictions, the discrete magnetics layout may also need special care. A separate ground plane should be created to provide improved ESD and EMI/EMC immunity, and it should be strictly separated from all other planes by at least 2 mm (See Figure 23).

The purpose of this article is to guide designers who want to add gigabit Ethernet interfaces to their circuit boards, and we have tried to cover all the major theoretical aspects. The Altium Learning Hub has many articles that go into more depth on high-speed routing, Ethernet matching, and other topics related to successfully routing gigabit Ethernet and other high-speed signals. This guide should give you a good foundation in how high-speed routing techniques apply to gigabit Ethernet specifically.

While I’ve tried to provide a good guide to the basics of successful gigabit Ethernet routing, it’s always a good idea to follow the recommended layout and guidelines in the datasheets of the ICs you are working with. As a follow-up to this article, we’ll look at setting up design rules specifically for gigabit Ethernet. Having the right design rules can make the difference between a painful routing and frustrating prototyping/testing experience and a design that Altium's rule checking keeps on track for success.

Working with gigabit Ethernet can be challenging the first time, but no more so than any other high-speed interface. In fact, gigabit Ethernet is probably the most forgiving high-speed interface. By using good layout and routing practices, along with correct termination and other component selections in your schematic, your design is likely to be highly successful. Using four or more layers on your board will greatly ease routing and increase your chance of success. It also helps you follow the various grounding schemes used in gigabit Ethernet.

Would you like to find out more about how Altium can help you with your next PCB design? More questions about Ethernet differential impedance? Talk to an expert at Altium.